By Lance Eliot, the AI Trends Insider

Scaling up is all the buzz.

What does it mean to seek or reach scale?

Generally, for start-ups, the notion is that you start relatively small, perhaps making a prototype or a minimum viable product (MVP), and show it off to gain attention and funding. Potential and actual investors usually believe that the one-off version of your product can become a mass-produced one.

This is not always the case, or it might be exorbitantly costly to achieve.

Something that you hand-crafted could be terribly difficult and expensive to recreate and produce in any sizable volume.

Furthermore, your product might work for the handful of situations that you tested, but once it is put into wider use, you could unexpectedly discover limitations or flaws that are fatal or that constrain the market potential for the product.

Here, then, is the question for the day: Will AI-based self-driving cars be able to scale?

Many outside the driverless car industry are assuming that if you can make one self-driving car, you can make zillions of them.

This assumption is not necessarily the case.

It is important to clarify what I mean when referring to true self-driving cars.

To be a true self-driving car, the AI needs to drive the car entirely on its own without any human assistance during the driving task.

These driverless cars are considered Level 4 and Level 5, while a car that requires a human driver to co-share the driving effort is usually considered Level 2 or Level 3. The cars that co-share the driving task are described as being semi-autonomous, and typically contain a variety of automated add-ons that are referred to as ADAS (Advanced Driver-Assistance Systems).

There is not yet a true self-driving car at Level 5, and we don’t yet know whether this will be possible to achieve, nor how long it will take to get there.

Meanwhile, the Level 4 efforts are gradually trying to get some traction by undergoing very narrow and selective public roadway trials, though there is controversy over whether this testing should be allowed per se (we are all life-or-death guinea pigs in an experiment taking place on our highways and byways, some point out).

Since the semi-autonomous cars require a human driver, such cars aren’t particularly important to the scaling question. There is essentially no difference between using a Level 2 or Level 3 versus a conventional car when it comes to a matter of scale.

Notably, in spite of those dopes that keep posting videos of themselves falling asleep at the wheel of a Level 2 or Level 3 car, do not be misled into believing that you can take your attention away from the driving task while driving a semi-autonomous car.

You are the responsible party for the driving actions of the car, regardless of how much automation might be tossed into a Level 2 or Level 3.

For my framework about AI self-driving cars, see this link: https://aitrends.com/ai-insider/framework-ai-self-driving-driverless-cars-big-picture/

For why achieving AI autonomous cars is considered a moonshot, see my explanation here: https://aitrends.com/ai-insider/self-driving-car-mother-ai-projects-moonshot/

To see details about the levels of autonomous cars, refer to my posting here: https://aitrends.com/ai-insider/reframing-ai-levels-for-self-driving-cars-bifurcation-of-autonomy/

On the dangers of AI self-driving cars being a noble cause that gets out-of-hand, see my explanation here: https://www.aitrends.com/selfdrivingcars/noble-cause-corruption-and-ai-the-case-of-ai-self-driving-cars/

Barriers To Scaling Up

True self-driving cars are chock full of specialized sensors, including cameras, radar, LIDAR, ultrasonic units, and various other advanced electronics.

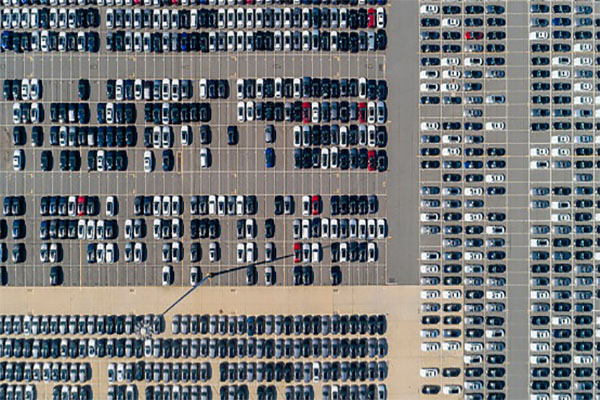

Currently, the driverless cars that are being tried out on our roadways are few and far between, especially when you consider that the number of conventional cars in the United States alone is about 250 million.

The number of (somewhat) self-driving cars is a tiny drop in the bucket of the total car population.

Pundits who believe in a magical future are prone to suggesting that someday soon we’ll have only self-driving cars and no remaining conventional cars. The hope is that by getting rid of all conventional cars, including Level 2 and Level 3 semi-autonomous cars, there won’t be any pesky, car-crashing human drivers anymore.

Well, let’s be serious and agree that there’s no economically sound way that we would all overnight discard our conventional cars and instead adopt true self-driving cars.

Even if, by magic wand, we all did agree to stop using our conventional cars, consider the length of time it would take to ramp up and produce enough self-driving cars to handle the needed volume of driving in the U.S. alone (approximately 3.12 trillion miles per year).
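To get a rough feel for that length of time, here is a back-of-the-envelope sketch. It assumes the fleet to be replaced is the roughly 250 million conventional cars cited above, replaced one-for-one, and it uses a hypothetical production rate of 17 million vehicles per year, which is in the neighborhood of recent annual U.S. new-vehicle sales. Neither assumption is a forecast.

```python
# Back-of-the-envelope: years needed to replace the U.S. car fleet.
# Both inputs are illustrative assumptions, not forecasts.
US_CAR_FLEET = 250_000_000                 # conventional cars in the U.S. (figure cited in the article)
ASSUMED_CARS_BUILT_PER_YEAR = 17_000_000   # hypothetical yearly production rate


def years_to_replace(fleet_size, cars_built_per_year):
    """Years to build fleet_size cars at a constant yearly production rate."""
    return fleet_size / cars_built_per_year


years = years_to_replace(US_CAR_FLEET, ASSUMED_CARS_BUILT_PER_YEAR)
print(f"Roughly {years:.1f} years at the assumed rate")
```

Under these assumptions the answer lands between fourteen and fifteen years, and that presumes every assembly line builds nothing but self-driving cars from day one.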

The scaling aspect is a serious one and not just because of the automobile manufacturing efforts.

Many of the specialized sensors used in today’s experimental self-driving cars are not yet being sold by the millions of units. Thus, those sensor makers would need to scale up to produce enough sensors to fit onto the millions upon millions of self-driving cars that we’ll presumably be seeing built and fielded.

As an aside, the upside potential of riding the self-driving car gravy train is part of the reason that many of the sensor makers are fighting fiercely to get into the experiments taking place today for self-driving cars. Their hope is that by becoming the default sensor for a particular driverless car, their product will surf the massive wave ahead as millions upon millions of self-driving cars are ultimately made and sold.

One important aspect to keep in mind is that the existing experimental tryouts of self-driving cars are being undertaken in a very controlled and small-sized manner.

Once self-driving cars are available in the wild, presumably, the driverless cars will be used as much as possible, perhaps running 24×7. This makes sense as a way to get your money’s worth out of the self-driving car. Plus, if demand is going to shoot through the roof for ridership, the odds are that the driverless cars will be in motion much or most of the time.

Can the sensors being used today on driverless car tryouts handle the rigors of the real world on an ongoing and extensive basis?

We don’t really know if the sensors can handle reality and the hardships of being used all the time.

Most of the existing driverless car tryouts are being overseen by top-notch maintenance crews. These master crews make sure that the moment a sensor even burps, it gets replaced. Also, when the driverless car comes into the special facility for an evening’s rest, the sensors and other parts of the self-driving car are reviewed to make sure all is in tiptop shape.

It is doubtful that any massive rollout of self-driving cars could entertain the same kind of hand-holding.

As a scaling factor, we don’t know if the sensors can scale up to high usage and the real-world environment of harsh weather, people who bang their shopping carts into your parked driverless car, and the other daily abuses that our everyday cars deal with.

For more about sensors and sensor fusion, see my explanation here: https://www.aitrends.com/ai-insider/multi-sensor-data-fusion-msdf-and-ai-the-case-of-ai-self-driving-cars/

On the topic of AI autonomous cars running 24×7, see my indication here: https://aitrends.com/ai-insider/non-stop-ai-self-driving-cars-truths-and-consequences/

For my assessment of the potential extinction of human driving, use this link: https://www.aitrends.com/ai-insider/human-driving-extinction-debate-the-case-of-ai-self-driving-cars/

For the importance of Linear No-Threshold (LNT) in these matters, see my discussion here: https://www.aitrends.com/ai-insider/linear-no-threshold-lnt-and-the-lives-saved-lost-debate-of-ai-self-driving-cars/

Crucial Scaling Factors

Another scaling factor involves people.

There are some automakers that insist that their self-driving cars will only need to ask a human rider for their desired destination, and otherwise, no further interaction is needed.

That’s nonsensical.

Humans riding in today’s Uber and Lyft cars that are driven by a human driver are known for asking and directing the drivers in zillions of varying ways. The need for an actual conversation is important in many ridesharing circumstances.

Can the limited Natural Language Processing (NLP) that an Alexa or Siri provides today be scaled to handle the fluent driving-related conversations that human riders will demand?

Researchers are toiling away at this, but the fluent AI-driver does not yet exist.

Also, what happens when a passenger suddenly starts choking on that leftover T-bone steak that they brought into the self-driving car as a late-night snack?

By removing a human driver from the driverless car, you are also removing the human actions that a driver might render beyond the sole act of driving a car.

Will the notion of not having a human driver be able to scale in the sense that passengers will no longer have a fellow human in the car with them?

For my prediction about new jobs for the use of AI autonomous cars, see this link: https://aitrends.com/ai-insider/future-jobs-and-ai-self-driving-cars/

Here are my views on what will happen when people sleep in their self-driving cars: https://www.aitrends.com/ai-insider/sleeping-inside-an-ai-autonomous-self-driving-car/

Some people might become addicted to using self-driving cars; see my explanation here: https://aitrends.com/ai-insider/addicted-to-ai-self-driving-cars/

For my explanation about dealing with passenger panic attacks, see this link: https://www.aitrends.com/ai-insider/when-humans-panic-while-inside-an-ai-autonomous-car/

Most of the current tryouts of driverless cars are taking place in confined geographical areas, such as restricted parts of Phoenix, Las Vegas, San Francisco, and the like.

Will an AI self-driving car readily scale up to be a good driver in other places?

Some believe that driverless cars can only work properly if the chosen geographic area has been exhaustively mapped and remapped. If that’s the case, the effort and cost to do that mapping might preclude being able to easily have the self-driving car drive in other parts of the country.

Via the use of Machine Learning and Deep Learning, the AI is supposed to get better and better at driving.

Of course, if the self-driving car is only driving in the same place over and over, the odds are that it isn’t learning new things about driving that might well be encountered in other locations. This is like a teenage novice driver who gets used to driving in their own neighborhood and then is terrified to drive on open highways or in places they’ve never driven before.

One criticism of the U.S.-based driverless car efforts has been that the focus on driving in the United States is leading to self-driving cars that are only familiar with U.S. driving aspects, including the legal requirements and the cultural rules-of-the-road elements.

Will the AI systems be scalable to drive in international settings?

Some believe it will be child’s play, merely a matter of changing a few parameters in the software, but anyone who has driven in countries around the world knows that it is harder to switch and adjust to foreign driving practices than it might seem on the surface.

For my coverage of international aspects, see the link here: https://aitrends.com/selfdrivingcars/internationalizing-ai-self-driving-cars/

For more about Machine Learning and AI autonomous cars, see my indication here: https://www.aitrends.com/ai-insider/machine-learning-ultra-brittleness-and-object-orientation-poses-the-case-of-ai-self-driving-cars/

It is likely that self-driving cars will need to drive illegally at times; here’s my explanation: https://aitrends.com/selfdrivingcars/illegal-driving-self-driving-cars/

Additional Scaling Elements

An often-quoted estimate provided by Intel suggests that each driverless car will generate at least 4GB of data per day (likely more), based upon all the camera images and video collected, the radar data collected, and so on.

In theory, the data is going to be pushed up to the cloud of the automaker or tech firm that made the AI systems for the self-driving car, using the same OTA (Over-The-Air) electronic communication that is used to push software updates down into the car.

On a scaling basis, this means a ton of electronic communications across networks, along with a ton of storage that will be needed in the cloud. Multiply 4GB by 250 million cars by 365 days of the year, and the volume is daunting.
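To make "daunting" concrete, the multiplication can be carried out directly. The short sketch below uses only the figures quoted above (4GB per car per day, 250 million cars, 365 days) and treats them as parameters, so a different per-car estimate can be swapped in. It assumes decimal units, with one exabyte equal to one billion gigabytes, and it assumes every car uploads all of its data, which is the article's hypothetical rather than a prediction.

```python
# Fleet-wide data volume, using the figures quoted in the article.
GB_PER_CAR_PER_DAY = 4        # per-car daily data estimate (article's figure)
CARS = 250_000_000            # size of the U.S. car fleet (article's figure)
DAYS_PER_YEAR = 365
GB_PER_EXABYTE = 1_000_000_000  # decimal units: 1 EB = 10**9 GB


def fleet_data_gb(gb_per_car_per_day, cars, days):
    """Total gigabytes generated by the whole fleet over the given number of days."""
    return gb_per_car_per_day * cars * days


daily_gb = fleet_data_gb(GB_PER_CAR_PER_DAY, CARS, 1)
yearly_gb = fleet_data_gb(GB_PER_CAR_PER_DAY, CARS, DAYS_PER_YEAR)

print(f"Per day:  {daily_gb / GB_PER_EXABYTE:.0f} exabyte(s)")
print(f"Per year: {yearly_gb / GB_PER_EXABYTE:.0f} exabytes")
```

With these inputs the fleet produces one exabyte per day, or 365 exabytes per year, and any increase in the per-car figure scales the total proportionally.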

Self-driving cars are going to be communicating with each other via V2V (vehicle-to-vehicle) electronic communication. This will be handy since a self-driving car that encounters a cow in the roadway can electronically alert any upcoming driverless cars that are nearing that juncture of a highway.

Today, the use of V2V among self-driving cars is sparse or non-existent.

On a scaling basis, what happens once thousands of self-driving cars are zooming along on a freeway and they are all sending out a bombardment of V2V messages to each other?
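One way to see why the volume of messages is a concern is to count them under a deliberately simple model. The sketch below assumes that every car within radio range broadcasts at the same fixed rate and that every other car in range receives each broadcast. Both the function name and the rate of 10 broadcasts per second are hypothetical choices made for illustration, and real V2V protocols limit range and manage congestion, so treat the result as an upper-bound intuition rather than a measurement.

```python
# Simplified count of V2V message receptions among cars in mutual radio range.
# Assumption: every car hears every other car's broadcasts (a worst case).
ASSUMED_BROADCASTS_PER_CAR_PER_SECOND = 10  # hypothetical rate, for illustration


def v2v_receptions_per_second(cars_in_range, broadcasts_per_car_per_second):
    """Each of n cars broadcasts; each broadcast is received by the other n - 1 cars."""
    return cars_in_range * (cars_in_range - 1) * broadcasts_per_car_per_second


for cars in (10, 100, 1000):
    total = v2v_receptions_per_second(cars, ASSUMED_BROADCASTS_PER_CAR_PER_SECOND)
    print(f"{cars:>5} cars in range -> {total:,} receptions per second")
```

The point of the sketch is the shape of the growth: going from 10 cars to 1,000 cars multiplies the number of cars by 100 but multiplies the message receptions by roughly 10,000.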

For more about V2V and the role of omnipresence, see my indication here: https://aitrends.com/ai-insider/omnipresence-ai-self-driving-cars/

For added coverage about OTA, see my explanation here: https://aitrends.com/ai-insider/air-ota-updating-ai-self-driving-cars/

For processing by supercomputers of AI autonomous car data, see my indication here: https://aitrends.com/ai-insider/exascale-supercomputers-and-ai-self-driving-cars/

Conclusion

Automakers and tech firms are focused on taking baby steps right now. Their aim is to produce a self-driving car that can safely drive on our roadways.

Worrying about scale is not quite as crucial, especially if you aren’t even sure that you can get a self-driving car to function at an autonomous level.

This is often the stance of inventors who are making something brand new that has never existed before. They are concerned primarily with getting the darned invention itself to work. They assume or hope that scaling will eventually be possible, though it’s not necessarily top-of-mind, and they figure it is a matter that can be deferred until it later becomes paramount.

You might be familiar with the famous story about Thomas Edison and the invention of the light bulb, though you’ve likely only heard the version that’s a half-truth.

Edison and his crew tested around 6,000 different materials as the filament for a light bulb.

Light bulbs already existed, but they were generally impractical due to the filament being overly costly and so flimsy that it wouldn’t last very long.

After thousands of trials using different kinds of filaments, he finally found one that was the Goldilocks version, strong enough to last sufficiently and inexpensive enough to be real-world practical.

I tell the story because Edison’s efforts weren’t per se about inventing the light bulb and instead dealt with making a light bulb that could be scalable.

For self-driving cars, we are still in the period prior to having a working light bulb, as it were, and once we do have a working one, the race will be on to figure out how it can be scaled up.

Scale will matter since self-driving cars that can only work in narrow ways in narrow places will not be much of a moneymaker and might be discounted as toy-like efforts rather than something applicable to the real world.

Scaling up could be the fly in the ointment of self-driving car emergence.

Copyright 2020 Dr. Lance Eliot

This content is originally posted on AI Trends.

[Ed. Note: For readers interested in Dr. Eliot’s ongoing business analyses about the advent of self-driving cars, see his online Forbes column: https://forbes.com/sites/lanceeliot/]