Understanding Robot Embodiment

When we talk about robots, it’s easy to get caught up in the fancy AI or the cool-looking arms. But there’s a whole other layer to consider: how the robot actually exists and interacts with the physical world. This is what we call embodiment.

The Challenge of Physical Interaction

Think about it. Unlike a computer program that lives purely in the digital space, a robot has to deal with gravity, friction, uneven surfaces, and all sorts of unpredictable stuff. This physical reality is a huge part of what makes robotics so much harder than just writing code. You can’t just tell a robot to ‘jump’ and expect it to work perfectly; it needs to understand its own weight, the force it can exert, and how the ground will react. It’s like trying to play a video game where your character suddenly has to deal with real-world physics – things get complicated fast.

Sensors and Actuators in a Closed Loop

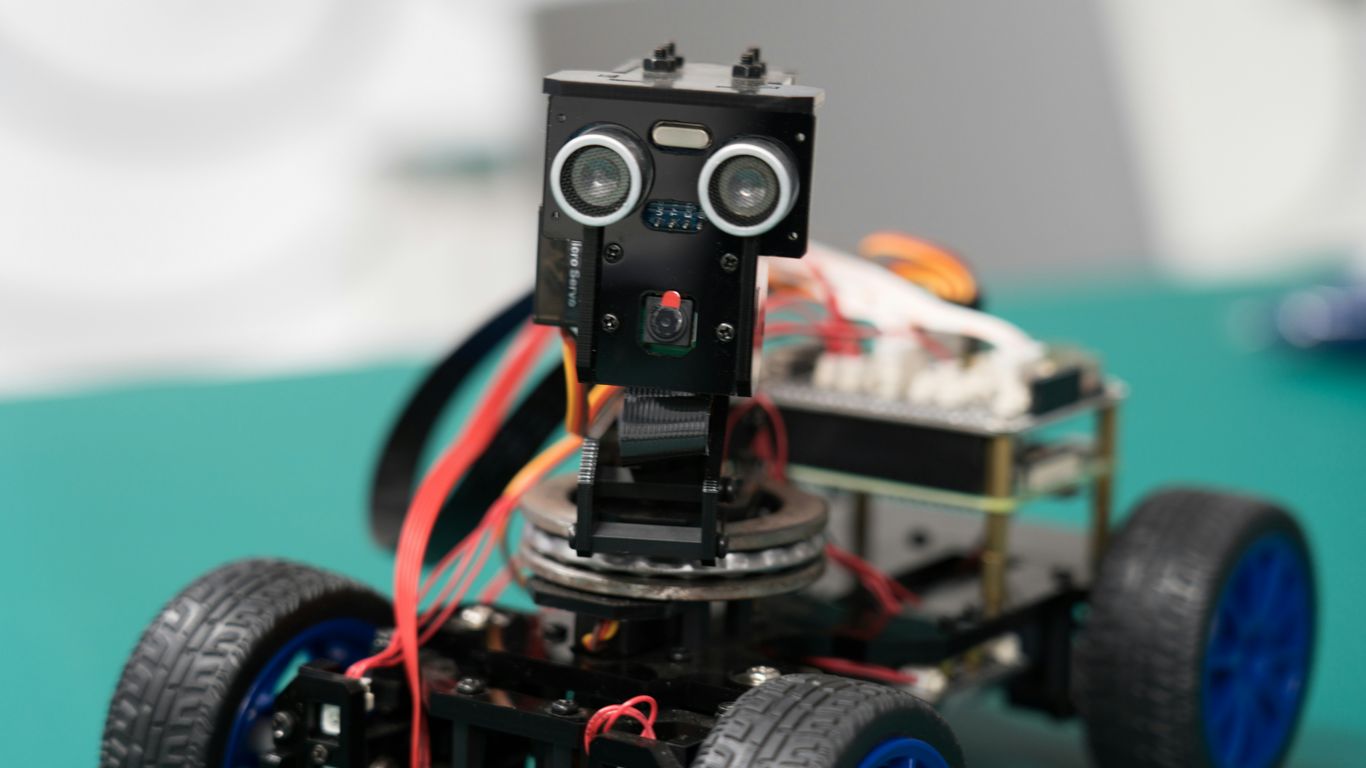

So, how does a robot actually ‘feel’ and ‘act’ in the world? It’s all about sensors and actuators working together in a continuous cycle. Sensors are the robot’s senses – cameras for eyes, microphones for ears, touch sensors, and so on. They gather information about the environment. Actuators are the robot’s muscles – motors, gears, and limbs that allow it to move and manipulate things. The information from the sensors goes to the robot’s ‘brain’ (its computer), which decides what to do. Then, the actuators carry out that action. This creates a loop: sense, think, act, sense again. It’s a constant feedback process.

Here’s a simplified look at that loop:

- Sensing: Gathering data from the environment (e.g., camera sees an obstacle).

- Processing: Analyzing the data and making a decision (e.g., computer determines the obstacle is too close).

- Acting: Executing a command (e.g., motors move the robot’s wheels to stop).

- Feedback: The sensors detect the result of the action (e.g., the camera confirms that the robot has stopped and the obstacle is no longer getting closer).
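To make the four steps concrete, here is a minimal Python sketch of the loop for a toy one-dimensional robot driving toward a wall. The class `SimulatedRobot`, the function names, and the numbers (0.5 m per step, a 1 m stopping distance) are hypothetical and chosen only for illustration; a real robot would read actual sensors and drive real motors.

```python
from dataclasses import dataclass


@dataclass
class SimulatedRobot:
    """Toy 1-D robot driving toward a wall (hypothetical model, not a real API)."""
    position: float = 0.0     # metres travelled along a corridor
    speed: float = 0.5        # metres moved per step while the motors run
    obstacle_at: float = 3.0  # position of the wall, in metres

    def sense(self) -> float:
        """Sensing: a range sensor reports the distance to the obstacle."""
        return self.obstacle_at - self.position

    def act(self, drive: bool) -> None:
        """Acting: the motors either move the robot forward or hold it still."""
        if drive:
            self.position += self.speed


def decide(distance: float, stop_distance: float = 1.0) -> bool:
    """Processing: keep driving only while the obstacle is farther than stop_distance."""
    return distance > stop_distance


def run_loop(robot: SimulatedRobot, max_steps: int = 20) -> list[float]:
    """Repeat sense -> decide -> act until the robot decides to stop."""
    readings = []
    for _ in range(max_steps):
        distance = robot.sense()   # sense
        readings.append(distance)
        drive = decide(distance)   # think
        robot.act(drive)           # act
        if not drive:
            # Feedback: sense again to confirm the action had the intended effect.
            readings.append(robot.sense())
            break
    return readings


robot = SimulatedRobot()
print(run_loop(robot))  # [3.0, 2.5, 2.0, 1.5, 1.0, 1.0]
```

Each reading is the result of the previous action, which is what closes the loop: the robot never needs to know in advance how many steps it will take.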

Behaving in the World vs. Knowing

This brings up an interesting point: is a robot truly ‘intelligent’ if it can only perform a specific task in a controlled environment, or does real intelligence come from its ability to adapt and behave effectively in the messy, unpredictable real world? A robot that can navigate a cluttered room, pick up an object it’s never seen before, and react to sudden changes is demonstrating a different kind of intelligence than one that just follows a pre-programmed path on a factory floor. Embodiment forces robots to develop a practical, situated kind of intelligence, one that’s deeply tied to their physical form and their interactions with their surroundings. It’s less about abstract knowledge and more about knowing how to do things in the physical world.

Beyond Appliances: Defining a Robot

It’s easy to confuse robots with everyday gadgets, isn’t it? You see something that moves or has a sensor, and suddenly it’s a robot in some people’s eyes. But that’s not quite right. A thermostat has a sensor and an actuator, sure, but nobody calls their thermostat a robot, any more than they would the light in their fridge. Stretching the word that far doesn’t help anyone understand what a robot really is.

Distinguishing Robots from Simple Machines

Think about it this way: a toaster pops up bread. It does one thing, and it does it the same way every time. A robot, on the other hand, is built for more. It’s not just about doing a single task. It’s about having the ability to handle different situations, to figure things out a bit on its own. The real difference lies in a robot’s capacity for adaptation and interaction with its surroundings. It’s the difference between a tool that performs a fixed job and a system that can adjust its actions based on what’s happening around it.
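One way to see the toaster-versus-robot distinction is the difference between open-loop and closed-loop control. The sketch below is a hypothetical model, not a description of how any real toaster works. Slices brown at different made-up rates. One function heats for a fixed time, like a toaster. The other checks an assumed browning sensor every second and stops when a target is reached.

```python
def toast_fixed_timer(browning_rate: float, seconds: int = 120) -> float:
    """Open loop: heat for a fixed time and never look at the result."""
    browning = 0.0
    for _ in range(seconds):
        browning += browning_rate
    return browning


def toast_with_feedback(browning_rate: float, target: float = 1.0,
                        max_seconds: int = 600) -> float:
    """Closed loop: read a (hypothetical) browning sensor every second, stop at the target."""
    browning = 0.0
    for _ in range(max_seconds):
        if browning >= target:  # the sensor reading decides whether to keep going
            break
        browning += browning_rate
    return browning


# Made-up browning rates per second: a thin slice browns fast, a thick one slowly.
for name, rate in [("thin", 0.02), ("thick", 0.005)]:
    print(name, round(toast_fixed_timer(rate), 2), round(toast_with_feedback(rate), 2))
```

Under the fixed timer the thin slice ends up at about 2.4 (overdone) and the thick one at about 0.6 (underdone), while the feedback version stops within one step of the 1.0 target for both. The only difference is that the second function lets what it senses change what it does.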

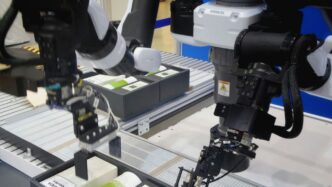

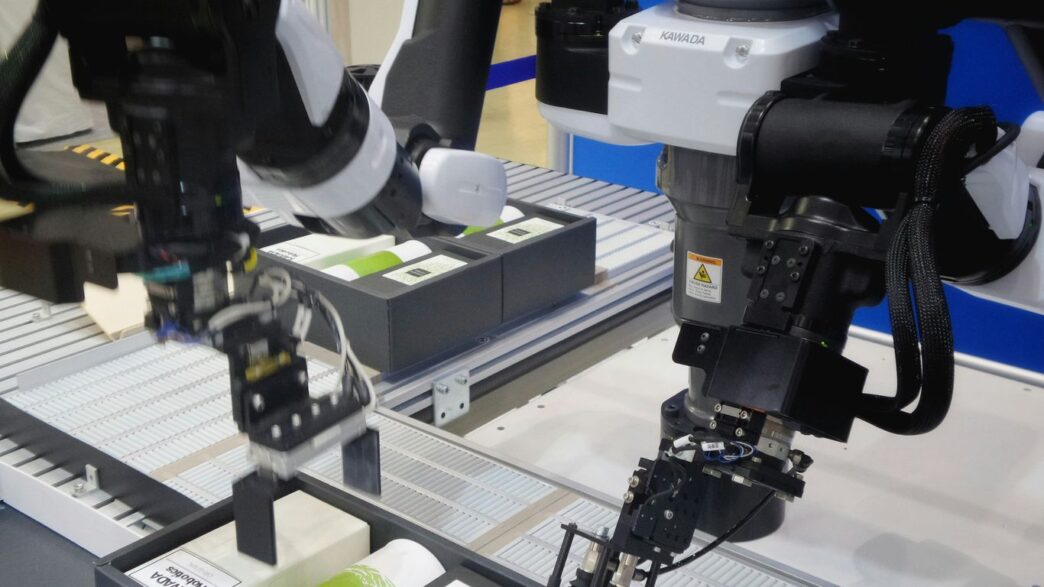

Robots as More Than Industrial Automation

Another common mix-up is thinking robots are only for factory floors. Yes, robots are fantastic at automating tasks in factories, often doing it with more flexibility than older machines. But that’s just one piece of the puzzle. Robotics is a much bigger field. We’ve got robots exploring Mars, far from any assembly line, doing jobs that look nothing like manufacturing. Limiting robots to just industrial automation is like saying humans are only factory workers because some humans work in factories. It misses the vast potential and the wider applications.

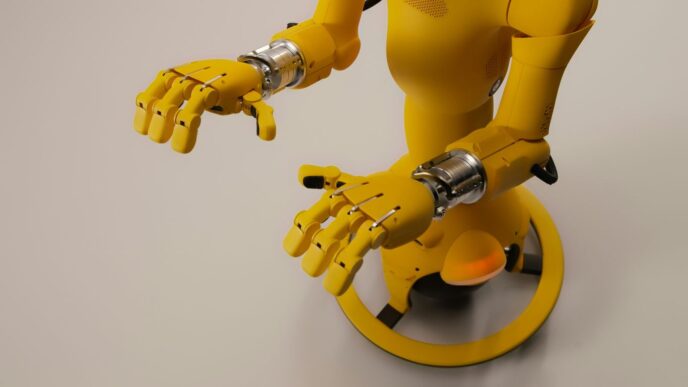

The Concept of Biomorphic Design

Sometimes, the most interesting robot designs take cues from nature. This doesn’t mean building a robot that looks exactly like a human or an animal, though that happens too. It’s more about borrowing strategies that living things use to get around and interact with the world. Think about how a hand can grip all sorts of shapes, or how an animal navigates uneven ground. These biological approaches often lead to robots that can handle unpredictable environments better than machines designed for very specific, controlled settings. It’s about making machines that are more general-purpose and can operate more independently in the real world, not just in a perfectly set-up lab or factory.

The Role of Form Factor in Robot Design

So, we’ve talked about what makes a robot a robot, and how they’re different from just fancy appliances. Now, let’s get into something really interesting: the robot’s body, or its ‘form factor’. It’s not just about looks, though that can be part of it. The shape and structure of a robot have a huge impact on what it can actually do and where it can do it.

Humanoid Robots and Environmental Adaptation

Think about humanoid robots. They look like us, right? This isn’t just for show. Having a body plan similar to ours helps them interact with a world built for humans. They can use tools designed for hands, walk through doorways, and generally fit into our spaces without us having to change everything around them. It’s about making the robot adaptable to our environment, rather than making the environment adapt to the robot. This is a big deal when you consider robots operating outside of controlled factory floors.

Body Morphology and Environmental Comprehension

Beyond just looking human, the actual physical makeup of a robot, its morphology, is key. How it moves, how it balances, how its limbs are shaped: all of this affects how it understands and interacts with its surroundings. A robot with wheels might be great on flat ground, but it’s going to struggle in rough terrain. A robot with legs, or even a more complex system like a rocker-bogie chassis (which is pretty neat, by the way: it lets the wheels move up and down independently, a bit like a hip joint), can handle bumps and uneven surfaces much better. The physical form sets the limits on how the robot can interact with, and ‘comprehend’, the physical world it’s in. It’s like trying to pick up a delicate object with a giant industrial claw versus a pair of tweezers; the tool’s form determines what it can do.

Learning from Human and Animal Movement Data

Where do designers get ideas for these forms? A lot of it comes from looking at nature. Biology has had millions of years to figure out how to move and survive in all sorts of environments. Studying how humans walk, run, or grasp things, and how animals like insects or dogs navigate complex terrain, provides a wealth of information. This data can inform the design of robot joints, suspension systems, and even how robots balance. It’s not about turning robots into animals or humans, but about borrowing successful strategies that allow for robust movement and interaction in unpredictable places. For instance, the way a cat lands on its feet or how a spider moves across a web are complex feats that engineers try to replicate in robotic systems.
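As a rough illustration of how recorded movement might feed into joint design, the sketch below takes a series of captured joint angles and derives two requirements: the range of motion the joint needs, widened by a safety margin, and the peak angular speed its motor must reach. The angle values, the 10 Hz sample rate, and the 10° margin are all invented for the example; they are not real biomechanics data.

```python
def joint_requirements(angles_deg: list[float], sample_rate_hz: float,
                       margin_deg: float = 10.0) -> dict:
    """Turn captured joint angles into rough design targets for a robot joint."""
    if len(angles_deg) < 2:
        raise ValueError("need at least two samples to estimate speed")
    # Range of motion actually used, widened so the joint never runs into its hard stops.
    lower = min(angles_deg) - margin_deg
    upper = max(angles_deg) + margin_deg
    # Peak angular speed from finite differences between consecutive samples.
    biggest_step = max(abs(b - a) for a, b in zip(angles_deg, angles_deg[1:]))
    return {"lower_deg": lower, "upper_deg": upper,
            "peak_speed_deg_s": biggest_step * sample_rate_hz}


# Hypothetical hip-pitch angles (degrees) over one stride, sampled at 10 Hz.
hip_samples = [-10.0, -5.0, 0.0, 10.0, 20.0, 28.0, 25.0, 15.0, 5.0, -8.0]
print(joint_requirements(hip_samples, sample_rate_hz=10.0))
# {'lower_deg': -20.0, 'upper_deg': 38.0, 'peak_speed_deg_s': 130.0}
```

A real design process would use many recordings and filter out sensor noise before differentiating, but the idea is the same: the movement data turns into numbers an engineer can size a motor and a joint against.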

Key Components for Robot Autonomy

So, what makes a robot a robot, and not just some fancy appliance or a factory arm? It really boils down to how it handles the unexpected. You can’t exactly control every single thing a robot might bump into or have to deal with in the real world. That’s why robots need to be way more adaptable and independent than, say, your washing machine. This means they need general abilities to handle situations that aren’t planned out ahead of time.

General Capabilities in Unscripted Environments

Think about it: if you can’t pre-program every single possible scenario, the robot has to figure things out on its own. This is where general capabilities come in. Instead of being built for just one specific job in a controlled setting, a robot needs to be able to perform a range of tasks in environments that change. It’s like giving a tool a Swiss Army knife’s versatility, but for physical tasks. This adaptability is what separates a true robot from a single-purpose machine.

The Necessity of High Autonomy

Because the real world is messy and unpredictable, robots need a high degree of autonomy. This means they can make decisions and act without constant human input. Imagine a Mars rover. Even if we wanted to, we couldn’t control it second by second: radio signals take roughly 3 to 22 minutes to travel one way between Earth and Mars, depending on where the two planets are in their orbits. The rover has to be able to make its own choices about where to go and what to do to keep the mission moving. This need for self-direction is a core characteristic of advanced robotics. The farther away a robot is, or the more complex its task, the more autonomous it has to be.
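The arithmetic behind the Mars example is simple: a signal’s one-way delay is distance divided by the speed of light. The sketch below uses approximate distances (about 384,400 km to the Moon on average, and about 54.6 million km to 401 million km to Mars) and a hypothetical rover speed of 5 cm/s, which is an assumption for illustration rather than the figure for any particular rover.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # exact, by the SI definition of the metre


def one_way_delay_s(distance_km: float) -> float:
    """Seconds for a radio signal to cover the distance once."""
    return distance_km / SPEED_OF_LIGHT_KM_S


def blind_travel_m(distance_km: float, rover_speed_m_s: float) -> float:
    """Metres a rover covers while its camera image goes out and an operator's reply comes back."""
    round_trip_s = 2 * one_way_delay_s(distance_km)
    return rover_speed_m_s * round_trip_s


ROVER_SPEED_M_S = 0.05  # hypothetical rover speed (5 cm/s)

# Approximate distances in km; the Earth-Mars distance changes as both planets orbit the Sun.
for name, km in [("Moon (average)", 384_400), ("Mars (closest)", 54.6e6),
                 ("Mars (farthest)", 401e6)]:
    print(f"{name}: one-way delay {one_way_delay_s(km):.1f} s, "
          f"blind travel {blind_travel_m(km, ROVER_SPEED_M_S):.1f} m")
```

With these inputs the one-way delay is about 1.3 s for the Moon but roughly 182 s to 1,338 s (3 to 22 minutes) for Mars, and the rover would cover somewhere around 18 m to 134 m before any human reaction to what it saw could reach it. That gap is why distance pushes so directly toward onboard decision-making.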

Designing for Unpredictability and Ambiguity

When we design robots, we have to assume things won’t go according to plan. This means building systems that can deal with fuzzy information and situations where the ‘right’ answer isn’t obvious. It’s not just about avoiding obstacles; it’s about understanding context and making reasonable judgments. This is why looking at how biological systems, like animals, handle their environments is so helpful. They’ve had millions of years to figure out how to survive and operate in all sorts of unpredictable conditions. We can learn a lot from that when building robots that need to function in the real, unscripted world.
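Handling fuzzy information often comes down to two habits: combining several uncertain measurements, and making decisions that stay safe given whatever uncertainty remains. The sketch below shows one standard way to do both, swapped in here for illustration rather than taken from any specific robot: inverse-variance weighting to fuse two independent distance estimates, and a check that only proceeds if the path is clear even two standard deviations below the estimate. The readings, variances, and thresholds are invented.

```python
import math


def fuse(est_a: float, var_a: float, est_b: float, var_b: float) -> tuple[float, float]:
    """Inverse-variance weighted average of two independent estimates of the same quantity."""
    w_a, w_b = 1.0 / var_a, 1.0 / var_b
    fused = (w_a * est_a + w_b * est_b) / (w_a + w_b)
    return fused, 1.0 / (w_a + w_b)  # the fused variance is smaller than either input's


def safe_to_proceed(distance: float, variance: float,
                    clearance: float = 0.7, k: float = 2.0) -> bool:
    """Proceed only if the obstacle is beyond `clearance` even k standard deviations closer."""
    return distance - k * math.sqrt(variance) > clearance


# Hypothetical readings in metres: a noisy camera estimate and a more precise range sensor.
camera, camera_var = 1.2, 0.09  # standard deviation 0.3 m
ranger, ranger_var = 1.0, 0.01  # standard deviation 0.1 m

print(safe_to_proceed(camera, camera_var))  # False: the camera alone is too uncertain
fused, fused_var = fuse(camera, camera_var, ranger, ranger_var)
print(round(fused, 3), safe_to_proceed(fused, fused_var))  # 1.02 True
```

The camera alone suggests the obstacle is farther away, yet the robot refuses to move on it; once the more precise sensor narrows the uncertainty, the same cautious rule allows it to proceed. The ‘right’ answer depends on how much the robot actually knows, not just on its best guess.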

Inspiration from Biological Systems

When we look at robots, it’s easy to get stuck thinking about them as just fancy appliances or industrial tools. But there’s a whole other way to think about them, and it comes from looking at nature. Biology has had billions of years to figure out how to make things work in the real world, and there’s a lot we can learn from that.

Leveraging Biology for Robust Design

Nature is full of incredibly resilient designs. Think about how a bird’s wing is shaped for flight, or how a plant’s roots anchor it against strong winds. These aren’t just random occurrences; they’re the result of evolution favoring designs that can handle unpredictable conditions. Robots designed with biological principles in mind can often be more adaptable and less prone to failure when faced with unexpected situations. Instead of trying to control every single variable in the environment, like a factory machine does, a biologically inspired robot is built to cope with the messiness of the real world. This means focusing on general capabilities rather than highly specialized functions.

Rocker-Bogie Chassis: A Hybrid Approach

Sometimes, the best ideas come from mixing and matching. Take the rocker-bogie suspension system used on Mars rovers. It’s not exactly like an animal’s leg, but it’s also not like a car’s suspension. On each side of the rover, a large pivoting arm (the rocker) carries a wheel at one end and a smaller pivoting arm (the bogie) with two more wheels at the other. Because these arms are free to rotate, the wheels stay in contact with uneven ground much better than they would with a conventional setup, and without any springs. This kind of hybrid design, borrowing elements from biological movement while still using mechanical components like wheels, shows how we can create robust mobility solutions. It’s a great example of how we don’t have to copy biology exactly, but can take inspiration from its successes.
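The geometry behind ‘staying in contact’ can be sketched in a few lines. This is a deliberately simplified side view, and every assumption is labeled: one rigid arm (the bogie) spans two wheels, a second rigid arm (the rocker) spans from a third wheel to the bogie’s pivot, the bogie pivot sits midway between its two wheels, all wheels are the same size, and the mechanism that links the left and right sides on real rovers is ignored. Given the ground height under each wheel, the function computes how far each arm must tilt so every wheel rests on its own patch of ground. The link lengths and terrain heights are hypothetical.

```python
import math


def rocker_bogie_tilts(h_rocker_wheel: float, h_bogie_a: float, h_bogie_b: float,
                       bogie_len: float = 0.6, rocker_len: float = 1.0) -> tuple[float, float]:
    """Return (bogie_tilt_deg, rocker_tilt_deg) for a simplified planar rocker-bogie.

    Heights are ground heights under each wheel in metres; lengths are hypothetical.
    """
    # The bogie is a bar spanning its two wheels, so it tilts to match their height difference.
    dh_bogie = h_bogie_a - h_bogie_b
    if abs(dh_bogie) > bogie_len:
        raise ValueError("step too tall for the bogie to span")
    bogie_tilt = math.asin(dh_bogie / bogie_len)
    # Assumed: the bogie pivot sits midway between its wheels.
    pivot_height = (h_bogie_a + h_bogie_b) / 2
    # The rocker spans from its own wheel to the bogie pivot.
    dh_rocker = h_rocker_wheel - pivot_height
    if abs(dh_rocker) > rocker_len:
        raise ValueError("step too tall for the rocker to span")
    rocker_tilt = math.asin(dh_rocker / rocker_len)
    return math.degrees(bogie_tilt), math.degrees(rocker_tilt)


print(rocker_bogie_tilts(0.0, 0.0, 0.0))  # flat ground: (0.0, 0.0)
print(rocker_bogie_tilts(0.2, 0.0, 0.0))  # rocker's wheel on a 20 cm rock
print(rocker_bogie_tilts(0.0, 0.2, 0.0))  # one bogie wheel on the rock
```

The two free pivots simply rotate until each wheel sits on the ground beneath it, which is the point of the design: a single rigid frame on the same terrain would have to lift at least one wheel off the ground.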

Universal Constructors and Self-Replication

One of the most fascinating aspects of biology is its ability to self-replicate and build complex structures from simple instructions. While we’re a long way from robots that can build copies of themselves from raw materials the way living organisms do, the concept of a ‘universal constructor’, introduced by the mathematician John von Neumann, is a powerful idea. It describes a machine capable of building any other machine, given the right blueprints and resources. This concept pushes us to think about robots not just as tools, but as potential agents of creation and adaptation. It’s a more advanced idea, but it highlights the potential for robots to become much more than we currently imagine, drawing parallels to the fundamental building blocks of life itself.

The Complexity of Robot Software

Hardware vs. Software Challenges

People often think the tough part of building a robot is the physical stuff – the gears, the wires, the motors. And sure, there are definitely challenges there, like making sure everything moves smoothly without getting stuck or breaking down. But honestly, that’s often the less complicated bit. The real head-scratcher, the thing that takes way more time and brainpower, is the software. It’s not just about writing code; it’s about writing code that has to make sense of the real, messy world.

The Demands of Embodied Intelligence

See, a robot isn’t just a computer sitting in a box. It’s got a body. This means it’s constantly interacting with its surroundings through sensors and actuators. It’s not just processing information; it’s acting in the world and dealing with the consequences. This "embodiment" is what makes robot software so tricky. It has to handle all the unpredictable stuff that happens when you’re physically present in an environment, not just floating around in the cloud. This constant feedback loop between sensing, thinking, and acting is the core challenge.

Generalizable Software Frameworks

Because robots have to deal with so many different situations, the software needs to be pretty adaptable. We’re not talking about simple, pre-programmed tasks anymore. We need systems that can figure things out on the fly, even when things don’t go according to plan. This means developing software that can be used across different robots and different tasks, rather than being built for just one specific job. It’s a big ask, and while there are some frameworks out there trying to help, they’re still pretty limited when you consider the sheer variety of what robots might need to do.
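One common pattern behind reusable robot software is to write behaviors against a small interface instead of a specific machine. The sketch below is hypothetical throughout: the `MobileBase` interface, the two robot classes, and the speed and distance thresholds are invented, and none of it is the API of an existing framework. A single `cautious_approach` behavior runs unchanged on a wheeled rover and a legged walker, because each body translates ‘forward speed’ into its own actuator commands.

```python
from typing import Protocol


class MobileBase(Protocol):
    """Hypothetical minimal interface a behavior can rely on, whatever the robot's body."""
    def range_to_obstacle(self) -> float: ...
    def set_forward_speed(self, speed_m_s: float) -> None: ...


class WheeledRover:
    def __init__(self, range_m: float) -> None:
        self.range_m = range_m
        self.wheel_speed = 0.0

    def range_to_obstacle(self) -> float:
        return self.range_m

    def set_forward_speed(self, speed_m_s: float) -> None:
        self.wheel_speed = speed_m_s  # a real rover would convert this to wheel motor commands


class LeggedWalker:
    def __init__(self, range_m: float) -> None:
        self.range_m = range_m
        self.gait_speed = 0.0

    def range_to_obstacle(self) -> float:
        return self.range_m

    def set_forward_speed(self, speed_m_s: float) -> None:
        self.gait_speed = speed_m_s  # a real walker would feed this to a gait generator


def cautious_approach(base: MobileBase, max_speed: float = 0.5,
                      stop_at: float = 1.0, slow_zone: float = 3.0) -> float:
    """Written once against the interface: slow down near obstacles, stop at stop_at."""
    distance = base.range_to_obstacle()
    if distance <= stop_at:
        speed = 0.0
    elif distance >= slow_zone:
        speed = max_speed
    else:
        # Scale speed linearly between the stop distance and the edge of the slow zone.
        speed = max_speed * (distance - stop_at) / (slow_zone - stop_at)
    base.set_forward_speed(speed)
    return speed


rover, walker = WheeledRover(range_m=5.0), LeggedWalker(range_m=2.0)
print(cautious_approach(rover), cautious_approach(walker))  # 0.5 0.25
```

The behavior never mentions wheels or legs, so supporting a new body means implementing two methods rather than rewriting the behavior. Real robots need far richer interfaces than this, which is part of why general frameworks remain hard to build.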

Wrapping It Up

So, we’ve taken a look at what makes a robot tick, from its brain to its body parts. It’s more than just a fancy appliance or a sci-fi character. Understanding these basic components helps us see robots for what they really are – complex machines designed to interact with the world. Whether it’s a simple task or something more complicated, these building blocks are key. It’s pretty cool to think about how all these pieces come together to make something move, sense, and act. Keep an eye out, because robots are definitely going to be a bigger part of our lives.