Understanding Artificial Intelligence Fundamentals

So, what exactly is this "artificial intelligence" everyone’s talking about? Think of it as a big umbrella covering a bunch of different ways we try to make machines do things that usually require human smarts. Some of these ideas are pretty old, going back to the 1950s, when folks first imagined machines that could think and act like us. It’s a broad concept, really.

What Constitutes Artificial Intelligence?

At its core, AI is about creating systems that can perform tasks typically needing human intelligence. This can range from simple things like recognizing a face in a photo to complex actions like driving a car or playing chess. It’s not just one thing; it’s a whole field of study.

Machine Learning Versus Deep Learning

Now, within that big AI umbrella, you’ve got machine learning (ML). ML is a specific type of AI that uses algorithms to find patterns in data. Instead of being explicitly programmed for every single scenario, ML systems learn from the information they’re given. It’s like teaching a kid by showing them lots of examples rather than writing down every single rule.
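To make the contrast between rules and examples concrete, here is a minimal sketch of one of the simplest learning methods, a nearest-neighbor classifier, in Python. Nobody writes down a rule for what makes something an apple. The program labels a new item by finding the most similar labeled example it has already seen. The fruit measurements and labels below are invented purely for illustration.

```python
import math

# Hypothetical labeled examples: (weight in grams, diameter in cm) -> label.
# These numbers are made up for illustration, not taken from any dataset.
EXAMPLES = [
    ((150.0, 7.0), "apple"),
    ((170.0, 7.5), "apple"),
    ((120.0, 6.5), "apple"),
    ((10.0, 2.0), "grape"),
    ((8.0, 1.8), "grape"),
    ((12.0, 2.2), "grape"),
]

def classify(features, examples=EXAMPLES):
    """Return the label of the closest labeled example (1-nearest neighbor)."""
    # No hand-written rules: all the "knowledge" lives in the examples.
    _, label = min(examples, key=lambda ex: math.dist(features, ex[0]))
    return label

print(classify((140.0, 6.8)))  # apple
print(classify((9.0, 2.1)))    # grape
```

Adding more examples changes the behavior without touching the code. One caveat: weight dominates the distance here because it is measured on a much larger scale than diameter, which is why real systems usually normalize features first.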

Deep learning (DL) is a subset of machine learning. What sets it apart is depth: it uses networks of artificial neurons, loosely inspired by the human brain, stacked in many layers. This layering lets the system learn more complex patterns and representations from data. Think of it as a more sophisticated way for the machine to learn, processing information through multiple stages.

The Role of Neurons in Deep Learning

These "neurons" in deep learning aren’t biological, of course. Each one is a simple mathematical function: it multiplies its inputs by adjustable weights, adds them up (plus an offset called a bias), and passes the result through a nonlinear "activation" function before handing it on. In a deep learning model, these neurons are arranged in layers. The first layer receives raw data, like the pixels of an image, and the layers after it might pick out edges, then shapes. This continues through multiple layers, with each layer building on the previous one to recognize increasingly complex features. This layered approach is what allows deep learning models to tackle very intricate tasks.
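The description above can be sketched in a few lines of Python. This is a minimal illustration, not how production frameworks are written: each neuron computes a weighted sum plus a bias and applies a sigmoid activation (modern networks more often use other activations such as ReLU; sigmoid is used here for simplicity), and a "layer" is just several neurons reading the same inputs. The weights are hypothetical values written by hand; in a real model they would be learned.

```python
import math

def neuron(inputs, weights, bias):
    """One artificial neuron: weighted sum of inputs plus bias, then a sigmoid."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1.0 / (1.0 + math.exp(-z))

def layer(inputs, weight_rows, biases):
    """A layer is many neurons reading the same inputs."""
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]

def forward(inputs, network):
    """Pass data through each layer in turn; each layer's outputs feed the next."""
    activations = inputs
    for weight_rows, biases in network:
        activations = layer(activations, weight_rows, biases)
    return activations

# Hypothetical weights for a tiny 3-input -> 2-neuron -> 1-neuron network.
NETWORK = [
    ([[0.5, -0.2, 0.1], [-0.3, 0.8, 0.4]], [0.0, 0.1]),  # hidden layer
    ([[1.2, -0.7]], [0.05]),                              # output layer
]

output = forward([0.9, 0.1, 0.4], NETWORK)
print(output)  # a single value between 0 and 1
```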

Here’s a quick breakdown:

- Artificial Intelligence (AI): The broad concept of machines performing tasks that normally require human intelligence.

- Machine Learning (ML): A type of AI where systems learn patterns from data instead of following explicitly programmed rules.

- Deep Learning (DL): A type of ML that uses layered artificial neural networks, inspired by the brain, to learn complex patterns.

Exploring the Mechanics of AI Training

So, how do these AI systems actually learn? It’s not like they go to school or read textbooks. Instead, they’re trained using massive amounts of data. Think of it like this: you show a kid thousands of pictures of cats, and eventually, they learn to recognize a cat. AI does something similar, but on a much, much larger scale.

Automated Training Processes

Forget about humans manually tweaking every little setting. The training process for modern AI is almost entirely automated. Developers set up the basic structure, kind of like designing a blueprint for a building. They decide on the architecture: how many "neurons" (computational units) the system will have and how they’ll be connected. But once the data starts flowing, the training loop takes over. The system makes predictions, compares them to the actual outcomes, and measures its errors. A procedure called backpropagation then works out how much each internal setting (weight) contributed to those errors, and an optimization step such as gradient descent adjusts the weights to reduce them, all without human intervention. This automation is absolutely necessary because the sheer volume of data and adjustments needed is beyond human capacity.
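Here is a minimal sketch of that predict, compare, adjust loop for the smallest possible case: a single sigmoid neuron learning the logical OR function. With only one neuron, backpropagation collapses to a single application of the chain rule; in a deep network the same error signal would be passed backward through every layer. The learning rate and number of epochs are arbitrary choices for this toy example.

```python
import math

# Training data for logical OR: inputs -> target output.
DATA = [((0.0, 0.0), 0.0), ((0.0, 1.0), 1.0), ((1.0, 0.0), 1.0), ((1.0, 1.0), 1.0)]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(weights, bias, x):
    return sigmoid(weights[0] * x[0] + weights[1] * x[1] + bias)

def train(data, learning_rate=0.5, epochs=2000):
    weights, bias = [0.0, 0.0], 0.0  # the developer sets up the starting point...
    for _ in range(epochs):          # ...and the loop runs unattended
        grad_w, grad_b = [0.0, 0.0], 0.0
        for x, target in data:
            y = predict(weights, bias, x)
            # For a sigmoid output with cross-entropy loss, the chain rule
            # simplifies d(loss)/d(pre-activation) to (prediction - target).
            error = y - target
            grad_w[0] += error * x[0]
            grad_w[1] += error * x[1]
            grad_b += error
        # Gradient descent: nudge every parameter against its average gradient.
        n = len(data)
        weights = [w - learning_rate * g / n for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b / n
    return weights, bias

weights, bias = train(DATA)
for x, target in DATA:
    print(x, target, round(predict(weights, bias, x), 3))
```

After training, outputs for (0, 0) end up below 0.5 and the other three above it, even though no one ever wrote a rule for OR.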

The Significance of Data in AI Learning

Data is the fuel for AI. The more data an AI system is trained on, the better it generally becomes at its task. For simple tasks, a few hundred or thousand data points might suffice. But for complex models, like those that can write text or generate images, we’re talking about scraping huge portions of the internet. This massive scale of data allows the AI to build incredibly rich probability models. It’s not just about having lots of data, though; the quality and relevance of that data are also super important. If you train an AI on biased or incorrect information, it’s going to learn those biases and errors.

Challenges in Training Large-Scale Models

Training these giant AI models isn’t a walk in the park. It takes a serious amount of computing power, often requiring specialized hardware like GPUs, and consumes a lot of energy. Because of this, the cost to train just one of these large models can run into millions of dollars. On top of that, big tech companies train many models, including numerous smaller experimental runs, which gives you an idea of the staggering expense involved. Even for well-funded research institutions, building a model that’s even a fraction of the size of the leading ones can be a significant challenge. It’s a resource-intensive process that highlights the current dominance of companies with deep pockets in AI development.
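To see where those costs come from, a widely used back-of-the-envelope rule for dense transformer language models puts training compute at roughly 6 floating-point operations per parameter per training token. The sketch below turns that into GPU-hours and dollars. Every input here (model size, token count, per-GPU throughput, utilization, hourly price) is a hypothetical placeholder, not a figure for any real model or cloud provider, and the estimate covers only one final training run, not experiments, failed runs, data, or staff.

```python
def training_flops(num_params, num_tokens):
    """Rough training compute for a dense transformer: ~6 FLOPs per parameter per token."""
    return 6 * num_params * num_tokens

def training_cost(num_params, num_tokens, gpu_peak_flops, utilization, price_per_gpu_hour):
    """Convert a compute estimate into GPU-hours and dollars.

    utilization: assumed fraction of peak throughput actually achieved (well below 1 in practice).
    """
    effective_flops_per_second = gpu_peak_flops * utilization
    gpu_seconds = training_flops(num_params, num_tokens) / effective_flops_per_second
    gpu_hours = gpu_seconds / 3600
    return gpu_hours, gpu_hours * price_per_gpu_hour

# Hypothetical inputs, chosen only to show the arithmetic.
hours, dollars = training_cost(
    num_params=70e9,         # 70 billion parameters
    num_tokens=1.4e12,       # 1.4 trillion training tokens
    gpu_peak_flops=1e15,     # assumed 1 PFLOP/s peak per GPU
    utilization=0.4,         # assumed 40% of peak achieved
    price_per_gpu_hour=2.0,  # assumed $2 per GPU-hour
)
print(f"{hours:,.0f} GPU-hours, about ${dollars:,.0f}")
```

Because cost scales with parameters times tokens, doubling both roughly quadruples the bill, which is one reason the largest models are concentrated among a few well-funded organizations.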

Navigating AI’s Impact and Applications

Artificial intelligence isn’t just a futuristic concept anymore; it’s woven into the fabric of our daily lives, often in ways we don’t even notice. Think about checking the weather on your phone or getting personalized recommendations online – that’s AI at work. It’s become so common that we barely register it, which is kind of the point, I guess. But beyond these everyday conveniences, AI is starting to make some serious waves in fields like science and healthcare.

Everyday AI Integration

AI is quietly powering a lot of the tools we use daily. It’s in the spam filters that keep our inboxes clean, the navigation apps that guide us through traffic, and even the way streaming services suggest what to watch next. These systems learn from vast amounts of data to predict what we might want or need, making our interactions with technology smoother. It’s like having a helpful assistant that’s always learning.
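Spam filtering is a classic example of learning from data. Below is a minimal sketch of a naive Bayes text classifier, one long-standing technique for the job (not necessarily what any particular email provider uses today). It counts how often each word appears in spam and non-spam ("ham") messages, then scores a new message by which label makes its words most probable. The four training messages are invented for illustration.

```python
import math
from collections import Counter

def train_naive_bayes(messages):
    """Count words per label from (text, label) pairs."""
    word_counts = {}          # label -> Counter of word frequencies
    label_counts = Counter()  # label -> number of training messages
    for text, label in messages:
        label_counts[label] += 1
        word_counts.setdefault(label, Counter()).update(text.lower().split())
    vocabulary = set().union(*word_counts.values())
    return word_counts, label_counts, vocabulary

def classify_message(text, model):
    """Return the label with the highest log-probability for this text."""
    word_counts, label_counts, vocabulary = model
    total_messages = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label, n_messages in label_counts.items():
        score = math.log(n_messages / total_messages)  # prior: how common the label is
        total_words = sum(word_counts[label].values())
        for word in text.lower().split():
            # Add-one (Laplace) smoothing so an unseen word doesn't zero out the score.
            count = word_counts[label][word]
            score += math.log((count + 1) / (total_words + len(vocabulary)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label

TRAINING = [
    ("win a free prize now", "spam"),
    ("claim your free money", "spam"),
    ("meeting moved to monday", "ham"),
    ("lunch with the team on friday", "ham"),
]
model = train_naive_bayes(TRAINING)
print(classify_message("free prize money", model))        # spam
print(classify_message("team meeting on monday", model))  # ham
```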

Transformative Potential in Science and Health

This is where things get really interesting. In science, AI is helping researchers sift through massive datasets to find new patterns, potentially speeding up discoveries. In healthcare, it’s showing promise in areas like:

- Drug Discovery: AI can analyze complex biological data to identify promising drug candidates, potentially much faster than traditional methods.

- Diagnostics: AI tools are being developed to help doctors spot diseases, like certain cancers, from medical images with greater accuracy.

- Personalized Medicine: By looking at an individual’s genetic makeup and health history, AI could help tailor treatments for better outcomes.

The potential for AI to improve human health and scientific understanding is enormous.

Ethical Considerations and Societal Concerns

Of course, with all this power comes responsibility, and a whole host of questions. As AI becomes more capable, we have to think about the implications. Concerns about privacy are a big one – how is our data being used to train these systems? Then there’s the issue of bias. If the data used to train an AI reflects existing societal biases, the AI can end up perpetuating or even amplifying those unfairnesses. We also need to consider job displacement and the potential for misuse of AI technologies. It’s a balancing act between harnessing the benefits and mitigating the risks.

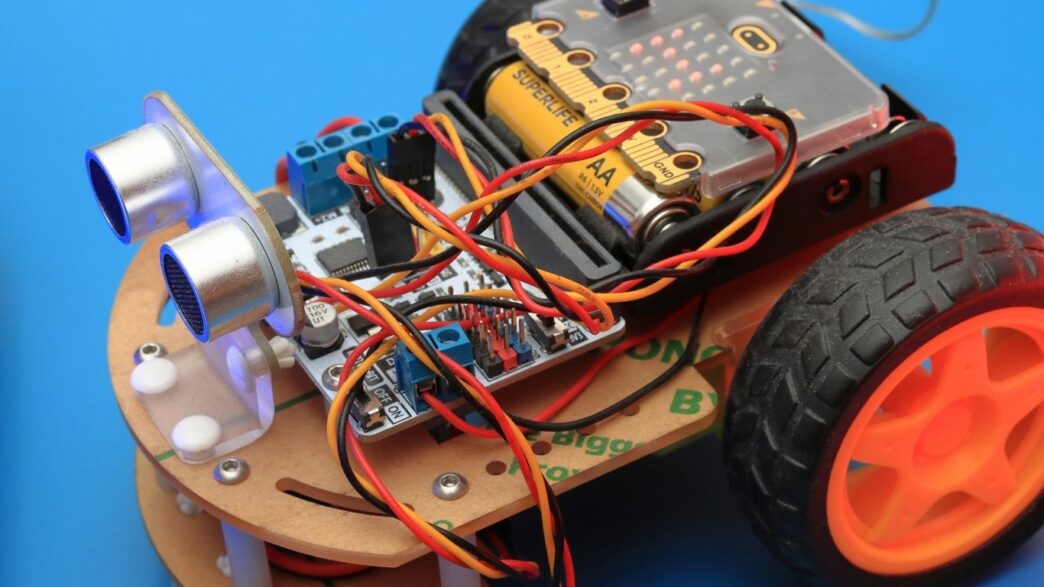

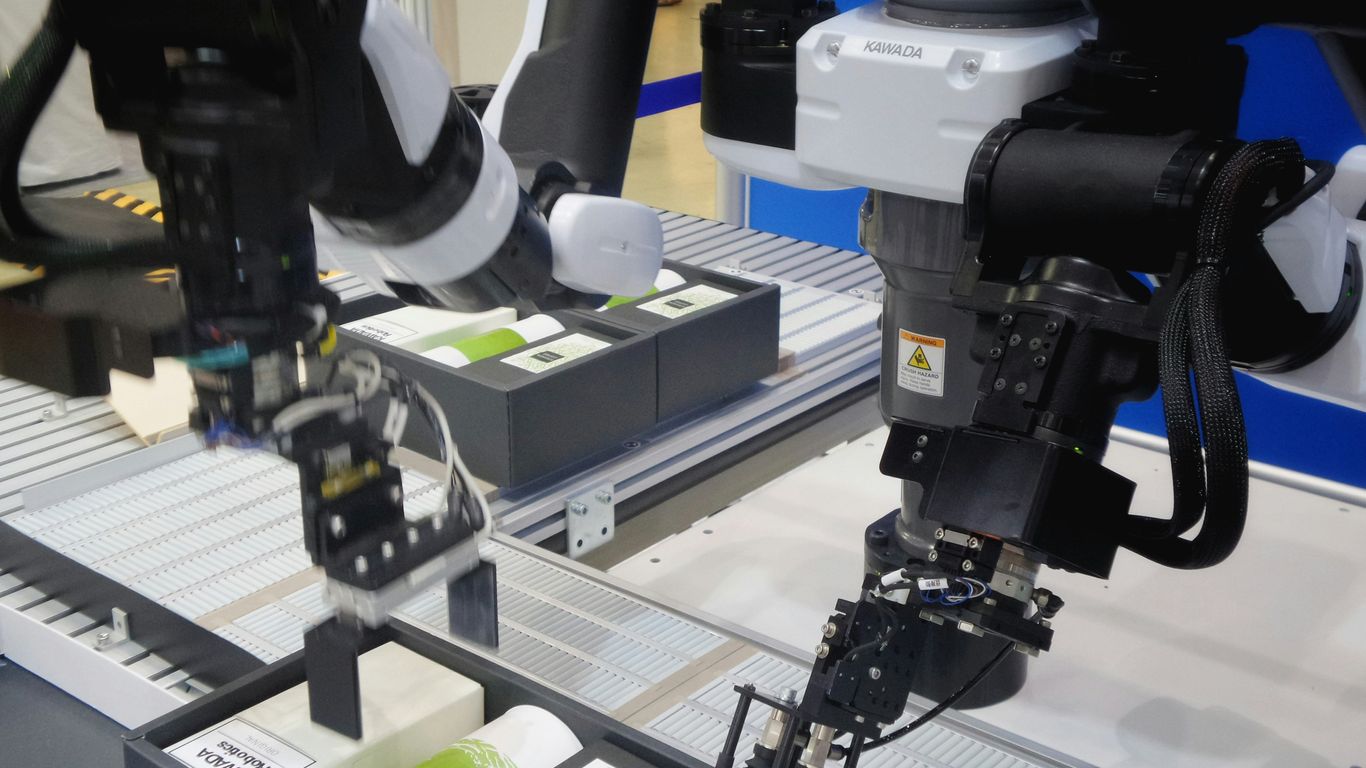

Addressing Common Robotics Questions

When we talk about robots, especially those powered by AI, a few questions pop up pretty regularly. People want to know how these machines make decisions so fast, if we can even tell what they’re doing, and who’s really in charge.

AI for Rapid Decision-Making

One of the big draws of AI in robotics is its speed. Think about situations where a split-second choice can make all the difference, like in defense or emergency response. AI systems can process a huge amount of information and react much faster than a human ever could. This isn’t about simple if-then rules anymore. Instead, these AI models learn from vast amounts of data. Developers set up the basic structure, such as how many processing units, or ‘neurons,’ to use and how they’re connected. But the actual learning, where the model adjusts its internal weights based on the errors it makes, happens automatically, driven by backpropagation. This training requires immense computing power and time, even on the most advanced hardware. It’s why building these large models is so expensive and energy-intensive.

Detecting AI Actions and Compliance

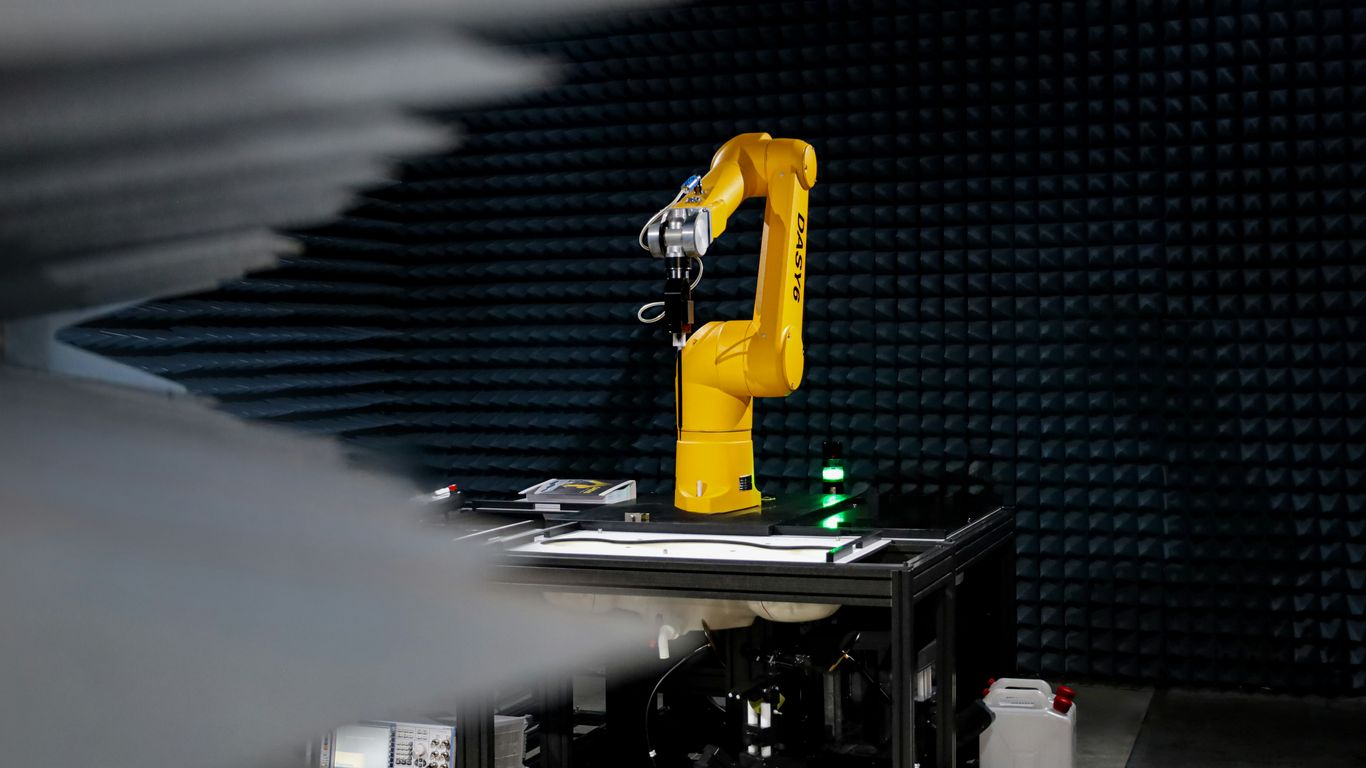

This leads to another tricky question: can we actually tell what an AI is doing, especially if we’re trying to make sure it’s following rules or agreements? The short answer is, it’s complicated. Because the training is automated and the models learn in ways that aren’t always transparent, it’s hard to pinpoint exactly why an AI made a specific decision. It’s not like reading a manual with step-by-step instructions. While developers can design the architecture, the learning itself is a black box to some extent. This makes it difficult to verify compliance with complex regulations or ethical guidelines. We rely on testing and checking the outputs in many different ways to build confidence that the AI is behaving as expected.

The Role of Human Oversight in AI

Given the complexities, human oversight remains incredibly important. Even with AI’s ability to learn and adapt, there are always potential blind spots or failure modes that might not be caught by the developers or even by specialized safety organizations. Users, especially well-informed ones, play a role in identifying these issues. Think about students using AI tools to write code; it’s vital they have good testing practices to ensure the AI-generated code actually works correctly. The dominance of a few large tech companies in developing these powerful AI models also raises concerns. While it’s possible to build specialized AI applications on top of these general models, the broad nature of the underlying AI means we might not know all its potential weaknesses. Therefore, having humans in the loop to monitor, test, and validate AI actions is not just a good idea; it’s a necessity for safe and responsible deployment.
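As a small illustration of what "good testing practices" can mean for AI-generated code, here is a minimal Python sketch. The `median_as_generated` function is hypothetical: it stands in for plausible-looking code an assistant might produce, written here with a deliberate bug on even-length lists. A handful of targeted test cases, including edge cases, catches the flaw that a quick read could miss.

```python
def median_as_generated(values):
    """Hypothetical AI-generated code: looks right, but mishandles even-length input."""
    ordered = sorted(values)
    return ordered[len(ordered) // 2]

def median_fixed(values):
    """Corrected version: average the two middle values when the length is even."""
    if not values:
        raise ValueError("median of empty list")
    ordered = sorted(values)
    mid = len(ordered) // 2
    if len(ordered) % 2:
        return ordered[mid]
    return (ordered[mid - 1] + ordered[mid]) / 2

# Test cases cover the obvious case and the edge cases.
CASES = [
    ([3, 1, 2], 2),       # odd length, unsorted input
    ([4, 1, 3, 2], 2.5),  # even length: the case that exposes the bug
    ([5], 5),             # single element
    ([-1, -1, 7], -1),    # duplicates and negatives
]

def failing_cases(func):
    """Return the inputs for which func disagrees with the expected answer."""
    return [values for values, expected in CASES if func(values) != expected]

print("generated:", failing_cases(median_as_generated))  # [[4, 1, 3, 2]]
print("fixed:", failing_cases(median_fixed))             # []
```

The generated version passes three of the four checks, which is exactly the kind of "mostly works" result that slips through without deliberate edge-case tests.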

Examining AI Development and Bias

When we talk about how AI is built and where it ends up, it’s easy to overlook some pretty big issues. For starters, a handful of massive tech companies really call the shots. They’ve got the resources, the data, and the top talent, which means they’re the ones shaping a lot of the AI we interact with. This concentration of power means that the AI tools and models that become widespread often come from a very small pool of developers.

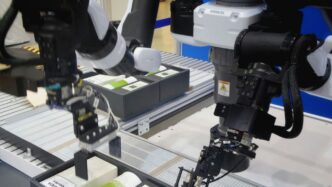

Dominance of Large Tech Companies

It’s not just about who has the most money; it’s about who has the most data. These big players collect vast amounts of information from their users, which is like gold for training AI. This gives them a significant edge in creating more sophisticated and capable AI systems. Smaller companies or research groups often struggle to compete because they just don’t have access to the same scale of data or computing power.

Building Specialized AI on General Models

Often, the path to creating a specialized AI tool involves starting with a broad, general-purpose model. Think of it like taking a really smart, all-around assistant and then teaching them a specific job. This approach can be more efficient than building something from scratch. However, the quality and characteristics of that initial general model heavily influence the final specialized AI. If the base model has flaws, those can easily carry over.
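One common way to build on a general model is to keep it frozen and train only a small new component on top, sometimes called a task head or linear probe. In the minimal Python sketch below, `general_features` is a hypothetical, hand-written stand-in for a large pretrained model (a real one would produce learned embeddings), and only the tiny logistic-regression head is trained. Because the head sees the data only through the base model’s features, the base model’s blind spots become the specialized tool’s blind spots.

```python
import math

def general_features(text):
    """Hypothetical stand-in for a frozen, general-purpose pretrained model.

    Returns two hand-picked features so the example stays self-contained.
    """
    words = text.lower().split()
    positive = sum(w in {"great", "love", "excellent"} for w in words)
    negative = sum(w in {"bad", "awful", "broken"} for w in words)
    return [float(positive), float(negative)]

def train_head(examples, learning_rate=0.5, epochs=500):
    """Train only a small logistic-regression head on top of the frozen features."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for text, label in examples:
            x = general_features(text)  # the base model is used as-is, never updated
            z = sum(w * xi for w, xi in zip(weights, x)) + bias
            error = 1.0 / (1.0 + math.exp(-z)) - label
            weights = [w - learning_rate * error * xi for w, xi in zip(weights, x)]
            bias -= learning_rate * error
    return weights, bias

def predict(head, text):
    weights, bias = head
    z = sum(w * xi for w, xi in zip(weights, general_features(text))) + bias
    return 1 if z > 0 else 0

# Hypothetical task: product reviews labeled 1 (positive) or 0 (negative).
REVIEWS = [("great phone love it", 1), ("awful battery", 0),
           ("excellent screen", 1), ("arrived broken bad", 0)]
head = train_head(REVIEWS)
print(predict(head, "love the excellent camera"))  # 1
print(predict(head, "bad and broken"))             # 0
print(general_features("terrible product"))        # [0.0, 0.0]
```

The last line shows the inherited flaw: the toy base model has no notion of "terrible," so the head receives no signal at all for that review and can only fall back on its bias term.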

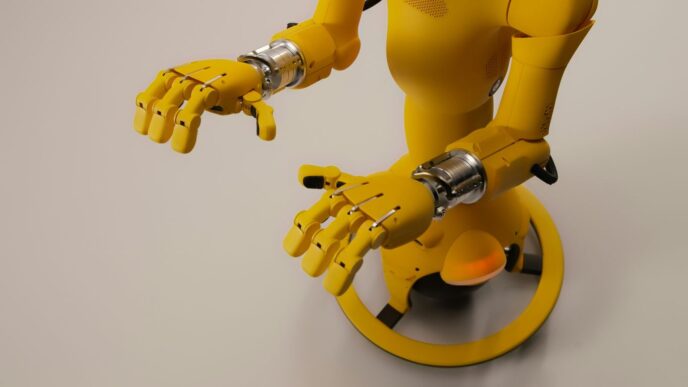

Recognizing and Mitigating AI Biases

This is where things get tricky. AI systems learn from the data they’re fed, and if that data reflects real-world biases, the AI will too. We’ve seen examples where asking for an image of a nurse results in pictures of women, while asking for a CEO or CTO often brings up images of men. This isn’t because the AI is intentionally sexist; it’s because the training data likely contained more images of women in nursing roles and men in leadership positions. Identifying and correcting these biases is a major challenge in AI development. It requires careful examination of training data and ongoing adjustments to the models.
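One concrete way to examine training data for this kind of skew is to count how often occupations co-occur with gendered words in the captions a model learns from. The Python sketch below does that for a handful of invented captions using deliberately crude word lists. A real audit would need far larger samples and more careful definitions of the groups being measured.

```python
from collections import Counter, defaultdict

# Invented captions standing in for an image-text training set.
CAPTIONS = [
    "a nurse helps her patient",
    "smiling nurse with her clipboard",
    "a nurse checks his chart",
    "the ceo gives his keynote",
    "ceo at his desk",
    "a ceo presents her plan",
    "the ceo and his board",
]

# Deliberately crude word lists, purely for illustration.
GENDER_WORDS = {"her": "female", "she": "female", "his": "male", "he": "male"}
OCCUPATIONS = {"nurse", "ceo"}

def cooccurrence(captions):
    """Count gendered words appearing alongside each occupation."""
    counts = defaultdict(Counter)
    for caption in captions:
        words = caption.lower().split()
        genders = {GENDER_WORDS[w] for w in words if w in GENDER_WORDS}
        for job in OCCUPATIONS.intersection(words):
            counts[job].update(genders)
    return counts

for job, counts in sorted(cooccurrence(CAPTIONS).items()):
    total = sum(counts.values())
    shares = {g: round(n / total, 2) for g, n in sorted(counts.items())}
    print(job, shares)
```

A lopsided share in the data is an early warning that the trained model may reproduce the same association in its outputs.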

The Impact of Training Data on AI Outputs

What goes in directly affects what comes out. If the data used to train an AI is skewed, incomplete, or contains historical prejudices, the AI’s decisions and outputs will reflect that. For instance, an AI used for hiring might unfairly penalize candidates from certain backgrounds if the historical hiring data it learned from was biased. Addressing this means:

- Auditing Data Sources: Regularly checking where the training data comes from and what it represents.

- Data Augmentation and Rebalancing: Generating variations of underrepresented examples, or collecting new ones, to offset imbalances in the existing data.

- Bias Detection Tools: Using specific software designed to flag potential biases in datasets and model outputs.

- Human Review: Implementing checks where humans review AI decisions, especially in sensitive areas, to catch and correct biased outcomes.
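Bias detection and human review often start with a simple check on model outputs: compare how often each group receives a favorable decision. The Python sketch below computes per-group selection rates and the ratio of the lowest to the highest, and flags the result for human review when that ratio falls below 0.8, a threshold borrowed from the "four-fifths rule" in US employment-selection guidance. The decisions are invented, and a single ratio is a screening signal, not proof of bias.

```python
from collections import defaultdict

# Invented hiring-model decisions: (applicant group, 1 = advanced, 0 = rejected).
DECISIONS = [
    ("group_a", 1), ("group_a", 1), ("group_a", 0), ("group_a", 1), ("group_a", 1),
    ("group_b", 1), ("group_b", 0), ("group_b", 0), ("group_b", 1), ("group_b", 0),
]

def selection_rates(decisions):
    """Fraction of favorable outcomes per group."""
    totals, favorable = defaultdict(int), defaultdict(int)
    for group, outcome in decisions:
        totals[group] += 1
        favorable[group] += outcome
    return {g: favorable[g] / totals[g] for g in totals}

def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    return min(rates.values()) / max(rates.values())

rates = selection_rates(DECISIONS)
ratio = disparate_impact_ratio(rates)
print(rates)            # {'group_a': 0.8, 'group_b': 0.4}
print(round(ratio, 2))  # 0.5
if ratio < 0.8:
    print("Flag for human review: selection rates differ substantially across groups.")
```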

The Future Landscape of AI Policy

Thinking about where AI is headed policy-wise can feel like trying to catch smoke. We’ve got these evolving ideas about what’s right and wrong when it comes to AI, and then we try to pin them down with actual rules. The tricky part is, just like how AI itself changes so fast, our ideas about ethics and norms have to keep up. Policy usually moves at a slower, more thoughtful pace, which is a problem when technology is sprinting ahead. It’s a constant catch-up game.

But here’s the thing: sometimes, it’s the problems we’re facing that push us to adopt new tech. Think about it. If government agencies are still using tools from years ago, and suddenly they’re dealing with things like deepfakes or voice scams, they’re going to have to get up to speed, and fast. Necessity really does drive innovation, even if it’s a bit of a scramble.

Here’s a look at some key areas shaping AI policy:

- Global AI Race: Nations are competing not just in building AI models but also in setting laws. The US is strong in creating foundation models, while countries like China are leading in developing the human talent needed for AI creation.

- Regulatory Approaches: Different countries are trying various methods, from creating laws to implementing containment policies, to manage AI’s growth and impact.

- Bridging the Gap: There’s a significant challenge in connecting the theoretical discussions about AI ethics and policy with the practical realities of implementation. The gap between directives and actual execution, especially with limited personnel capacity, is a major hurdle.

The pace of policy development often struggles to match the rapid advancements in AI technology. This creates a dynamic where regulations might lag behind the capabilities and potential issues of new AI systems.

Accountability and Auditability in AI Systems

When we talk about AI, especially systems that make decisions on their own, figuring out who’s responsible when things go wrong is a big question. It’s not always clear-cut. Think about it like this: if an AI system makes a mistake, who takes the blame? Is it the programmer, the company that deployed it, or the AI itself? This is where accountability comes in. It’s about establishing clear lines of responsibility.

Defining Accountability in AI

Accountability in AI means having a system in place to identify who is answerable for the actions and outcomes of an AI system. This is especially tricky with complex AI that learns and adapts. It’s not like a simple ‘if-then’ computer program anymore. The decisions can emerge from patterns in data that even the creators might not fully predict. So, we need ways to trace back decisions and understand the ‘why’ behind them.

The Importance of Auditability

This is where auditability becomes super important. Auditability is the ability to examine and verify the processes and data that led to an AI’s decision. It’s like having a detailed logbook for the AI. This allows us to:

- Trace Decisions: Understand the specific data points and logic that influenced a particular output.

- Identify Errors: Pinpoint where and why a mistake might have occurred.

- Ensure Compliance: Check if the AI is operating within set rules and ethical guidelines.

- Build Trust: Give users and regulators confidence that the AI is working as intended.

Without auditability, accountability becomes a guessing game. We can’t assign responsibility if we can’t understand how the system reached its conclusion. It’s like trying to figure out who broke a vase without being able to see how it fell.
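The "detailed logbook" idea can be sketched as an append-only decision log. In the minimal Python example below, each record stores the model version, inputs, output, and a timestamp, plus a SHA-256 hash of the previous record, so editing or reordering earlier entries breaks the chain and is detectable. The field names and the model version string are hypothetical; a real system would also need secure storage, access controls, and retention policies.

```python
import hashlib
import json
from datetime import datetime, timezone

class AuditLog:
    """Append-only decision log; each entry is chained to the previous one by hash."""

    def __init__(self):
        self.entries = []

    def record(self, model_version, inputs, output):
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "model_version": model_version,  # which model made the call
            "inputs": inputs,                # what it saw
            "output": output,                # what it decided
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._hash(entry)
        self.entries.append(entry)
        return entry

    @staticmethod
    def _hash(entry):
        # Hash every field except the hash itself, with keys sorted for stability.
        body = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def verify(self):
        """True if no entry was altered, reordered, or removed from mid-chain."""
        prev_hash = "0" * 64
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._hash(entry):
                return False
            prev_hash = entry["hash"]
        return True

log = AuditLog()
log.record("loan-model-v1.2", {"income": 52000, "debt_ratio": 0.31}, "approved")
log.record("loan-model-v1.2", {"income": 18000, "debt_ratio": 0.62}, "declined")
print(log.verify())  # True
log.entries[1]["output"] = "approved"  # simulate after-the-fact tampering
print(log.verify())  # False
```

Note that a hash chain alone does not detect deletion of the most recent entries; that usually requires storing the latest hash somewhere independent.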

Bridging Lexicons for Complex AI Discussions

One of the challenges we face is that people often use the same words but mean different things when talking about AI. For instance, one person might think of accountability as a legal concept, while another might see it as the technical ability to audit the system’s actions. This difference in language can lead to misunderstandings. It’s like two people discussing a car repair, but one is talking about the engine and the other about the paint job. To have productive conversations about AI governance and safety, we need to make sure we’re all on the same page. This means developing a clearer, shared vocabulary for discussing these complex topics. It requires patience and a willingness to explain our terms, ensuring that when we talk about accountability, we’re also thinking about the technical steps, like auditability, that make it possible.

Wrapping Up Our AI and Robotics Chat

So, we’ve gone through a bunch of common questions about AI and robotics, from how these systems learn to who’s in charge of them. It’s a pretty wild field, and things are changing fast. We saw how AI models learn from tons of data, not just simple if-then rules, and how that process is super complex and takes a lot of power. We also touched on how these tools are showing up everywhere, from helping with science to just making our daily lives a bit different. It’s clear there’s a lot to think about, both the cool stuff it can do and the tricky parts like safety and who controls it all. Hopefully, this cleared up some of the fog and made these topics a little less mysterious. Keep an eye out, because this is just the beginning of the story.