On February 27, 2026, PointFive launched DeepWaste™ AI. The company’s differentiator is not just broad connectivity but a specific way of structuring inefficiency: a four-layer model that detects waste in models, tokens, caching, and infrastructure, covering the AI execution stack as a whole rather than a single tool silo.

Why a “Full-Stack” Model Matters

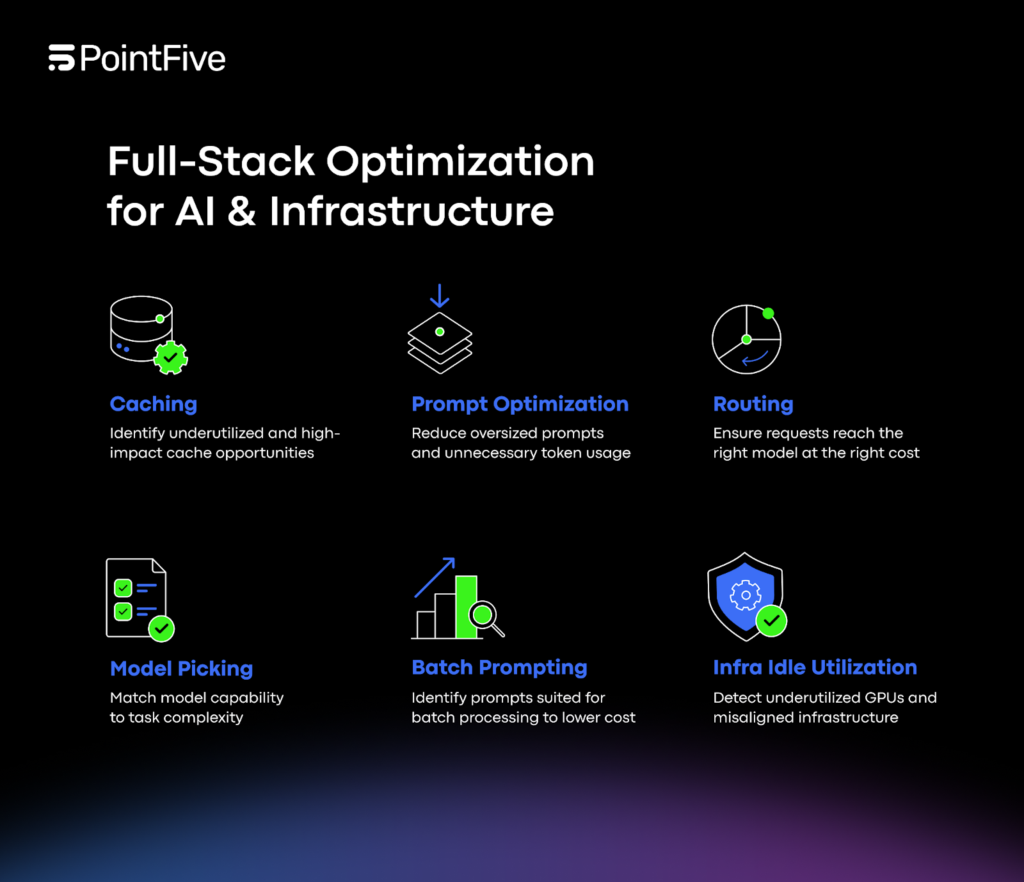

PointFive argues that production AI cost and performance are shaped by interacting layers: model selection, token consumption, routing logic, caching behavior, GPU utilization, retry patterns, and data platform orchestration. In practice, these elements don’t operate independently. Routing affects which model is invoked. Model choice affects token usage patterns. Caching determines whether repeated work is paid for repeatedly. Retries can inflate spend while creating latency outliers. Data platform orchestration influences invocation frequency and batching behavior. Traditional cloud optimization tools, PointFive says, were not built to analyze this AI-specific stack as a system.

DeepWaste AI is presented as a purpose-built module to do exactly that.

Agentless Connectivity as the Foundation

DeepWaste AI connects directly to cloud APIs, LLM service metrics, GPU telemetry, and billing systems without agents, instrumentation, or code changes. By default, it operates using metadata, billing signals, performance metrics, and resource configuration data without requiring raw inference logs. Optional inference-level analysis can be enabled for organizations that want deeper evaluation of prompt architecture and orchestration logic, with customers controlling the depth of analysis.

Layer 1: Model & Routing Intelligence

The first layer focuses on whether tasks are served by the right model in the right mode. PointFive lists signals such as model-task mismatch, routing downgrade opportunities, batch versus real-time routing misalignment, and workload benchmarking outliers. The practical implication is that systems can be accurate yet inefficient: routing logic may default to a larger model than necessary, or real-time routing may be used where batching is more appropriate. DeepWaste AI is designed to surface these mismatches as optimization opportunities grounded in workload signals.
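As a rough illustration of what a "routing downgrade opportunity" could look like in data, the sketch below flags requests served by a larger model tier than the task is assumed to need. The tier names, the task-to-tier mapping, and the `Request` record are hypothetical and are not taken from PointFive's product.

```python
from dataclasses import dataclass

# Hypothetical model tiers; a real deployment would define its own.
MODEL_TIER = {"small-model": 1, "medium-model": 2, "large-model": 3}

# Assumed minimum tier each task type needs (illustrative values only).
REQUIRED_TIER = {"classification": 1, "summarization": 2, "code-generation": 3}


@dataclass
class Request:
    task: str
    model: str


def downgrade_candidates(requests):
    """Return requests served by a higher tier than the task is assumed to need."""
    return [r for r in requests if MODEL_TIER[r.model] > REQUIRED_TIER[r.task]]


requests = [
    Request("classification", "large-model"),   # oversized for the task
    Request("summarization", "medium-model"),   # matches the assumed need
    Request("code-generation", "large-model"),  # matches the assumed need
]
candidates = downgrade_candidates(requests)
for r in candidates:
    print(f"{r.task} served by {r.model}: possible downgrade")
```

In practice the required tier would have to come from quality benchmarks on the actual workload, not from a static table like this one.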

Layer 2: Token & Prompt Economics

The second layer targets token-driven cost and performance patterns. PointFive highlights prompt bloat, context window overprovisioning, output inflation from misconfigured max_tokens, parameter-task misalignment, and structural token waste patterns. This layer reflects a production truth: tokens drive both cost and latency. Overly large prompts and context windows waste budget on input, while loose output settings let responses run longer than a task requires. DeepWaste AI is designed to detect these patterns as systematic, repeatable inefficiencies.
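The arithmetic behind token waste is simple enough to sketch. The example below compares the cost of a bloated request with a trimmed one under assumed per-token prices. The prices and token counts are made up for illustration; real prices vary by provider and model.

```python
# Hypothetical per-1,000-token prices; real prices vary by provider and model.
INPUT_PRICE_PER_1K = 0.50
OUTPUT_PRICE_PER_1K = 1.50


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Cost of one request under the assumed prices above."""
    input_cost = (input_tokens / 1000) * INPUT_PRICE_PER_1K
    output_cost = (output_tokens / 1000) * OUTPUT_PRICE_PER_1K
    return input_cost + output_cost


# Illustrative token counts, not measurements.
bloated = request_cost(input_tokens=4000, output_tokens=800)
trimmed = request_cost(input_tokens=1000, output_tokens=200)
savings_per_request = bloated - trimmed

print(f"bloated={bloated:.2f} trimmed={trimmed:.2f} saved={savings_per_request:.2f}")
```

Because the saving applies to every request, even a small per-request difference compounds quickly at production volume.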

Layer 3: Caching & Reuse Optimization

The third layer addresses repeated work. DeepWaste AI detects duplicate inference, underutilized native caching capabilities, and cache miss rate inefficiencies. In production systems with repeated prompts, shared workflows, or common queries, caching and reuse can dramatically alter cost. PointFive’s framing is that the gap is often not “no caching exists,” but that caching is underused or misaligned with real workloads, resulting in repeated inferences that could have been avoided.
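One simple way to estimate how much inference is duplicated is to hash a normalized form of each prompt and count repeats. The sketch below does that. The normalization rule (collapse whitespace, lowercase) is an assumption chosen for illustration and would be too aggressive for case-sensitive prompts. Nothing here reflects how DeepWaste AI itself detects duplicates.

```python
import hashlib
from collections import Counter


def prompt_key(model: str, prompt: str) -> str:
    """Hash the model name plus a normalized prompt (assumed normalization)."""
    normalized = " ".join(prompt.split()).lower()
    return hashlib.sha256(f"{model}\n{normalized}".encode("utf-8")).hexdigest()


def duplicate_rate(calls) -> float:
    """Fraction of calls that repeat an earlier (model, prompt) pair."""
    counts = Counter(prompt_key(model, prompt) for model, prompt in calls)
    total = sum(counts.values())
    repeated = sum(count - 1 for count in counts.values())
    return repeated / total if total else 0.0


# Hypothetical call log: (model, prompt) pairs.
calls = [
    ("example-model", "What is our refund policy?"),
    ("example-model", "what is our  refund policy?"),
    ("example-model", "Summarize ticket 1"),
    ("example-model", "What is our refund policy?"),
]
rate = duplicate_rate(calls)
print(f"duplicate rate: {rate:.0%}")
```

A high duplicate rate suggests that a response cache, or a provider's native prompt caching, could absorb a meaningful share of calls.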

Layer 4: Infrastructure & Operational Leakage

The fourth layer focuses on the infrastructure and operational patterns that inflate cost or degrade performance: idle GPUs, instance-type mismatch, driver-level throughput limitations, retry-driven cost inflation, latency outliers, and provisioning misalignment. For GPU infrastructure specifically, PointFive says DeepWaste AI identifies underutilized or idle GPUs, instance-type mismatches, OS and driver misconfigurations, and hardware-to-workload misalignment. This layer ties observed system behavior to how infrastructure is actually configured and utilized over time.
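Idle-GPU detection can be approximated from utilization telemetry alone. The sketch below flags GPUs whose mean utilization over a sampling window falls below a threshold. The 10% threshold, the GPU identifiers, and the sample values are assumptions for illustration, not PointFive's criteria.

```python
from statistics import mean


def idle_gpus(utilization, threshold_pct=10.0):
    """Return IDs of GPUs whose mean utilization is below the threshold.

    `utilization` maps a GPU ID to a list of utilization samples in percent.
    GPUs with no samples are skipped rather than reported as idle.
    """
    return sorted(
        gpu_id
        for gpu_id, samples in utilization.items()
        if samples and mean(samples) < threshold_pct
    )


# Hypothetical telemetry window.
utilization = {
    "gpu-0": [85, 90, 78],
    "gpu-1": [2, 0, 5],
    "gpu-2": [],  # no data collected
}
idle = idle_gpus(utilization)
print(idle)
```

A mean over a window hides bursty usage, so a production check would likely also consider peak utilization and how long the GPU has stayed below the threshold.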

Coverage Across Providers and Platforms

DeepWaste AI provides native connectivity across AWS (Bedrock, SageMaker, and AI managed services), Azure (Azure OpenAI, Azure ML, Cognitive Services), GCP (Vertex AI and AI services), plus OpenAI and Anthropic direct APIs. It also extends optimization across AI data platforms with native support for Snowflake and Databricks, covering workflows from data ingestion through inference.

Turning AI Efficiency Into an Operating Discipline

PointFive says each finding includes a quantified savings estimate and implementation guidance, prioritized by impact and mapped to engineering and FinOps workflows. “AI workloads introduce a new category of operational complexity,” said Alon Arvatz, CEO of PointFive. “DeepWaste AI gives organizations the intelligence required to scale AI efficiently, across models, infrastructure, and data platforms, without sacrificing control.”

DeepWaste AI is now available to PointFive customers.