IBM Quantum Unveils New Processors and Software Advancements

IBM has announced a new round of quantum hardware and software, and the company says it moves the field closer to the "quantum advantage" milestone we keep hearing about.

Introducing IBM Quantum Nighthawk Processor

First up is the IBM Quantum Nighthawk processor, which IBM designed specifically with quantum advantage in mind. Think of it as a specialized tool for problems that are out of reach for even the most powerful classical computers. IBM expects Nighthawk to be available to users by the end of 2025 and says it should let them run circuits about 30 percent more complex than IBM’s previous processors allowed. The chip is a key piece of IBM’s plan to show that quantum computers can solve real-world problems faster than classical ones.

IBM Quantum Loon: A Step Towards Fault Tolerance

Then there’s IBM Quantum Loon. This one is more experimental, but it’s showing off all the necessary parts for what’s called "fault-tolerant" quantum computing. That’s a big deal because it’s about making quantum computers reliable enough to handle complex calculations without errors messing things up. Loon is testing out a new way to build these systems, including ways to connect qubits that are further apart on the chip and even reset them between calculations. It’s all about building the foundation for quantum computers that can actually be trusted for serious work.

Qiskit Enhancements for Accuracy and Efficiency

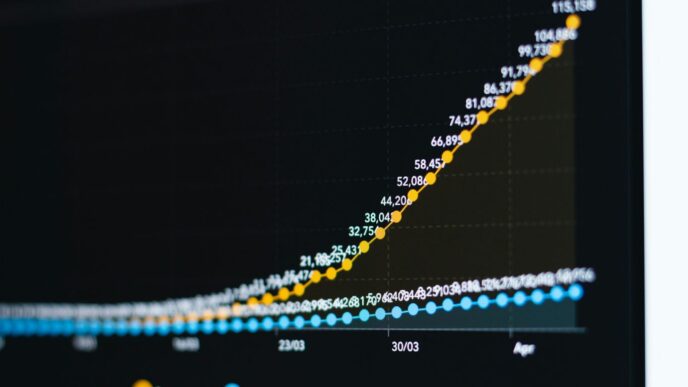

It’s not just about the hardware, though. IBM is also improving its Qiskit software, with new features aimed at making quantum circuits more accurate and efficient. For instance, IBM reports a 24 percent increase in accuracy with "dynamic circuits", and says that using high-performance computing for error mitigation makes accurate results over 100 times cheaper to obtain. It has also demonstrated efficient quantum error correction decoding a year ahead of schedule. The software side, in other words, is keeping pace with the hardware, which makes it easier for researchers to actually use these machines.

Accelerating Quantum Development and Fabrication

So, IBM’s really stepping up its game when it comes to actually building these quantum computers. It’s not just about the theory anymore; they’re making big moves in how they make the hardware.

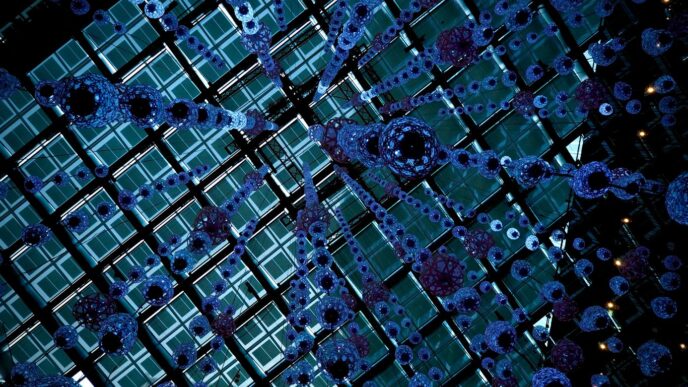

Transition to 300mm Wafer Fabrication

This is a pretty big deal. IBM is now making its quantum processor wafers at a 300mm fabrication facility. Think of it like moving from a small workshop to a much bigger, more advanced factory. This upgrade means they can produce more chips, and importantly, they can do it faster. They’re using some really cutting-edge tools in this new facility, which helps them learn and improve the processors much quicker than before. It’s all about getting more complex chips made efficiently.

Doubling Research and Development Speed

Because they’ve moved to this bigger fabrication setup, IBM is seeing a significant speed-up in their R&D. They’re cutting down the time it takes to build each new processor by at least half. This means they can test out new ideas and designs much more rapidly. Imagine being able to try out twice as many experiments in the same amount of time – that’s the kind of acceleration we’re talking about here. It really helps them push the boundaries faster.

Increasing Physical Complexity of Quantum Chips

This new fabrication process isn’t just about speed and quantity; it’s also about making the chips themselves more intricate. IBM is now able to achieve a ten-fold increase in the physical complexity of their quantum chips. What does that mean? Well, it allows them to pack more qubits and connect them in more sophisticated ways. This is absolutely key for building the kind of complex systems needed for things like fault-tolerant quantum computing, where you need lots of qubits working together precisely. They can now explore multiple chip designs at the same time, which is a huge advantage for innovation.

Progress Towards Quantum Advantage and Fault Tolerance

IBM is making some serious moves towards getting quantum computers to a point where they can actually solve problems better than regular computers, and also towards building machines that can correct their own errors. It’s a big deal, and they’ve got some clear targets.

Quantum Advantage Targeted for 2026

So, the big goal is to hit "quantum advantage" by the end of 2026. What does that mean? It’s basically the moment a quantum computer can tackle a problem that even the most powerful classical supercomputers can’t handle efficiently. Think of it like a race where the quantum car just pulled way ahead. IBM is working with others, like BlueQubit and Algorithmiq, to track these moments. They’re contributing experiments to a public tracker to make sure these claims are solid and to compare quantum approaches with the best classical methods out there. It’s not just about building the machine; it’s about proving it can do something new and useful.

Fault-Tolerant Quantum Computing by 2029

Beyond just being faster for certain problems, IBM is also pushing hard for "fault-tolerant" quantum computing, aiming for 2029. This is where things get really interesting for long-term, complex tasks. Fault tolerance means the quantum computer can actively correct errors that pop up during calculations. Those errors are a huge headache right now, and fixing them is essential if we want to run really long and complicated quantum programs. IBM says it has shown the hardware pieces needed to make this happen, for example in its IBM Quantum Loon demonstrations. It’s like building a bridge that can repair itself as you cross it.

Key Components for Error Correction Demonstrated

To get to that fault-tolerant future, IBM has been showing off the building blocks. They’ve demonstrated efficient ways to decode quantum error correction, which is a fancy way of saying they’ve found faster methods to figure out and fix errors. They even managed to do this a year ahead of schedule, which is pretty cool. Plus, they’re making their Qiskit software better, allowing for more complex circuits and using high-performance computing (HPC) to cut down the cost of getting accurate results from these error-prone machines. It’s all about making sure the quantum calculations are reliable, even when the hardware isn’t perfect yet.

Innovations in Quantum Algorithms and Error Mitigation

So, what’s new on the algorithm and error correction front? It turns out IBM has been busy making quantum computers more reliable and useful. They’re working on ways to make sure the answers we get from these machines are actually correct, which, as you can imagine, is pretty important.

Efficient Quantum Error Correction Decoding

One of the big hurdles in quantum computing is dealing with errors. Qubits are delicate, and their state gets disturbed easily. IBM has made serious headway here by achieving efficient quantum error correction decoding. Decoding is the step where classical hardware looks at the error signals coming off the chip and works out what went wrong so it can be corrected, and it has to keep up with the quantum processor in real time. IBM reports a 10x speedup compared to the current best methods, delivered a year ahead of schedule.
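To make "decoding" a bit more concrete, here is a minimal sketch using the classic 3-bit repetition code. This is a textbook toy and not IBM’s decoder or the code family IBM uses; the names `SYNDROME_TO_FLIP`, `measure_syndrome`, and `decode` are made up for illustration. The idea it shows is the general one: parity checks produce a "syndrome", and the decoder maps that syndrome to a correction.

```python
# Toy decoder for the 3-bit repetition code (illustrative only, not IBM's method).
# A logical 0 is stored as [0, 0, 0] and a logical 1 as [1, 1, 1].

# Syndrome (parity of bits 0 and 1, parity of bits 1 and 2) -> index of the bit to flip.
# Assumes at most one bit has been flipped.
SYNDROME_TO_FLIP = {
    (0, 0): None,  # no error detected
    (1, 0): 0,     # only the first check fired: bit 0 is wrong
    (1, 1): 1,     # both checks fired: the shared bit 1 is wrong
    (0, 1): 2,     # only the second check fired: bit 2 is wrong
}


def measure_syndrome(bits):
    """Return the two parity checks without reading the logical value itself."""
    return (bits[0] ^ bits[1], bits[1] ^ bits[2])


def decode(bits):
    """Look up the correction for the measured syndrome and apply it."""
    flip = SYNDROME_TO_FLIP[measure_syndrome(bits)]
    corrected = list(bits)
    if flip is not None:
        corrected[flip] ^= 1
    return corrected


if __name__ == "__main__":
    noisy = [1, 0, 1]  # logical 1 with an error on the middle bit
    print(measure_syndrome(noisy), decode(noisy))
```

A lookup table like this only works for tiny codes. Real decoders face far larger syndromes arriving continuously, which is why decoding speed is treated as a milestone in its own right.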

HPC-Powered Error Mitigation Techniques

Beyond just fixing errors after they happen, IBM is also using powerful classical computers, known as High-Performance Computing (HPC) systems, to help out. They’ve integrated these HPC capabilities into Qiskit, their quantum software development kit. According to IBM, HPC-powered error mitigation makes accurate results over 100 times cheaper to obtain. They’ve even added a C++ interface to Qiskit, so people working with existing HPC setups can jump right in and use quantum programming.

Dynamic Circuit Capabilities in Qiskit

Qiskit itself is getting a serious upgrade. IBM is expanding its dynamic circuit capabilities, which basically means a quantum circuit can change on the fly during computation: it can measure some qubits partway through and use those results to decide which operations to apply next. This is a big deal for accuracy. On systems with over 100 qubits, IBM reports a 24 percent increase in accuracy. This gives developers more control and makes the whole process more robust. These advancements are key to moving from noisy, intermediate-scale quantum devices to machines that can actually solve problems we care about.
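For a feel of what "changing on the fly" means, here is a small sketch of one common textbook use of dynamic circuits: measure a qubit mid-circuit, then flip it back to 0 only if the result was 1. This is a pure-Python toy simulation of a single qubit, not Qiskit code and not IBM’s implementation; every function name here (`hadamard`, `pauli_x`, `measure`, `active_reset`) is hypothetical.

```python
import math
import random

# Toy single-qubit simulator (illustrative only). A state is a pair of
# amplitudes (a, b) for |0> and |1>.


def hadamard(state):
    """Put the qubit into an equal superposition when it starts in |0>."""
    a, b = state
    s = 1 / math.sqrt(2)
    return (s * (a + b), s * (a - b))


def pauli_x(state):
    """Bit flip: swap the |0> and |1> amplitudes."""
    a, b = state
    return (b, a)


def measure(state, rng):
    """Measure in the computational basis; return (outcome, collapsed state)."""
    _, b = state
    if rng.random() < abs(b) ** 2:
        return 1, (0.0, 1.0)
    return 0, (1.0, 0.0)


def active_reset(state, rng):
    """Dynamic-circuit style step: measure, then branch on the classical result."""
    outcome, state = measure(state, rng)
    if outcome == 1:  # decision made mid-circuit, based on the measurement
        state = pauli_x(state)
    return outcome, state


if __name__ == "__main__":
    rng = random.Random(7)
    for _ in range(5):
        outcome, final_state = active_reset(hadamard((1.0, 0.0)), rng)
        print("measured", outcome, "-> final state", final_state)
```

Whichever outcome the measurement gives, the qubit ends in the 0 state, because the correction is chosen after the measurement rather than fixed in advance. A static circuit cannot do this, since all of its gates are decided before anything is measured.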

IBM’s Vision for Scalable Quantum Computing

A Holistic Approach to Quantum Scaling

IBM isn’t just thinking about making quantum computers bigger; they’re looking at the whole picture. It’s like building a city, not just a single skyscraper. They’re working on everything from the actual chips to the software that runs on them, and even how they’re made. This means they’re not just adding more qubits, but also making sure those qubits can talk to each other better and that the whole system is more reliable. They believe this all-in approach is the only way to really make quantum computing useful for big problems.

Enabling Transformative Quantum Applications

So, what’s the point of all this scaling? IBM sees quantum computers tackling some seriously tough challenges. Think about discovering new medicines, creating advanced materials, or even optimizing complex financial systems. These are problems that today’s best computers struggle with. By scaling up their quantum systems, IBM aims to provide the tools needed to make these kinds of breakthroughs a reality. They’re even planning to add more specialized software libraries to Qiskit by 2027, focusing on areas like machine learning and optimization, to help with hard problems such as differential equations.

Commitment to Trust and Transparency

As quantum computing moves forward, IBM is also focused on being open about their progress. They’re working with partners to track and verify claims of quantum advantage, which is basically when a quantum computer does something a regular computer can’t. This helps build confidence in the technology. They’re also making their software, Qiskit, more accessible, even adding a C++ interface so people working with high-performance computing can use it more easily. This openness is key to growing the whole quantum community.

Wrapping Up

So, IBM is pushing forward on several fronts at once: more complex processors, faster fabrication, and software that squeezes more accuracy out of today’s hardware. They’re also working on error correction, which is a big deal for making quantum computers actually useful. The targets are concrete, with quantum advantage by the end of 2026 and fault tolerance by 2029, so the next few years should show whether the plan holds up. It’s definitely a space to watch.