For a long time, the transition from monolithic systems to microservices was presented as a straightforward sign of architectural progress. Current research supports a more restrained view. Migration produces sustainable results when it is organized as a sequence of boundary decisions, data redesign choices, interface controls, and governance arrangements. Phased modernization usually works better than abrupt decomposition because it reduces operational shock and exposes structural problems before they spread across a distributed system.

Start with the monolith that actually exists

The first question in refactoring is not which service to extract first. The more useful question concerns the monolith’s internal condition. In production environments, a monolith usually represents an accumulation of tightly connected business rules, transaction paths, release practices, database dependencies, and ownership habits. Refactoring without architectural diagnosis often leads to a cosmetic decomposition in which former in-process dependencies reappear as remote calls.

A serious migration methodology begins with a reading of dependency density, domain cohesion, runtime bottlenecks, release friction, transaction chains, and the separation between stable and volatile business capabilities. Decomposition quality depends heavily on whether boundaries are reconstructed before code distribution begins. At the early stage, the real question is whether the system and the organization are prepared for controlled separation.
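One way to make "dependency density" concrete is to count incoming and outgoing dependencies per module. The sketch below is illustrative only: the module names and edges are hypothetical, and the instability ratio (outgoing divided by total coupling, borrowed from Robert C. Martin's package metrics) is just one possible proxy. The source does not prescribe a specific metric.

```python
from collections import defaultdict

# Hypothetical dependency edges: (source, target) means "source depends on target".
# In a real diagnosis these would come from static analysis or runtime traces.
EDGES = [
    ("orders", "billing"),
    ("orders", "inventory"),
    ("billing", "customers"),
    ("inventory", "orders"),
    ("reporting", "orders"),
    ("reporting", "billing"),
    ("reporting", "customers"),
]


def coupling_metrics(edges):
    """Return {module: (fan_in, fan_out, instability)} for a dependency edge list."""
    fan_in = defaultdict(set)
    fan_out = defaultdict(set)
    modules = set()
    for src, dst in edges:
        modules.update((src, dst))
        if src != dst:
            fan_out[src].add(dst)
            fan_in[dst].add(src)
    metrics = {}
    for module in sorted(modules):
        ca, ce = len(fan_in[module]), len(fan_out[module])
        # Instability = outgoing / (incoming + outgoing); 0.0 for isolated modules.
        instability = ce / (ca + ce) if (ca + ce) else 0.0
        metrics[module] = (ca, ce, instability)
    return metrics


def mutual_dependencies(edges):
    """Return module pairs that depend on each other in both directions."""
    edge_set = set(edges)
    pairs = {
        tuple(sorted((a, b)))
        for a, b in edge_set
        if a != b and (b, a) in edge_set
    }
    return sorted(pairs)


if __name__ == "__main__":
    for name, (ca, ce, inst) in coupling_metrics(EDGES).items():
        print(f"{name:10s} fan_in={ca} fan_out={ce} instability={inst:.2f}")
    print("mutual:", mutual_dependencies(EDGES))
```

Modules with many incoming dependencies and pairs that depend on each other in both directions are the places where a premature split would most likely turn in-process calls into remote ones.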

The real target is controllability

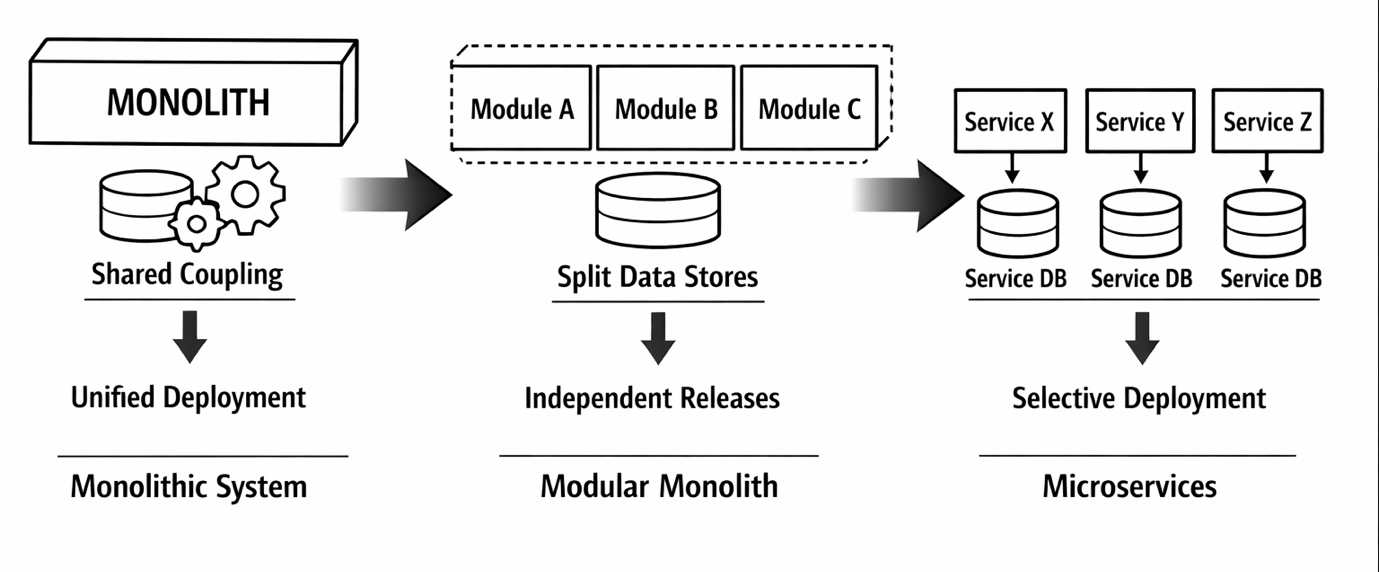

Microservices are often described as the destination, but the stronger target is architectural controllability. In many systems, that target is reached more reliably through an intermediate modular monolith. The modularization phase already demands substantial effort and can affect performance before remote service communication appears at all. That observation changes the logic of migration planning. The first burden is not distributed deployment. The first burden is boundary repair.

A modular monolith works as a lower-risk proving ground. It allows teams to test domain slices, internal contracts, release autonomy, and ownership rules while the application remains within a single deployable unit. In that sense, it serves as an intermediate architecture between legacy entanglement and fully distributed services.

Figure 1 visualizes this phased trajectory. On the left, the system appears as a tightly coupled monolith with shared data access, cross-cutting dependencies, and synchronized releases. In the center, the same application is reorganized into explicit modules with clearer contracts and sharper ownership. On the right, only the modules under genuine pressure for independent scaling, faster change, or technology divergence are extracted into microservices. The logic behind the figure is straightforward: distribution works better when it follows successful modularization.
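Inside a modular monolith, explicit contracts can be enforced with a simple boundary check. The following is a minimal sketch under a stated assumption: each module exposes its public surface through a hypothetical `<module>.api` package, and any other cross-module import counts as a violation. The convention and the module names are illustrative, not taken from the source.

```python
def boundary_violations(imports):
    """Return cross-module imports that bypass the target module's public API.

    `imports` is an iterable of (importer, imported) dotted paths.
    Assumed convention (hypothetical): only `<module>.api` and its
    submodules may be imported from outside the module.
    """
    violations = []
    for importer, imported in imports:
        source_module = importer.split(".")[0]
        target_parts = imported.split(".")
        target_module = target_parts[0]
        if source_module == target_module:
            continue  # imports within a module are unrestricted
        is_public = len(target_parts) >= 2 and target_parts[1] == "api"
        if not is_public:
            violations.append((importer, imported))
    return violations


if __name__ == "__main__":
    observed = [
        ("orders.service", "billing.api"),              # allowed: public contract
        ("orders.service", "billing.internal.ledger"),  # violation: reaches internals
        ("orders.service", "orders.models"),            # allowed: same module
    ]
    for importer, imported in boundary_violations(observed):
        print(f"{importer} must not import {imported}")
```

A check of this kind can run in continuous integration, so that boundary repair is verified while the application is still a single deployable unit.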

Not every domain deserves early extraction

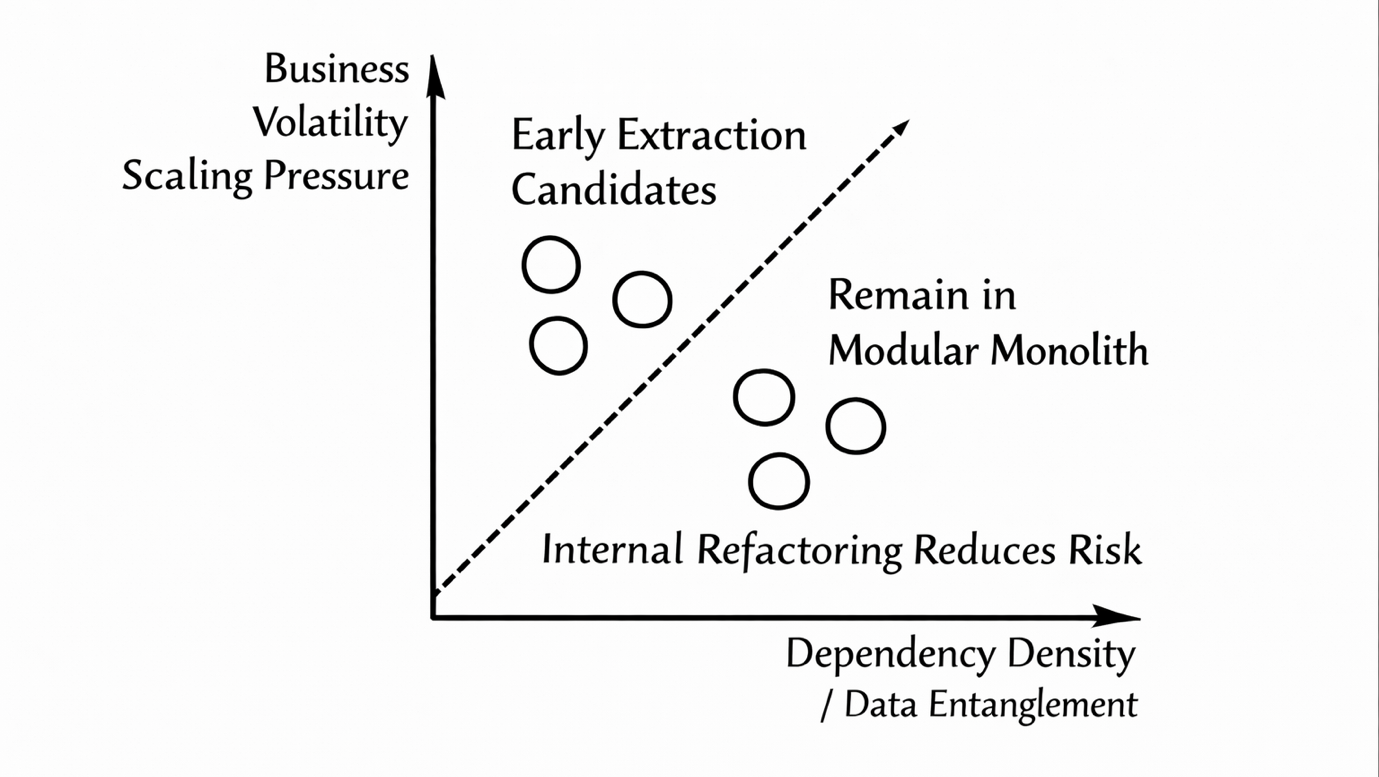

Once the application has been structurally analyzed, the next task is candidate selection. Early extraction works best when the business purpose is clear, ownership is stable, scaling pressure is visible, and interfaces can be stabilized without pulling large parts of the monolith along. By contrast, heavily transactional components, deeply shared schemas, and workflows that depend on implicit in-process coordination usually produce fragile services when extracted too early.

Better candidates emerge when business logic, interface responsibility, and data ownership align. In microservice settings, bounded contexts remain a practical device for boundary discovery. Even so, identifying service candidates still depends on disciplined interpretation of domain inputs, technical dependencies, and migration constraints.

Figure 2 presents the selection logic as a two-axis field. One axis reflects business volatility and scaling pressure. The other reflects dependency density and data entanglement. Components in the high-pressure, low-entanglement zone become realistic candidates for early extraction. Components in the low-pressure, high-entanglement zone remain inside the modular monolith until internal restructuring reduces migration risk. The value of this figure lies in practical discrimination: migration planning becomes unstable when attractive domains are not distinguished from dangerous ones.
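The two-axis logic can be written down as a small classifier. This is a sketch under assumptions: the scores are normalized to the range 0 to 1, the 0.5 threshold is arbitrary, and the component names are hypothetical. The text describes only two of the four quadrants, so the labels for the other two are an illustrative guess.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Component:
    name: str
    pressure: float      # business volatility and scaling pressure, 0..1 (assumed scale)
    entanglement: float  # dependency density and data entanglement, 0..1 (assumed scale)


def classify(component, threshold=0.5):
    """Place a component in one quadrant of the two-axis selection field."""
    high_pressure = component.pressure >= threshold
    high_entanglement = component.entanglement >= threshold
    if high_pressure and not high_entanglement:
        return "extract early"
    if not high_pressure and high_entanglement:
        return "keep in modular monolith"
    if high_pressure and high_entanglement:
        # Not described in the text; assumed: reduce entanglement before extraction.
        return "restructure internally first"
    # Not described in the text; assumed: nothing forces a decision yet.
    return "no urgency"


if __name__ == "__main__":
    candidates = [
        Component("notifications", pressure=0.8, entanglement=0.2),
        Component("ledger", pressure=0.3, entanglement=0.9),
        Component("pricing", pressure=0.9, entanglement=0.8),
    ]
    for c in candidates:
        print(f"{c.name}: {classify(c)}")
```

The scoring itself remains a judgment call. The value of the sketch is only that it forces both axes to be estimated for every candidate before extraction order is decided.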

Data migration decides the pace of the transition

Database design is often where elegant migration plans lose contact with operational reality. Most monoliths were built on shared persistence, broad joins, and implicit assumptions about consistency across business areas. For that reason, data strategy belongs in the center of the methodology. Transitional designs usually rely on a period in which legacy and new persistence models coexist while write paths are gradually redirected and ownership becomes more explicit. In practice, decomposition, data redesign, and communication architecture have to be coordinated.
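Gradual redirection of write paths can be pictured as a repository that knows which store currently owns a piece of data. The sketch below uses in-memory stand-ins and hypothetical class names. It also simplifies heavily: real dual writes are not atomic and need reconciliation, which is not shown here.

```python
class InMemoryStore:
    """Stand-in for a real database; used only to keep the sketch runnable."""

    def __init__(self, name):
        self.name = name
        self.rows = {}

    def write(self, key, value):
        self.rows[key] = value

    def read(self, key):
        return self.rows.get(key)


class TransitionalRepository:
    """Routes reads and writes for one data set while two stores coexist.

    `owner` names the store that is authoritative ("legacy" or "service").
    With `shadow_write` enabled, every write is also copied to the other
    store so the two can be compared before or after cut-over.
    """

    def __init__(self, legacy, service, owner="legacy", shadow_write=False):
        self.legacy = legacy
        self.service = service
        self.owner = owner
        self.shadow_write = shadow_write

    def _stores(self):
        if self.owner == "service":
            return self.service, self.legacy
        return self.legacy, self.service

    def write(self, key, value):
        primary, secondary = self._stores()
        primary.write(key, value)
        if self.shadow_write:
            # Not atomic with the primary write; a real system needs reconciliation.
            secondary.write(key, value)

    def read(self, key):
        primary, _ = self._stores()
        return primary.read(key)

    def cut_over(self):
        """Transfer write ownership to the service-owned store."""
        self.owner = "service"
```

In this arrangement ownership changes through one explicit switch per data set, which keeps the pace of the transition visible and reversible.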

Coexistence is an engineering stage

In enterprise systems, the monolith and emerging services often coexist longer than expected. During that period, migration becomes an exercise in coexistence engineering. Teams need API mediation, anti-corruption layers, contract translation, event publication rules, rollback paths, and observability points that make hybrid operation manageable under production pressure. This staged coexistence model allows teams to gradually reallocate responsibilities instead of forcing a single disruptive transfer.

Table 1. Coexistence architecture for phased migration with API mediation, event flow, and progressive data ownership transfer (compiled by the author based on his own research)

| Migration layer | Main function during coexistence | Typical design concern |
| --- | --- | --- |
| Monolith core | Continues to serve legacy workflows and tightly coupled transactional paths | Preserving stability while internal modules are re-bounded |
| API mediation layer | Routes calls between legacy modules and extracted services | Preventing interface breakage and hidden dependency spread |
| Contract translation layer | Aligns legacy payloads and service-specific contracts | Reducing semantic mismatch between old and new interfaces |
| Event publication layer | Moves selected interactions off synchronous request paths | Supporting eventual consistency without losing traceability |
| Observability layer | Tracks failures, latency, retries, and cross-boundary behavior | Making hybrid runtime behavior diagnosable |
| Transitional data layer | Supports temporary coexistence of shared and service-owned persistence | Avoiding uncontrolled duplication and write conflicts |
| Service-owned stores | Gradually receive isolated write responsibility from the monolith | Establishing real ownership |
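Two of the layers in Table 1, API mediation and contract translation, can be illustrated in a few lines. The sketch is deliberately small and rests on assumptions: the route prefix, the legacy field names (`CUST_NO`, `FNAME`, `LNAME`, `STATUS`), and the target contract are all hypothetical.

```python
# Hypothetical list of path prefixes already served by extracted services.
EXTRACTED_PREFIXES = ("/customers",)


def route(path):
    """API mediation: decide whether a request goes to a service or the monolith."""
    for prefix in EXTRACTED_PREFIXES:
        # Match the prefix itself or a sub-path, but not look-alikes
        # such as "/customersupport".
        if path == prefix or path.startswith(prefix + "/"):
            return "service"
    return "monolith"


def translate_legacy_customer(legacy):
    """Contract translation: map an assumed legacy payload to an assumed service contract."""
    return {
        "customer_id": str(legacy["CUST_NO"]),
        "full_name": f'{legacy["FNAME"].strip()} {legacy["LNAME"].strip()}',
        "active": legacy["STATUS"] == "A",
    }


if __name__ == "__main__":
    print(route("/customers/42"))
    print(route("/orders/7"))
    print(translate_legacy_customer(
        {"CUST_NO": 42, "FNAME": "Ada ", "LNAME": " Lovelace", "STATUS": "A"}
    ))
```

Keeping the translation in one place means the extracted service never sees legacy field names, and the routing table documents exactly which responsibilities have moved.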

API evolution can silently restore coupling

Even when service boundaries look clean on diagrams, API evolution can gradually recreate the same tightness that migration was supposed to remove. Microservice API evolution usually relies on backward compatibility, versioning, and close collaboration between teams. At the same time, persistent problems remain in change-impact analysis, communication of interface changes, and consumer reliance on outdated versions. Those issues produce design degradation over time.

For phased migration, this means extraction alone is insufficient. Every new service needs an explicit versioning policy, deprecation windows, consumer mapping, and structured feedback between producers and consumers. Without that governance, distributed systems simply shift coupling into a larger, less transparent network of contracts.
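Consumer mapping and deprecation windows become enforceable once they are recorded as data. The sketch below assumes a 90-day window and uses made-up version dates and consumer names purely for illustration. None of these values come from the source.

```python
from datetime import date, timedelta

# Hypothetical policy: consumers get 90 days after a version is deprecated.
DEPRECATION_WINDOW = timedelta(days=90)

# Hypothetical registry: version -> date it was declared deprecated (None = current).
VERSIONS = {"v1": date(2024, 1, 15), "v2": None}

# Hypothetical consumer mapping: consumer -> API version it still calls.
CONSUMERS = {"checkout-ui": "v1", "reporting": "v2", "partner-gateway": "v1"}


def overdue_consumers(versions, consumers, today, window=DEPRECATION_WINDOW):
    """Return consumers still on a version whose deprecation window has expired."""
    overdue = []
    for consumer, version in sorted(consumers.items()):
        deprecated_on = versions.get(version)
        if deprecated_on is not None and today > deprecated_on + window:
            overdue.append(consumer)
    return overdue


if __name__ == "__main__":
    for name in overdue_consumers(VERSIONS, CONSUMERS, today=date(2024, 6, 1)):
        print(f"{name} is still on a version past its deprecation window")
```

A report like this gives producers and consumers a shared, dated view of who depends on what, which is the feedback loop that keeps outdated versions from accumulating unnoticed.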

Team structure shapes the technical outcome

Microservices are frequently described in technical terms, yet the evidence base repeatedly returns to ownership, delivery coordination, quality assurance, and long-term evolvability. Organizations often struggle with decentralized governance, runtime accountability, and the cost of preserving architectural flexibility over time. These problems matter because many transformations break down organizationally before they fail technically.

A phased methodology needs governance checkpoints. Each module requires a visible owner. Interface changes need an approval path. Incident handling must cross team boundaries without ambiguity. Architectural drift needs regular detection. Migration success is closely tied to organizational preparedness.

Tools help, judgment still decides

Automation in migration has become more capable. Static, dynamic, hybrid, and artifact-driven techniques can all support service identification and decomposition analysis. Ontology-based mappings help organize microservice identification choices and inputs more systematically. Problems remain unresolved in behavioral equivalence, decomposition quality assessment, and database partitioning. Tools reduce blindness, but they do not remove ambiguity.

That limitation matters because migration always involves architectural decisions that remain matters of judgment. Teams still have to determine where business boundaries truly hold, which dependencies are acceptable during transition, and which inconsistencies the business is prepared to tolerate. The strongest methodology treats automation as support for architectural reasoning.

A disciplined end state is better than an ideological one

The most reliable methodology for refactoring monolithic server systems is conservative in the productive sense of that word. It exposes the monolith’s real structure before splitting it. It repairs internal boundaries before moving them across the network. It extracts only those domains that can justify the operational cost of independence. It handles data redesign as a primary architectural track. It installs interface governance before service sprawl becomes normal. It accepts that, in some organizations, the best end state is a strong modular monolith.

That position is supported quite consistently across current work on migration. Stepwise migration, API evolution, readiness assessment, and modular monolith design all point in the same direction: durable modernization depends less on maximizing the number of services and more on building a system that changes predictably under business pressure.