AI in healthcare sounds like the future, and in many ways it is. But like anything new and powerful, it’s not all smooth sailing. There’s a lot of talk about how AI can help doctors and patients, but it’s just as important to look at the other side of the coin. AI in healthcare has definite downsides that we need to be aware of. The point isn’t to stop progress; it’s to make sure we adopt AI smartly and safely rather than jumping in headfirst without thinking about the potential problems. Let’s break down some of the main concerns.

Key Takeaways

- AI can sometimes be unfair, making health problems worse for certain groups of people because the data it learned from wasn’t balanced.

- When computers make decisions, it’s hard to know who’s responsible if something goes wrong, especially when we don’t fully understand how the AI arrived at its conclusion.

- Getting AI systems set up and keeping them running costs a lot of money, and not all hospitals or clinics can afford it, creating a gap in who gets the benefits.

- There’s a worry that doctors might start relying too much on AI, potentially losing some of their own thinking skills or the ability to connect with patients on a human level.

- Keeping patient information safe is a big challenge, as AI systems need lots of data, making them targets for hackers and increasing the risk of private details getting out.

Ethical and Societal Implications of AI in Healthcare

Algorithmic Bias and Health Disparities

It’s a bit worrying, isn’t it, how AI can sometimes end up making things worse for certain groups of people? This happens because the data used to train these systems might not represent everyone equally. If the data is skewed, perhaps with too many records from one demographic and not enough from others, the AI can learn to make decisions that favour the overrepresented group. This can lead to some patients getting less accurate diagnoses or treatments simply because they don’t fit the mould of the majority in the training data. It’s like trying to teach a computer about the whole world using only a small, incomplete map.

- Underrepresentation: Certain ethnic groups or genders might have fewer data points, leading the AI to perform poorly for them.

- Historical Bias: Data can reflect past societal inequalities, which the AI then picks up and perpetuates.

- Feature Selection: The choice of input variables can inadvertently introduce bias, for example when a proxy such as past healthcare spending is used to stand in for medical need.

The risk here is that AI, instead of levelling the playing field, could actually widen existing gaps in healthcare access and outcomes, creating new forms of inequality.
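One practical way to spot the kind of bias described above is to measure a model’s performance separately for each group rather than only in aggregate. The sketch below is a minimal illustration, not a full fairness audit: the function name `sensitivity_by_group`, the group labels, and the records are all made up for the example, and sensitivity (the share of real cases the model catches) is just one of several metrics you might compare.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Return the true-positive rate (sensitivity) for each group.

    Each record is a (group, actual, predicted) tuple, where `actual` and
    `predicted` are booleans and True means the condition is present.
    """
    true_pos = defaultdict(int)
    actual_pos = defaultdict(int)
    for group, actual, predicted in records:
        if actual:
            actual_pos[group] += 1
            if predicted:
                true_pos[group] += 1
    # Only groups with at least one real case appear in the result.
    return {g: true_pos[g] / actual_pos[g] for g in actual_pos}


# Hypothetical, hand-made records for illustration only (not real patient data).
records = [
    ("group_a", True, True), ("group_a", True, True),
    ("group_a", True, True), ("group_a", True, False),
    ("group_b", True, True), ("group_b", True, False),
    ("group_b", True, False), ("group_b", True, False),
    ("group_a", False, False), ("group_b", False, False),
]

rates = sensitivity_by_group(records)
gap = max(rates.values()) - min(rates.values())
print(rates, gap)
```

A large gap between groups doesn’t prove the model is unfair on its own, but it is a signal that the training data or the features deserve a closer look before the tool is used on patients.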

Erosion of Human Empathy and Patient Experience

When we go to the doctor, we’re not just looking for a diagnosis; we want to feel heard and understood. There’s a real concern that as AI takes on more roles, the human touch might get lost. Imagine a system that just spits out probabilities or treatment plans without any real consideration for how a patient is feeling or their personal circumstances. It could make healthcare feel very cold and impersonal. This shift could fundamentally change the patient-doctor relationship, making it less about care and more about data processing.

Job Displacement and Workforce Anxiety

Lots of people are worried about AI taking their jobs, and it’s understandable. In healthcare, roles that involve repetitive tasks or data analysis might be the first to be affected. While some argue AI will create new jobs or change existing ones, the transition period can be tough. There’s a lot of uncertainty about what the future workforce will look like and whether people will have the right skills. This anxiety can affect morale and make it harder to adopt new technologies when staff are worried about their livelihoods.

Data Governance and Security Challenges

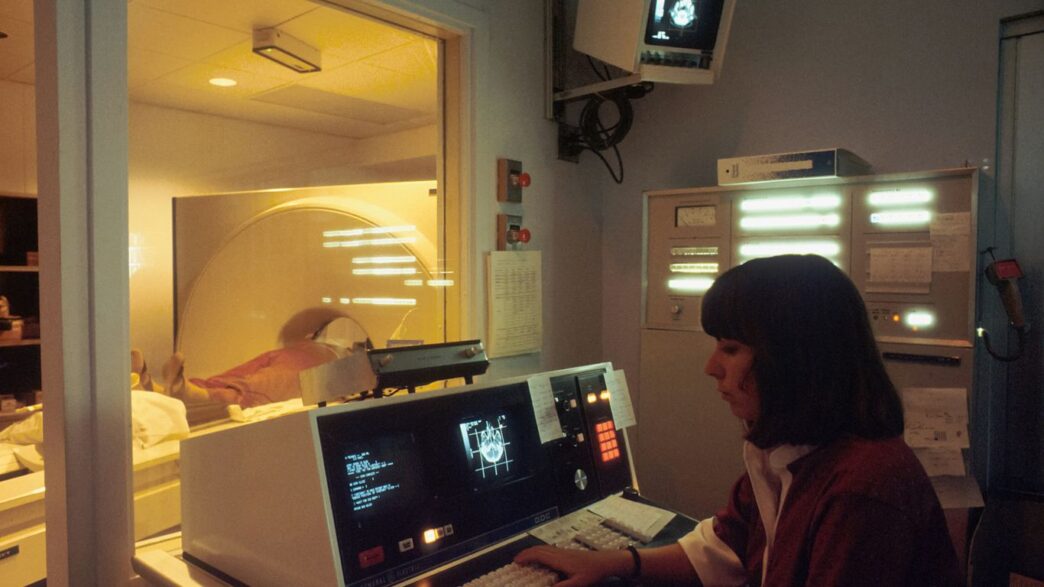

When we talk about AI in healthcare, one of the biggest headaches is how we handle all the data. AI systems, especially the more sophisticated ones, need mountains of information to learn and get better. This means we’re collecting and storing more sensitive patient details than ever before, and data stores of that size are a prime target for cybercriminals.

Vulnerability to Cyberattacks and Data Breaches

Think about it: medical records are incredibly personal and valuable. It’s no surprise that hackers are always looking for ways to get their hands on them. A data breach in healthcare isn’t just about stolen credit card numbers; it can expose intimate health details, leading to all sorts of problems for patients, from identity theft to discrimination. AI systems, with their vast data stores, become even more attractive targets. We’ve seen instances where patient data has been shared without explicit consent, sometimes even when people didn’t realise their information was being used for AI development. It’s a tricky balance, as we need data to improve AI, but we also have to protect patient privacy rigorously.

Complexities in Data Access and Sharing

Getting the right data to train AI is another hurdle. Healthcare data is often scattered across different hospitals, clinics, and IT systems, making it fragmented and difficult to access. Even within a single organisation, there can be resistance to sharing data, especially when it comes to sensitive patient information. This reluctance is understandable, but it can slow down AI development and limit its potential. Ideally, AI systems would keep learning as more data becomes available, but organisational barriers can make this ongoing improvement difficult. While cloud computing offers some solutions for storing large datasets, the fundamental issue of accessing and sharing this information remains a significant challenge.

Ensuring Patient Privacy in Large Datasets

Protecting patient privacy when dealing with massive datasets is a constant concern. Regulations like GDPR in Europe and similar laws elsewhere aim to provide a framework for how personal data can be collected, used, and shared. However, these regulations, while necessary, can sometimes make it harder to collaborate and share data across different countries or institutions, potentially limiting the data available for training AI. We need robust security measures, like better encryption and methods such as federated learning that allow AI models to learn without directly accessing raw patient data, to keep innovation moving forward without compromising privacy.
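To make the idea of “learning without directly accessing raw patient data” a little more concrete, here is a toy sketch of federated averaging, one technique in that family. Everything in it is an assumption for illustration: the function names `local_update` and `federated_average` are hypothetical, the “model” is just a single number (a mean) standing in for trained weights, and the values are invented. Real deployments typically add further protections, since model updates themselves can leak information.

```python
def local_update(values):
    """Run at each site: summarise local data without exporting it.

    The 'model' here is only a mean, standing in for trained weights.
    """
    return {"weight": sum(values) / len(values), "n": len(values)}


def federated_average(updates):
    """Run at the coordinator: combine site summaries, weighted by sample count."""
    total = sum(u["n"] for u in updates)
    return sum(u["weight"] * u["n"] for u in updates) / total


# Hypothetical measurements; each list never leaves its own hospital.
site_a = [4.0, 6.0]
site_b = [6.0, 8.0, 7.0, 7.0]

# Only the small summaries travel to the coordinator.
updates = [local_update(site_a), local_update(site_b)]
global_weight = federated_average(updates)
print(global_weight)
```

In this simple case the weighted average matches what you would get by pooling all the records in one place, yet the coordinator only ever sees one number and a count from each hospital.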

The sheer amount of data required for AI in healthcare presents a double-edged sword. While it fuels innovation and improved diagnostics, it simultaneously creates significant vulnerabilities. Safeguarding this sensitive information against breaches and misuse, while also facilitating necessary access for research and development, requires a careful and evolving approach to data governance and security protocols.

Accountability and Transparency in AI Decision-Making

When AI starts making decisions that affect patient care, figuring out who’s responsible when things go wrong becomes a real headache. It’s not like a human doctor you can have a chat with; AI can be a bit of a mystery box.

The ‘Black Box’ Problem and Unclear Responsibility

Lots of AI systems, especially the complex ones, work in ways that are hard for even their creators to fully explain. This is often called the ‘black box’ problem. We see the input and the output, but the steps in between are murky. In healthcare, this is a big issue. If an AI suggests a treatment that turns out to be wrong, or misses a diagnosis, who takes the blame? Is it the doctor who followed the AI’s advice, the company that made the AI, or the hospital that implemented it? It’s not always clear, and this lack of clarity can make it tough to learn from mistakes and improve patient safety.

Ensuring Documentation Accuracy with AI Scribes

AI is being used more and more to help with medical notes, acting like a super-fast scribe. This can save doctors a lot of time, letting them focus more on patients. However, these AI scribes aren’t perfect. They can sometimes misunderstand what’s being said, invent details (hallucinate), or miss important information. It’s vital that clinicians carefully review and verify everything an AI scribe writes down. If the documentation isn’t accurate, it can lead to all sorts of problems down the line, from incorrect billing to flawed treatment plans based on bad records.

Establishing Accountability for AI-Related Errors

So, how do we actually put accountability in place? It’s a tricky puzzle. We need systems that can track how AI is used and what decisions are made. This means having clear policies and procedures.

Here are a few things that could help:

- Clear Policies: Hospitals and clinics need written rules about how AI tools should be used, checked, and updated. These should cover who is responsible for what.

- Audit Trails: Keeping records of each AI suggestion alongside the final decision made by a human. This helps show the thought process and where any errors might have crept in.

- Staff Training: Making sure doctors and nurses understand the AI tools they’re using, including their limitations, and know how to spot potential mistakes.
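The audit trail idea from the list above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a production design: the `AuditEntry` fields, the `record_decision` helper, the model version string, and the clinician identifier are all hypothetical, and a real system would write to tamper-evident storage rather than an in-memory list.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEntry:
    timestamp: str        # when the decision was recorded (UTC, ISO 8601)
    model_version: str    # which version of the AI tool made the suggestion
    ai_suggestion: str    # what the AI proposed
    clinician_id: str     # who made the final call
    final_decision: str   # what was actually decided
    overridden: bool      # True if the clinician departed from the suggestion


def record_decision(log, model_version, ai_suggestion, clinician_id, final_decision):
    """Append one JSON-encoded audit entry to `log` and return the entry."""
    entry = AuditEntry(
        timestamp=datetime.now(timezone.utc).isoformat(),
        model_version=model_version,
        ai_suggestion=ai_suggestion,
        clinician_id=clinician_id,
        final_decision=final_decision,
        overridden=(ai_suggestion != final_decision),
    )
    log.append(json.dumps(asdict(entry)))
    return entry


# Hypothetical example values.
audit_log = []
entry = record_decision(
    audit_log,
    model_version="triage-model-1.2",
    ai_suggestion="refer to cardiology",
    clinician_id="clinician-017",
    final_decision="order ECG first",
)
print(audit_log[0])
```

Recording the model version alongside each suggestion matters: if an error surfaces later, it lets reviewers tell whether the tool, the version in use, or the human decision was the weak point.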

The challenge isn’t just about finding fault when something goes wrong. It’s about building trust. Patients need to feel confident that AI is being used safely and responsibly in their care. Without clear lines of responsibility and a transparent process for handling errors, that trust can easily be broken.

Implementation Hurdles and Financial Considerations

Getting AI into hospitals and clinics isn’t as simple as just buying the software. There are some pretty big roadblocks, both in terms of how much it costs and how it actually fits into the day-to-day running of things.

High Costs of AI Adoption and Maintenance

Let’s be honest, AI isn’t cheap. The initial outlay for sophisticated AI systems can be enormous, and that’s just the start. You’ve then got ongoing costs for keeping the systems updated, paying for the specialised staff needed to manage them, and the sheer amount of computing power required. For smaller practices or hospitals with tighter budgets, this can be a real stretch.

- Initial purchase and licensing fees.

- Hardware upgrades to support AI processing.

- Ongoing software maintenance and updates.

- Training for staff to use and manage the new systems.

- Energy consumption for running powerful servers.

The price tag for cutting-edge AI can be a significant barrier, especially for organisations that aren’t flush with cash.

Barriers to Integration with Existing Health IT

Most healthcare places already have a whole bunch of computer systems in place – electronic health records, scheduling software, you name it. Getting new AI tools to talk nicely with these older systems can be a massive headache. They often don’t speak the same ‘language’, leading to clunky workarounds or, worse, systems that just don’t work together properly. This lack of smooth integration can slow down doctors and nurses, which is the last thing anyone wants.

Trying to force new AI technology into old IT infrastructure can feel like trying to fit a square peg into a round hole. It often requires customisation that adds to the cost and complexity, and sometimes, it just doesn’t work as intended, leading to frustration and inefficiency.

Disparities in Access for Healthcare Providers

Because of the high costs and technical challenges, there’s a real risk that only the biggest, wealthiest healthcare organisations will be able to afford and implement advanced AI. This could create a two-tier system, where patients at well-funded hospitals get the benefits of AI-driven care, while those at smaller or less well-off facilities are left behind. It’s a bit like the digital divide, but for healthcare technology.

Regulatory and Validation Obstacles

Getting AI tools approved and making sure they actually work as intended is proving to be a bit of a headache. The rules just haven’t caught up with the technology yet, and that’s causing all sorts of problems.

Lack of Standardised Regulatory Guidelines

Right now, there aren’t many clear, agreed-upon rules for how AI should be checked and approved in healthcare. Different countries and even different bodies within the same country are still figuring this out. This makes it hard for developers to know what they need to do to get their products out there, and it means patients might be exposed to tools that haven’t been properly vetted. It’s a bit of a free-for-all, and that’s not ideal when people’s health is on the line.

Challenges with Self-Learning Algorithm Oversight

One of the really clever things about some AI is that it can learn and adapt as it goes. But this is also a big regulatory hurdle. If an AI system is constantly changing based on new data, how do you keep track of its safety and effectiveness? Current approval processes often require a ‘frozen’ version of the AI to be validated. This means that if the AI learns something new and important after it’s been approved, it might need to go through the whole validation process again before it can use that new knowledge. This can slow down progress and make it difficult to get the most out of these dynamic systems.
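One simple way to picture what a ‘frozen’ version means in practice is a fingerprint of the model’s parameters taken at validation time. The sketch below is only an illustration of that idea, not a description of any regulator’s actual process: the `fingerprint` and `is_validated_version` names and the parameter values are hypothetical, and real models would be hashed from their serialised files rather than a small dictionary.

```python
import hashlib
import json


def fingerprint(parameters):
    """Return a SHA-256 digest of a model's parameters.

    Keys are sorted so the same parameters always give the same digest.
    """
    canonical = json.dumps(parameters, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()


# Hypothetical parameters of the version that went through validation.
validated_params = {"bias": -1.5, "weights": [0.2, 0.8, 0.05]}
approved_fingerprint = fingerprint(validated_params)


def is_validated_version(parameters):
    """Check whether the running model is exactly the validated one."""
    return fingerprint(parameters) == approved_fingerprint


# After further learning, even a small change produces a different digest.
retrained_params = {"bias": -1.5, "weights": [0.2, 0.81, 0.05]}
print(is_validated_version(validated_params))
print(is_validated_version(retrained_params))
```

A check like this makes the regulatory tension visible: any learning after approval, however small, means the system in use is no longer the one that was validated.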

Need for Empirical Clinical Trial Data

Just like any new drug or medical device, AI tools need to be proven safe and effective through rigorous testing. This usually means clinical trials. However, designing and running these trials for AI is complex. We need to figure out how to collect the right kind of data, how to measure the AI’s performance accurately, and how to compare it fairly against existing methods. The sheer volume and complexity of data required for AI validation often outstrip traditional trial methodologies. Without solid, real-world evidence from these trials, it’s hard for regulators to give the green light, and for doctors to trust the AI’s recommendations.

The pace of AI development is so fast that regulatory frameworks struggle to keep up. This gap creates uncertainty for developers and potential risks for patients. Establishing clear pathways for ongoing monitoring and re-validation of adaptive AI systems is a significant challenge that needs urgent attention.

Over-Reliance and Skill Degradation Concerns

It’s easy to get excited about AI in healthcare, thinking it’ll solve all our problems. But what happens when we start leaning on it a bit too much? There’s a real worry that doctors and nurses might start to lose some of their own sharp thinking skills. If the computer is always suggesting the next step, why bother thinking it through yourself? This could lead to a situation where, if the AI makes a mistake or isn’t available, the human staff are less prepared to handle things.

Diminished Critical Thinking in Clinicians

When AI tools become the go-to for diagnosis or treatment plans, there’s a risk that clinicians might stop questioning the outputs as rigorously. They might accept the AI’s suggestion without the deep thought process that used to be standard. This isn’t about blaming anyone; it’s just a natural human tendency to take the easier route. Over time, this can lead to a gradual dulling of the analytical skills that are so vital in medicine.

Potential for Reduced Human Judgment

Medical decisions often involve more than just data. There’s intuition, experience, and a gut feeling that comes from years of practice. If AI takes over too much of the decision-making, these human elements could be sidelined. The nuanced understanding of a patient’s situation, which often goes beyond what can be quantified, might be overlooked. This could mean that even if an AI’s recommendation is technically correct based on the data, it might not be the best course of action for that specific individual.

Managing Expectations and Public Scepticism

People are often told AI is going to be revolutionary, which sets a very high bar. When AI systems inevitably fall short or make errors, it can lead to disappointment and a loss of trust. This scepticism isn’t just from patients; healthcare professionals can also become wary if they’ve seen AI fail or if they feel it’s being pushed too hard without proper evidence of its benefits. It’s important to be realistic about what AI can do right now and to communicate that clearly.

A few practices can help keep both expectations and reliance in check:

- AI as a Tool, Not a Replacement: Emphasise that AI is there to assist, not to take over the core responsibilities of healthcare professionals.

- Continuous Training and Education: Ensure that staff continue to receive training in core clinical skills, even as AI tools become more common.

- Independent Verification: Encourage clinicians to independently verify AI-generated recommendations, especially for critical decisions.

- Open Dialogue: Maintain open conversations about the limitations and potential pitfalls of AI within healthcare settings.

The drive to adopt AI in healthcare is strong, but we must proceed with caution. The goal should be to augment human capabilities, not to replace the essential human element of care. If we become too dependent, we risk losing the very skills that make healthcare professionals so effective.

Looking Ahead: Balancing AI’s Promise with Its Pitfalls

So, where does this leave us with AI in healthcare? It’s clear that this technology isn’t a magic bullet. While it promises to make things more efficient and perhaps even more accurate in some areas, we can’t just ignore the downsides. We’ve talked about the real worries concerning patient data security, the risk of bias creeping into diagnoses, and the sheer cost of getting these systems up and running. Plus, there’s the human element – the need for empathy and the worry that we might rely too much on machines, forgetting our own judgment. It’s not about stopping AI, but about being smart about it. We need to keep a close eye on how it’s used, make sure it’s fair, and remember that technology should support, not replace, the vital human connection in healthcare. It’s a balancing act, and getting it right will take careful thought and ongoing effort from everyone involved.

Frequently Asked Questions

Can AI make healthcare unfair?

Yes, AI can sometimes be unfair. If the information used to train AI isn’t balanced, it might make worse decisions for certain groups of people, such as those whose backgrounds or ethnicities are underrepresented in the data. This could lead to some people not getting the best care.

Will AI replace doctors and nurses?

It’s unlikely that AI will completely replace doctors and nurses. AI can help with tasks like analysing scans or managing records, freeing up healthcare professionals to spend more time with patients. However, the human touch, empathy, and complex decision-making skills of medical staff are still very important, and they aren’t something today’s AI can replicate.

Is my health information safe with AI?

Keeping your health information safe is a big concern. AI systems need lots of data, which can be a target for hackers. If these systems aren’t properly protected, your private medical details could be exposed or misused. Strong security measures are crucial.

Who is responsible if an AI makes a mistake?

Figuring out who’s to blame when an AI makes an error can be tricky. Because AI systems can be complex and their decision-making isn’t always easy to understand (like a ‘black box’), it’s hard to pinpoint responsibility. This is an area that needs clearer rules and oversight.

Is AI in healthcare very expensive?

Bringing AI into hospitals and clinics can cost a lot of money. Not only is the technology itself pricey, but it also needs to be maintained and updated. This high cost can make it harder for smaller clinics or hospitals in less wealthy areas to use AI, potentially creating a gap in care.

Do we have enough proof that AI actually works well in medicine?

While AI shows promise, there’s a need for more solid proof from real-world medical studies. Many AI tools have so far been tested mainly in lab settings or for commercial purposes, but we need more evidence showing they truly improve patient outcomes in everyday healthcare. This lack of proof can slow down adoption.