Artificial intelligence is changing how we do things, and it’s important to know how to manage it well. The IAPP has a lot of good information to help people get a handle on AI governance. Whether you’re just starting out or looking to get more advanced, they’ve got resources that break down the complex stuff into more manageable pieces. This guide will walk you through some of the key areas you’ll want to focus on.

Key Takeaways

- The IAPP offers resources to help understand AI basics, like what AI and machine learning really are, and the different kinds of AI systems out there.

- It’s important to know the risks that come with AI and what makes an AI system trustworthy and ethical.

- The IAPP provides guidance on how to manage AI projects from start to finish, including testing and what to do after the system is in place.

- You can find practical advice on setting up an AI governance program and using GRC strategies to make it work well.

- The IAPP covers major AI frameworks, global laws, and how to connect current regulations like GDPR and the EU AI Act to your AI governance efforts.

Understanding the Foundations of AI Governance

Before we can really get a handle on AI governance, we need to know what we’re talking about. It’s not just about the fancy algorithms; it’s about how these systems work and what they actually do.

Defining Artificial Intelligence and Machine Learning

Think of Artificial Intelligence (AI) as the big idea – making machines that can do things that usually require human smarts, like learning, problem-solving, or making decisions. Machine Learning (ML) is a big part of AI. It’s how we teach computers to learn from data without being programmed for every single step. Instead of writing explicit instructions for every possible scenario, we give the ML model a bunch of data and let it figure out the patterns itself. This ability to learn and adapt is what makes AI so powerful, but also why governance is so important.
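To make "learning from data" concrete, here is a toy sketch in Python. It is not how production ML systems are built. The program is never given the rule behind the numbers; it estimates a slope and intercept from example pairs using ordinary least squares. The function name `fit_line` and the sample data are made up for illustration.

```python
def fit_line(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Return (slope, intercept) that minimize squared error over the examples."""
    n = len(xs)
    if n < 2 or n != len(ys):
        raise ValueError("need at least two paired examples")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Ordinary least squares: slope = covariance(x, y) / variance(x)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    if var == 0:
        raise ValueError("x values must not all be equal")
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Examples generated by a pattern the program is never told about: y = 2x + 1
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
slope, intercept = fit_line(xs, ys)
prediction = slope * 10 + intercept  # prediction for an input it never saw
```

With only five examples, the fitted model recovers the hidden pattern (slope 2, intercept 1) and predicts 21 for an input of 10. Real models learn far more parameters from far messier data, which is why their behavior needs checking.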

Exploring AI System Types and Use Cases

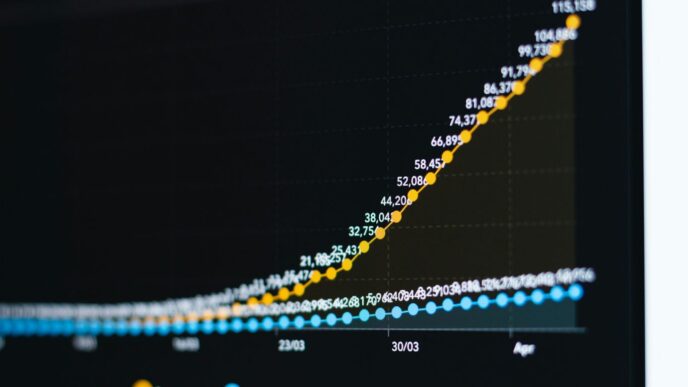

AI isn’t just one thing. We’ve got different types, and they pop up everywhere. You’ve got systems that are good at specific tasks, like recognizing faces in photos or recommending what movie to watch next. Then there are general-purpose systems, such as large language models, that can handle a much wider range of tasks. The ways we use them are growing fast. Think about customer service chatbots, tools that help doctors diagnose illnesses, or systems that manage traffic flow in cities. Each use case comes with its own set of considerations.

AI Models in Socio-Cultural Context

It’s easy to get caught up in the technical side, but AI doesn’t exist in a vacuum. The data we feed into AI models often reflects existing societal biases, whether we mean for it to or not. This means AI systems can sometimes perpetuate or even amplify unfairness. For example, a hiring tool trained on historical data might unfairly favor certain groups if past hiring practices were biased. Understanding this connection between AI and society is key to building systems that are fair and beneficial for everyone. We need to think about how these tools impact people and communities.

Ethical AI and Responsible Development Principles

When we talk about AI, it’s not just about making cool tech. We also have to think about the right way to build and use it. This means looking at the potential problems AI can cause and figuring out how to avoid them. It’s about making sure AI systems are fair, safe, and don’t cause harm.

Identifying Core Risks and Harms of AI Systems

AI isn’t perfect, and sometimes it can go wrong in ways that really affect people. Think about bias, for example. If the data used to train an AI is skewed, the AI can end up making unfair decisions, like discriminating against certain groups in hiring or loan applications. Then there’s the issue of privacy. AI systems often need a lot of data, and how that data is collected and used is a big concern. We also need to worry about AI making mistakes that could have serious consequences, especially in areas like healthcare or self-driving cars. And let’s not forget about security – AI systems can be targets for attacks, leading to data breaches or misuse.

Characteristics of Trustworthy AI

So, what makes an AI system something we can rely on? Well, a few things come to mind. First, it needs to be fair. That means it shouldn’t treat different people or groups unfairly. Second, it should be reliable and safe. We want AI that works as expected and doesn’t cause accidents or errors. Transparency is also key; we should be able to understand, at least to some degree, how an AI makes its decisions. Accountability matters too – if something goes wrong, we need to know who is responsible. Finally, AI should respect privacy and be secure, protecting the data it handles.

Essential Principles for Ethical AI Use

To make sure we’re using AI the right way, there are some guiding principles we should all follow. These aren’t just nice ideas; they’re practical steps to keep AI development on the right track.

- Fairness: Design AI systems to avoid bias and discrimination. Regularly check for and correct any unfair outcomes.

- Transparency and Explainability: Make AI decision-making processes as clear as possible. Document how systems work and why they make certain choices.

- Accountability: Establish clear lines of responsibility for AI systems. Have mechanisms in place to address issues when they arise.

- Privacy and Security: Protect user data rigorously. Implement strong security measures to prevent unauthorized access or misuse.

- Human Oversight: Keep humans in the loop, especially for critical decisions. AI should assist, not replace, human judgment in sensitive areas.
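Human oversight can be built into the decision path itself. The Python sketch below routes a model’s recommendation to a person whenever the decision falls in a sensitive category or the model’s confidence is low. The categories, the 0.90 threshold, and the `ModelDecision` and `route` names are hypothetical; each organization would set its own.

```python
from dataclasses import dataclass

# Hypothetical policy values; an organization would choose its own.
CONFIDENCE_THRESHOLD = 0.90
HIGH_IMPACT_CATEGORIES = {"hiring", "credit", "medical"}


@dataclass
class ModelDecision:
    category: str      # business domain of the decision
    outcome: str       # what the model recommends
    confidence: float  # model's score for that outcome, 0.0 to 1.0


def route(decision: ModelDecision) -> str:
    """Return 'human_review' or 'auto' for a model recommendation."""
    if decision.category in HIGH_IMPACT_CATEGORIES:
        return "human_review"  # sensitive area: the AI assists, a person decides
    if decision.confidence < CONFIDENCE_THRESHOLD:
        return "human_review"  # the model is unsure, so escalate
    return "auto"
```

For example, `route(ModelDecision("hiring", "advance", 0.99))` returns `"human_review"` regardless of confidence, while a 0.95-confidence marketing recommendation returns `"auto"`.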

Navigating the AI Development Lifecycle

Building AI systems isn’t just about writing code and training models; it’s a whole process that needs careful thought from start to finish. Think of it like building a house – you wouldn’t just start hammering nails without a plan, right? The same goes for AI. You’ve got to map out what you’re trying to achieve, build it carefully, and then keep an eye on it after it’s "finished."

Mapping and Scoping AI Projects

Before you even think about data or algorithms, you need to get clear on the project’s goals. What problem are you trying to solve with AI? Who will use it, and how? It’s about defining the boundaries and making sure everyone involved is on the same page. This stage involves:

- Defining clear objectives: What does success look like for this AI project?

- Identifying stakeholders: Who needs to be involved or informed?

- Assessing data requirements: What data do you need, and is it available and suitable?

- Considering potential impacts: What could go wrong, and how might it affect people or the business?

Getting this part right sets a solid foundation. A good way to start is by using an AI project intake workflow checklist to guide your initial assessments.
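An intake checklist can be as simple as a set of required questions checked before a project moves forward. This Python sketch assumes a hypothetical intake form stored as a dictionary, with one field per scoping question listed above; the field names are illustrative, not an IAPP template.

```python
# Hypothetical intake fields mirroring the four scoping questions.
REQUIRED_FIELDS = {
    "objective": "What does success look like?",
    "stakeholders": "Who needs to be involved or informed?",
    "data_sources": "What data is needed, and is it suitable?",
    "potential_impacts": "What could go wrong, and for whom?",
}


def missing_intake_items(intake: dict) -> list[str]:
    """Return the checklist questions the intake form leaves unanswered."""
    missing = []
    for field_name, question in REQUIRED_FIELDS.items():
        value = intake.get(field_name)
        if isinstance(value, str):
            value = value.strip()
        if not value:  # absent, None, blank string, or empty list
            missing.append(question)
    return missing


intake = {
    "objective": "Cut support ticket triage time by 30%",
    "stakeholders": ["Customer Ops", "Legal", "Privacy"],
    "data_sources": [],
}
gaps = missing_intake_items(intake)
```

Here `gaps` lists the data and impact questions, so the project would go back to its sponsors before any model work starts.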

Testing and Validating AI Systems

Once you’ve got a prototype or a working model, the real testing begins. This isn’t just about checking if the code runs; it’s about seeing if the AI behaves as expected in different situations. You need to test for accuracy, fairness, and robustness. Does it make biased decisions? Can it be easily tricked? This phase often involves:

- Performance testing: How well does it perform against benchmarks?

- Bias detection: Are there unfair outcomes for certain groups?

- Security testing: Can the system be compromised?

- User acceptance testing: Do the intended users find it effective and easy to use?

This stage is critical for catching issues before they cause real-world problems.
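One common screening check for bias is the "four-fifths rule" from US employment-selection guidance: if a group’s selection rate is less than 80% of the highest group’s rate, that is treated as a sign of possible adverse impact worth investigating. The Python sketch below computes those ratios from (group, outcome) pairs. The function names and data are hypothetical, and a ratio check is a starting point for investigation rather than a complete fairness assessment.

```python
from collections import defaultdict


def selection_rates(outcomes: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of positive outcomes (e.g. 'advance to interview') per group."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in outcomes:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {g: selected[g] / totals[g] for g in totals}


def adverse_impact_flags(outcomes: list[tuple[str, bool]],
                         threshold: float = 0.8) -> dict[str, float]:
    """Groups whose selection rate is below `threshold` times the highest rate."""
    rates = selection_rates(outcomes)
    if not rates:
        return {}
    best = max(rates.values())
    if best == 0:
        return {}  # nobody was selected, so ratios are undefined
    return {g: r / best for g, r in rates.items() if r / best < threshold}


# Hypothetical test results: group A selected 5 of 10, group B selected 3 of 10.
outcomes = ([("A", True)] * 5 + [("A", False)] * 5
            + [("B", True)] * 3 + [("B", False)] * 7)
flags = adverse_impact_flags(outcomes)
```

Group B is selected at 30% versus 50% for group A, a ratio of 0.6, so it is flagged for review.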

Managing AI Systems Post-Deployment

The work doesn’t stop once the AI is out in the wild. A deployed model’s performance can degrade over time as the real-world data it sees drifts away from the data it was trained on, a phenomenon known as model drift. Continuous monitoring is key. You need to keep track of performance, check for unexpected behavior, and have a plan for updates or even decommissioning if necessary. This includes:

- Performance monitoring: Regularly checking accuracy and other key metrics.

- Drift detection: Identifying when incoming data or model performance shifts away from the baseline established at deployment.

- Feedback loops: Collecting user feedback to identify areas for improvement.

- Retraining and updating: Periodically updating the model with new data or improved algorithms.
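Drift detection often starts by comparing the distribution of a model input in production with the distribution seen at training time. The Python sketch below computes the population stability index (PSI), one widely used measure for this. The equal-width binning, the `eps` floor, and the function name are choices made for illustration. A common rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, but thresholds should be set per use case.

```python
import math


def population_stability_index(expected: list[float], actual: list[float],
                               bins: int = 10, eps: float = 1e-6) -> float:
    """PSI between a baseline sample and a production sample of one numeric feature."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Bin edges come from the baseline; out-of-range values land in the end bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor each share at eps so the log below is always defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    # PSI = sum over bins of (actual% - expected%) * ln(actual% / expected%)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]       # training-time values 0.00 to 0.99
shifted = [0.5 + i / 200 for i in range(100)]  # production values 0.50 to 0.995
psi_same = population_stability_index(baseline, baseline)
psi_shifted = population_stability_index(baseline, shifted)
```

Comparing the baseline with itself gives 0, while the shifted sample, which piles into the upper half of the range, scores far above 0.25 and would trigger a review.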

Implementing responsible AI governance best practices throughout this lifecycle helps manage risks and build trust in your AI initiatives.

Implementing Robust AI Governance Strategies

Setting up a solid plan for AI governance isn’t just a good idea; it’s becoming a necessity. This section is all about putting the basic structure in place: what AI you have, who is responsible for it, what could go wrong, and what rules apply.

Practical Steps for Establishing AI Governance

Getting started with AI governance can feel like a big task, but breaking it down makes it manageable. Here are some initial steps to consider:

- Inventory Your AI Systems: First, you need to know what AI you’re actually using or planning to use. This means cataloging all AI tools, models, and data sources across the organization. Don’t forget about third-party tools that might be integrated.

- Define Roles and Responsibilities: Who’s in charge of what? Clearly assign ownership for AI governance. This could involve setting up an AI governance committee or designating specific individuals within different departments.

- Assess Current Risks: Take a good look at the potential problems your AI systems could cause. This includes things like bias in decision-making, data privacy issues, security vulnerabilities, and compliance gaps. Understanding these risks is key to managing them.

- Develop Policies and Guidelines: Based on your risk assessment, create clear rules for how AI should be developed, deployed, and used. These policies should cover ethical considerations, data handling, transparency, and accountability.
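An inventory is easier to act on when each entry records the basics from the steps above: what the system does, who owns it, what data it uses, and whether its risks have been assessed. This Python sketch uses a hypothetical `AISystemRecord` structure and reports which systems still have gaps; the fields are illustrative, not a prescribed schema.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AISystemRecord:
    name: str
    purpose: str
    vendor: Optional[str] = None  # None for systems built in-house
    owner: Optional[str] = None   # accountable person or team
    data_sources: list[str] = field(default_factory=list)
    risk_assessed: bool = False


def governance_gaps(inventory: list[AISystemRecord]) -> dict[str, list[str]]:
    """Map each system name to the first-step items it is still missing."""
    gaps = {}
    for rec in inventory:
        missing = []
        if not rec.owner:
            missing.append("owner")
        if not rec.data_sources:
            missing.append("data_sources")
        if not rec.risk_assessed:
            missing.append("risk_assessment")
        if missing:
            gaps[rec.name] = missing
    return gaps


inventory = [
    AISystemRecord("support-chatbot", "Answer customer FAQs", vendor="third-party",
                   owner="Customer Ops", data_sources=["help-center articles"],
                   risk_assessed=True),
    AISystemRecord("resume-screener", "Rank job applicants", vendor="third-party"),
]
open_items = governance_gaps(inventory)
```

The third-party resume screener shows up with no owner, no documented data sources, and no risk assessment, which is exactly the kind of blind spot an inventory is meant to surface.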

Developing an AI Governance Program

An AI governance program is more than just a set of rules; it’s an ongoing process. It needs to be integrated into how your organization operates. A good program will help you manage AI responsibly and adapt as the technology changes.

Here’s a look at what goes into building one:

- Cross-Functional Collaboration: AI touches many parts of a business – IT, legal, compliance, product development, and even marketing. Your governance program needs input and buy-in from all these areas. Think about setting up regular meetings or a dedicated AI governance team that includes representatives from each department.

- Lifecycle Management: AI systems aren’t static. They are developed, tested, deployed, and often updated. Your program should address governance at each stage of this lifecycle. This means having checks and balances in place from the initial design phase all the way through to retirement of the system.

- Continuous Monitoring and Improvement: The AI landscape is always shifting, and so are the regulations. Your governance program needs to be flexible. Regularly review your policies, assess the effectiveness of your controls, and update your program based on new risks, technologies, or legal requirements. This isn’t a ‘set it and forget it’ kind of thing.

GRC Strategies for Effective AI Governance

When we talk about Governance, Risk, and Compliance (GRC) in the context of AI, we’re looking at how these three areas work together to keep your AI initiatives on track and out of trouble. It’s about making sure you’re doing the right things, in the right way, and can prove it.

- Integrating AI into Existing GRC Frameworks: Many organizations already have GRC processes in place for other areas. The goal is to adapt these existing frameworks to include AI, rather than starting from scratch. This can make the process smoother and more efficient.

- Risk Assessment Tools and Methodologies: You’ll need specific ways to identify and measure the risks associated with AI. This might involve specialized risk assessment templates or software designed to evaluate AI models for bias, fairness, and security vulnerabilities. The key is to have a structured approach to understanding potential downsides.

- Compliance Monitoring and Reporting: Keeping up with AI regulations is a challenge. Your GRC strategy should include mechanisms for monitoring compliance with relevant laws and internal policies. This means having clear reporting lines and regular audits to confirm that AI systems are being used as intended and within legal boundaries.
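Many risk assessment templates score each risk by likelihood and impact and multiply the two. The Python sketch below uses a 1 to 5 scale for each and assumed cutoffs for the low, medium, and high bands. It is a generic risk-matrix approach, not an IAPP-specific methodology, and the example risks are made up.

```python
def risk_rating(likelihood: int, impact: int) -> tuple[int, str]:
    """Score a risk on a 1-5 x 1-5 matrix; the band cutoffs are assumptions."""
    if not (1 <= likelihood <= 5 and 1 <= impact <= 5):
        raise ValueError("likelihood and impact must be between 1 and 5")
    score = likelihood * impact
    if score >= 15:
        band = "high"
    elif score >= 8:
        band = "medium"
    else:
        band = "low"
    return score, band


# Hypothetical risks: (likelihood, impact)
risks = {
    "biased outcomes in applicant ranking": (3, 5),
    "training data includes unneeded personal data": (4, 3),
    "chatbot gives outdated product info": (3, 2),
}
# Risk register sorted so the highest scores are reviewed first.
register = sorted(
    ((name, *risk_rating(lik, imp)) for name, (lik, imp) in risks.items()),
    key=lambda row: row[1],
    reverse=True,
)
```

The resulting register puts the hiring-bias risk (score 15, high) at the top, followed by the personal-data risk (12, medium) and the chatbot risk (6, low).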

Key Frameworks and Standards for AI Governance

So, you’ve got your AI systems humming along, but how do you make sure they’re playing by the rules? That’s where frameworks and standards come in. Think of them as the instruction manuals and safety guidelines for your AI. Without them, things can get messy, fast.

The ISO 42001 Framework for Ethical AI

This is a big one. ISO/IEC 42001 is an international standard for AI management systems. It sets out requirements for establishing, maintaining, and continually improving a management system that handles AI risks and keeps your AI use responsible. It covers things like:

- Risk management: Identifying and dealing with potential problems your AI might cause.

- Transparency: Making sure people understand how the AI works and why it makes certain decisions.

- Continual improvement: Regularly reviewing and improving the management system so your AI use keeps getting better and safer.

It’s basically a roadmap for building trustworthy AI. Getting your organization certified to ISO/IEC 42001 shows you’re serious about ethical AI practices.

Global AI Governance Laws and Policies

This is where things get a bit more complicated because laws are changing all the time, and they differ from place to place. You’ve got different countries and regions coming up with their own rules for AI. For example, there are reports detailing AI governance laws and policies across places like Singapore, Canada, the UK, the US, and the EU. It’s a lot to keep track of, and staying updated is key. You can’t just assume what’s okay in one country is okay in another.

Leveraging IAPP Resources for AI Governance

This is where the International Association of Privacy Professionals (IAPP) really shines. They have a ton of resources that can help you figure all this out. They offer things like:

- eBooks and White Papers: These go deep into topics like why traditional risk frameworks don’t quite cut it for AI and how to actually build an AI governance program from the ground up. They even have guides on AI inventory essentials.

- Infographics and Reports: Great for getting a quick overview or understanding the bigger picture, like GRC strategies for AI governance or global regulatory landscapes.

- Webinars: You can catch on-demand sessions that discuss everything from embedding trust into AI development to specific laws like Colorado’s AI bill. They even host discussions with industry experts.

Basically, if you’re trying to get a handle on AI governance, the IAPP is a good place to start looking for practical advice and information. They’ve got materials that can help you get started, manage risks, and stay compliant in this fast-moving area.

Addressing Current AI Laws and Emerging Regulations

It feels like every week there’s a new law or regulation popping up about AI. It can be a real headache trying to keep track of it all, especially when you’re just trying to build and use AI responsibly. We’ve got existing laws that touch on AI, and then there are these brand-new, AI-specific ones that are really changing the game.

Existing Laws Governing AI Use

Before we even get to the AI-specific stuff, a lot of existing privacy and data protection laws already apply. Think about laws like the GDPR in Europe. It’s not an AI law, but it has a huge impact on how you collect, process, and store data used for AI training and deployment. You need to be mindful of consent, data minimization, and the rights of individuals when building AI systems. Other laws related to consumer protection, anti-discrimination, and even intellectual property can also come into play, depending on what your AI is doing.
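Data minimization can be enforced in code before any data reaches a training pipeline. The Python sketch below keeps only the fields approved for a given purpose and drops everything else. The `ALLOWED_FIELDS` allowlist, the purpose name, and the field names are hypothetical, and deciding what belongs on the list remains a privacy and legal judgment.

```python
# Hypothetical purpose-to-fields allowlist agreed with privacy and legal review.
ALLOWED_FIELDS = {
    "churn_model": {"tenure_months", "plan", "monthly_usage_gb", "support_tickets"},
}


def minimize(records: list[dict], purpose: str) -> list[dict]:
    """Keep only the fields approved for `purpose`; drop everything else."""
    allowed = ALLOWED_FIELDS[purpose]  # KeyError if the purpose was never approved
    return [{k: v for k, v in rec.items() if k in allowed} for rec in records]


raw = [{
    "name": "Ana", "email": "ana@example.com",
    "tenure_months": 14, "plan": "pro",
    "monthly_usage_gb": 3.2, "support_tickets": 1,
}]
training_rows = minimize(raw, "churn_model")
```

The name and email never reach the training set, and an unapproved purpose fails loudly instead of silently passing data through.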

Key GDPR Intersections with AI Laws

The General Data Protection Regulation (GDPR) is a big one. It sets strict rules for processing personal data. When AI systems use personal data, all those GDPR principles still apply. This means you need a lawful basis for processing, you have to be transparent about how data is used, and individuals have rights like the right to access and erasure. Automated decision-making gets special attention: Article 22 gives individuals the right not to be subject to decisions based solely on automated processing that produce legal or similarly significant effects, subject to limited exceptions. Many new AI regulations are being built with these data protection concepts in mind, so understanding GDPR is a good starting point.

Navigating the EU AI Act

The EU AI Act is probably the most talked-about piece of AI legislation right now. It takes a risk-based approach, categorizing AI systems based on how much harm they could potentially cause.

- Unacceptable Risk: Systems that are banned outright, such as AI used for social scoring of people.

- High-Risk: Systems that require strict conformity assessments before they can be put on the market. This includes AI in critical infrastructure, education, employment, and law enforcement.

- Limited Risk: Systems with specific transparency obligations, like chatbots that need to disclose they are AI.

- Minimal Risk: Most AI systems fall here, with no specific obligations.

Getting ready for this means understanding where your AI systems fit in this classification and what obligations you’ll have. It’s a complex piece of legislation, and companies are spending a lot of time figuring out compliance.
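A first-pass triage of an AI inventory against these tiers can be sketched in code. The Python example below is deliberately simplified: the tag names are hypothetical, the category sets cover only the examples mentioned above, and real classification depends on the Act’s detailed provisions and legal review. It checks from most to least severe and returns the first tier that matches.

```python
# Deliberately simplified, hypothetical tags; not a substitute for legal analysis.
PROHIBITED_PRACTICES = {"social_scoring"}
HIGH_RISK_AREAS = {"critical_infrastructure", "education", "employment", "law_enforcement"}
TRANSPARENCY_TRIGGERS = {"chatbot"}


def provisional_tier(tags: set[str]) -> str:
    """Return the most severe tier triggered by any tag (checked in severity order)."""
    if tags & PROHIBITED_PRACTICES:
        return "unacceptable"
    if tags & HIGH_RISK_AREAS:
        return "high"
    if tags & TRANSPARENCY_TRIGGERS:
        return "limited"
    return "minimal"
```

Because the checks run in order of severity, a chatbot used for hiring (`{"chatbot", "employment"}`) is reported as high risk. In practice, obligations from more than one category can apply to the same system, for example transparency duties alongside high-risk requirements, so a real triage would record every match rather than only the most severe.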

Understanding North American AI Regulations

In North America, things are a bit more fragmented. Canada has its own proposed Artificial Intelligence and Data Act (AIDA), which focuses on risk management and transparency. In the United States, there isn’t one single federal AI law yet, but various agencies are issuing guidance and taking enforcement actions based on existing laws. States are also stepping in; for example, Colorado has passed legislation regulating high-risk AI systems, with a consumer-protection focus on preventing algorithmic discrimination. It’s a patchwork, and you really need to keep an eye on both federal agency actions and state-level developments.

Building an AI-Ready Governance Stack

Once your AI systems are up and running, how do you keep them in check? Building an AI-ready governance stack isn’t just about ticking boxes; it’s about creating a system that works with your AI, not against it. That takes a solid foundation and the right connections between teams and tools, which means integrating your governance, risk, and compliance (GRC) efforts directly into your development workflows.

Architecting Enterprise-Wide AI Governance

Getting everyone on the same page is the first big hurdle. You can’t have one department doing its own thing with AI while another is trying to follow strict rules. It needs to be an organization-wide effort. This involves setting clear policies that apply everywhere, from the data scientists in R&D to the marketing team using AI-powered tools. It’s about making sure everyone understands the rules of the road for AI.

Here are some key steps to get this architecture in place:

- Define Clear Roles and Responsibilities: Who is accountable for what when it comes to AI? This needs to be crystal clear.

- Establish a Central AI Governance Committee: A dedicated group can oversee policies, review high-risk AI projects, and act as a point of contact.

- Develop an AI Inventory: You need to know what AI systems you have, where they are, and what they do. This is a big job, but it’s necessary.

Aligning Compliance, Risk, and Development Workflows

This is where the real magic happens, or where things can get really messy if not done right. Traditionally, compliance and development teams might operate in separate silos. With AI, that just doesn’t work. You need these teams talking to each other constantly. When a new AI model is being built, the compliance folks need to be aware of potential risks early on, and the development team needs to understand the compliance requirements from the start. It’s about building governance into the process, not just checking it at the end. For instance, integrating an identity provider (IdP) such as Microsoft Entra ID or Okta can link every AI agent action to a verified user identity, which supports accountability and traceability.
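Once an IdP has authenticated a user, the identity it asserts can be stamped onto every action an AI agent takes. The Python sketch below keeps an append-only audit log in which each entry carries the user’s subject identifier (the `sub` claim in OpenID Connect) and a SHA-256 hash of the previous entry, so later tampering is detectable. It assumes `user_sub` comes from an ID token your application has already validated; no Entra ID or Okta API is called, and the class and field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only, hash-chained log tying each AI agent action to a verified user."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def record(self, user_sub: str, agent: str, action: str) -> dict:
        # Assumption: user_sub was taken from an ID token the app already validated.
        prev_hash = self.entries[-1]["hash"] if self.entries else ""
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user_sub": user_sub,
            "agent": agent,
            "action": action,
            "prev_hash": prev_hash,
        }
        payload = json.dumps(entry, sort_keys=True).encode()
        entry["hash"] = hashlib.sha256(payload).hexdigest()
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; altering any stored entry makes verification fail."""
        prev_hash = ""
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash:
                return False
            payload = json.dumps(body, sort_keys=True).encode()
            if hashlib.sha256(payload).hexdigest() != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

Chaining hashes is one design choice among several (a write-once store or a managed logging service are alternatives); the point is that every record answers "which person was this agent acting for?"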

The Future of AI-Ready Governance

What does the future look like? Well, it’s all about making governance more automated and proactive. Instead of manual reviews, imagine systems that can automatically flag potential compliance issues in code or monitor AI models for drift and bias in real-time. This requires a flexible and adaptable governance stack. We’re moving towards a model where governance is a continuous process, woven into the fabric of AI development and deployment, rather than an afterthought. This proactive approach is key to staying ahead of the curve and managing AI responsibly as it continues to evolve.
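Automated flagging can start small: a policy check that runs in the deployment pipeline and refuses to ship a model whose governance metadata is incomplete or out of bounds. The Python sketch below reads a hypothetical model card (a dictionary) and returns a list of violations. The field names, the 0.8 fairness impact ratio, and the 0.25 drift threshold (population stability index) are assumptions chosen for illustration.

```python
def deployment_violations(model_card: dict) -> list[str]:
    """Return reasons a model should not ship yet (an empty list means it passes)."""
    violations = []
    if not model_card.get("owner"):
        violations.append("no accountable owner recorded")
    if model_card.get("risk_tier") == "high" and not model_card.get("committee_approved"):
        violations.append("high-risk system lacks governance committee approval")
    ratio = model_card.get("min_impact_ratio")  # lowest group selection-rate ratio
    if ratio is None:
        violations.append("bias test results missing")
    elif ratio < 0.8:
        violations.append(f"impact ratio {ratio:.2f} below 0.80 threshold")
    psi = model_card.get("psi")  # latest input drift score, if monitored
    if psi is not None and psi > 0.25:
        violations.append(f"input drift PSI {psi:.2f} above 0.25")
    return violations


card = {
    "owner": "Risk Analytics",
    "risk_tier": "high",
    "committee_approved": False,
    "min_impact_ratio": 0.72,
}
problems = deployment_violations(card)
```

In a CI job, a non-empty list could fail the build, so the check runs on every release rather than waiting for an occasional manual review.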

Moving Forward with AI Governance

So, we’ve talked a lot about AI and how it’s changing things. It’s pretty clear that just letting AI run wild isn’t a good idea. That’s where AI governance comes in, and honestly, it’s not as scary as it sounds. The IAPP has a bunch of resources, like their AIGP certification and all sorts of guides and webinars, that can really help you get a handle on this. Think of it as getting the right tools and know-how to build something safely. By using what the IAPP provides, you can start putting together a plan to manage AI in your organization, making sure it’s used the right way and doesn’t cause problems down the line. It’s about being smart and prepared for what’s next.

Frequently Asked Questions

What exactly is AI governance and why is it important?

AI governance is like setting the rules for how we use smart computer programs, called AI. It’s super important because AI can do amazing things, but we need to make sure it’s used safely and fairly. Think of it as making sure AI doesn’t cause problems and helps us in good ways.

What are some common problems that can happen with AI?

Sometimes AI can make mistakes, or it might not be fair to everyone. For example, an AI used for hiring might accidentally favor certain groups of people. Other issues can include AI not being clear about how it makes decisions, or even being used for harmful purposes. That’s why we need rules to prevent these things.

How can we make sure AI is trustworthy?

To trust AI, we need it to be reliable, safe, and fair. It should also be clear about how it works, and people should be able to understand its decisions. Plus, we need to be able to fix it if something goes wrong. These are key ideas for building AI we can count on.

What’s the difference between AI and machine learning?

Think of Artificial Intelligence (AI) as the big idea of making computers smart, like humans. Machine learning (ML) is one way to achieve AI. It’s like teaching a computer to learn from examples without being told exactly what to do every single time. So, ML is a part of AI.

Are there special rules for using AI in different countries?

Yes, absolutely! Different countries and regions are creating their own laws and guidelines for AI. For example, Europe has the EU AI Act, and North America has various rules too. It’s important to know these rules because they can affect how AI is used and developed.

How can I learn more about managing AI responsibly?

There are many great resources out there! Organizations like the IAPP offer training and guides on AI governance, helping you understand the rules and best practices. You can also find webinars, white papers, and articles that explain how to build and use AI in a safe and ethical way.