Welcome to the future of IoT, where everything from our homes to our cars is connected. As this network of devices grows, so does the need for faster, more reliable connectivity. That’s where edge computing steps in: a technology that processes data close to the devices that generate it, which speeds up communication between devices and helps keep your data secure. In this blog post, we will explore how edge computing is changing the IoT industry by reducing latency, lowering reliance on cloud services, and helping to secure interconnected devices. So buckle up as we dive into the world of edge computing and discover how it’s unlocking a new level of efficiency and reliability in IoT.

What is Edge Computing?

As the world becomes increasingly interconnected, the need for faster and more reliable data processing has never been greater. Edge computing is a distributed computing paradigm that brings computation and data storage closer to the devices and sensors that generate and collect data.

By moving data processing and analysis closer to the edge of the network, edge computing can enable real-time decisions, improve responsiveness, and reduce latency. Edge computing can also help to improve security and privacy by reducing the amount of sensitive data that needs to be transmitted over the network.
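
To make the idea of sending less data over the network concrete, here is a minimal sketch of an edge device summarizing a window of sensor readings before uploading anything. The function name `summarize_window` and the sample temperature values are assumptions made for illustration, not part of any particular IoT platform.

```python
from statistics import mean


def summarize_window(readings: list[float]) -> dict[str, float]:
    """Reduce a window of raw sensor readings to a small summary.

    Hypothetical helper: only this summary would leave the device,
    while the raw readings stay local.
    """
    if not readings:
        raise ValueError("readings must not be empty")
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": mean(readings),
    }


# Illustrative temperature samples collected on the device.
window = [21.0, 21.5, 22.0, 21.5]
summary = summarize_window(window)
print(summary)
```

The raw readings never leave the device in this sketch, which is one simple way the privacy and bandwidth benefits described above could show up in practice.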

Benefits of Edge Computing for IoT

As the number of devices connected to the internet continues to grow, so does the need for faster and more reliable connectivity. Edge computing is a solution that can help to improve the speed and reliability of IoT devices by processing data closer to the source.

Using edge computing for IoT offers several benefits, including:

Reduced Latency: By processing data closer to the source, there is less latency involved in sending and receiving information. This can be critical for applications where real-time data is required, such as in autonomous vehicles or industrial control systems.

Improved Connectivity: By distributing processing power across multiple locations, edge computing can help to improve connectivity and reduce dependence on a single point of failure. This can make systems more resilient to network disruptions and outages.

Increased Speed: Edge computing can help to improve the speed of data processing by reducing the need to send information back and forth between different locations. This can be especially beneficial for applications that require low-latency or high-throughput data processing.

Improved Efficiency: By processing data locally, edge computing can help to save on bandwidth and energy consumption. This can lead to reduced costs and increased efficiency for both organizations and individuals.
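
The bandwidth saving can be estimated with some back-of-the-envelope arithmetic. The sketch below compares uploading every raw sample with uploading one locally computed summary per minute. All of the sizes and rates are assumed values chosen for illustration; real figures depend on the device and the protocol.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def raw_bytes_per_day(sample_bytes: int, samples_per_second: int) -> int:
    """Bytes uploaded per day if every raw sample is sent upstream."""
    return sample_bytes * samples_per_second * SECONDS_PER_DAY


def summarized_bytes_per_day(summary_bytes: int, window_seconds: int) -> int:
    """Bytes uploaded per day if one summary is sent per window."""
    return summary_bytes * (SECONDS_PER_DAY // window_seconds)


# Assumed figures: 16-byte samples at 10 Hz, versus a 64-byte summary per minute.
raw = raw_bytes_per_day(sample_bytes=16, samples_per_second=10)
summarized = summarized_bytes_per_day(summary_bytes=64, window_seconds=60)

print(f"raw upload:        {raw} bytes/day")
print(f"summarized upload: {summarized} bytes/day")
print(f"reduction factor:  {raw // summarized}x")
```

Under these assumptions a single sensor goes from roughly 13.8 MB per day to about 92 KB per day, a 150-fold reduction.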

How Edge Computing Enhances Connectivity and Speed

IoT devices are becoming increasingly commonplace, with more and more businesses and individuals relying on them to automate tasks and monitor their surroundings. However, as IoT devices become more widespread, the infrastructure needed to support them must also evolve to meet the demand. This is where edge computing comes in.

Edge computing brings compute resources closer to the data source, be it an IoT device or sensor. This reduces network latency and improves response times, which is crucial for real-time applications such as video streaming and gaming, where even a slight delay can result in a poor user experience. In addition, by moving computation away from centralized data centers, edge computing can help to reduce bandwidth costs and improve overall system performance.

So how does this all translate into enhanced connectivity and speed for IoT devices? Firstly, as mentioned above, edge computing can reduce network latency by moving compute resources closer to the data source. This leads to faster response times for IoT devices, which is crucial for real-time applications that require low latency. Secondly, edge computing can improve system performance by distributing load across multiple servers. This can free up bandwidth and processor resources, leading to quicker response times for IoT devices.
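
A rough way to reason about the latency point is to treat response time as network round trip plus processing time. The sketch below uses that simplified model with made-up numbers: a distant cloud region with fast servers versus a nearby edge node with slower hardware. Both the model and the values are assumptions, not measurements.

```python
def response_time_ms(network_rtt_ms: float, processing_ms: float) -> float:
    """Simplified model: total response time is round trip plus compute time."""
    return network_rtt_ms + processing_ms


# Assumed figures for illustration only.
cloud = response_time_ms(network_rtt_ms=80.0, processing_ms=5.0)
edge = response_time_ms(network_rtt_ms=2.0, processing_ms=15.0)

print(f"cloud: {cloud} ms, edge: {edge} ms")
```

Even though the edge node is assumed to be three times slower at processing, the shorter network path dominates in this example. With a heavier computation or a nearby cloud region, the comparison could go the other way.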

Emerging Technologies in Edge Computing and IoT

Emerging technologies in edge computing and IoT are revolutionizing the way we interact with the physical world. By bringing computing closer to the data sources and devices, we can reduce latency, increase speeds, and improve security. The benefits of edge computing are already being realized in a number of industries, from retail to healthcare to manufacturing. And as IoT devices become more widespread, the need for efficient and secure edge solutions will only grow.

Cloud vs. Edge Computing in IoT

The debate between cloud and edge computing in the context of the Internet of Things (IoT) has been ongoing for some time. There are pros and cons to both approaches, and ultimately it comes down to what best suits the needs of a given organization or application. In this section, we’ll take a closer look at both cloud and edge computing in IoT and compare their respective strengths and weaknesses.

At its core, IoT is all about connecting devices to the internet so they can share data and be controlled remotely. The first step in doing this is to install sensors on the devices themselves, which can then collect data about their surroundings or user interactions. This data is then transmitted wirelessly to an internet-connected platform where it can be accessed, analyzed, and acted upon.

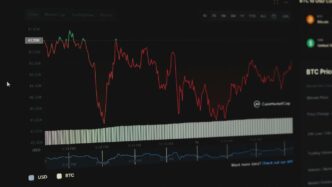

Whether this data should be processed in the cloud or at the edge has been fiercely contested among experts. The cloud offers clear advantages in scalability and storage capacity, while edge computing can provide faster response times and improved security by keeping sensitive data on-site. Which option is better depends on the workload: applications with tight latency requirements or sensitive data favor the edge, while large-scale storage and analysis favor the cloud.
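
The trade-off described above can be sketched as a simple placement rule. The `Workload` fields, the `choose_placement` function, and the 80 ms cloud round-trip figure are all hypothetical. A real decision would weigh more factors, such as cost and available hardware.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical description of an IoT workload."""
    name: str
    max_latency_ms: float
    contains_sensitive_data: bool


def choose_placement(workload: Workload, cloud_rtt_ms: float = 80.0) -> str:
    """Pick "edge" when the cloud round trip alone would miss the latency
    target, or when sensitive data should stay on-site; otherwise "cloud"."""
    if workload.contains_sensitive_data:
        return "edge"
    if workload.max_latency_ms < cloud_rtt_ms:
        return "edge"
    return "cloud"


brake_control = Workload("brake-control", max_latency_ms=10.0, contains_sensitive_data=False)
usage_report = Workload("monthly-usage-report", max_latency_ms=60_000.0, contains_sensitive_data=False)

print(choose_placement(brake_control))
print(choose_placement(usage_report))
```

In this sketch the latency-critical control loop stays at the edge, while the report that can wait a minute goes to the cloud, where storage and scale are easier to come by.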

Challenges Facing ML/AI in Edge Computing Environments

Due to the resource-intensive nature of machine learning and artificial intelligence, deploying these technologies to edge computing environments can present significant challenges. These include:

- Lack of compute power: Edge devices are often limited in terms of processing power and memory, which can make it difficult to run complex ML/AI algorithms.

- Limited connectivity: Edge devices are often located in remote or hard-to-reach areas, which can make it difficult to get reliable data connections.

- High costs: Deploying ML/AI on edge devices can be expensive, due to the need for specialized hardware and software.

- Security risks: Edge devices are particularly vulnerable to security breaches, due to their distributed nature and lack of physical security measures.
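
The compute and memory constraint in the first bullet can be illustrated with a quick sizing check. The sketch below estimates whether a model's weights fit in a device's memory. The parameter count, the memory size, and the idea of storing weights at one byte each instead of four are assumptions for illustration, and the estimate ignores activations and runtime overhead.

```python
def model_size_bytes(num_parameters: int, bytes_per_parameter: int) -> int:
    """Approximate memory needed for the model weights alone."""
    return num_parameters * bytes_per_parameter


def fits_on_device(num_parameters: int, bytes_per_parameter: int,
                   device_memory_bytes: int) -> bool:
    """True if the weights alone fit in the device's memory."""
    return model_size_bytes(num_parameters, bytes_per_parameter) <= device_memory_bytes


MIB = 1024 * 1024
device_memory = 64 * MIB   # assumed edge device with 64 MiB available
parameters = 25_000_000    # assumed model size

print(fits_on_device(parameters, 4, device_memory))  # 32-bit weights
print(fits_on_device(parameters, 1, device_memory))  # 8-bit weights
```

With these assumed figures, the 32-bit version needs about 100 MB and does not fit, while the 8-bit version needs about 25 MB and does. This is why model size is often the first thing to check before targeting an edge device.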

Conclusion

Edge computing is set to play a central role in the future of the Internet of Things, with its promise of enhanced connectivity and speed across systems. By distributing data processing instead of relying on a centralized model, edge networks can deliver lower latency while improving security and privacy.

Setting up an effective edge network infrastructure takes some effort upfront, but it pays off for those who want both fast performance and secure data handling on their connected devices. With the IoT continuing to expand each year, technologies such as edge computing will become increasingly necessary to ensure a safe and reliable user experience.