Understanding Autonomous Vehicle Cameras

So, how do these self-driving cars actually ‘see’ the world? A big part of the answer is cameras, and not just any old cameras. We’re talking about sophisticated systems that act like the vehicle’s eyes, helping it understand everything happening around it. Cameras are one of the main ways autonomous vehicles gather information to make driving decisions, often working alongside radar and lidar. Think of it like this: a human driver uses their eyes to see the road, other cars, pedestrians, and signs. Autonomous vehicles do the same, but with advanced technology.

The Role of Cameras in Autonomous Driving

Cameras are the workhorses for perception in self-driving cars. They capture visual data, which is then processed by the car’s computer brain. This data helps the car:

- Identify other vehicles and predict their movements.

- Spot pedestrians, cyclists, and other road users.

- Read traffic signs and understand traffic light signals.

- Stay within its lane and follow the road’s curvature.

- Map out the road ahead, including any changes in elevation or surface.

Without these cameras, the car would be close to blind: unable to read signs, lane markings, or traffic lights, and far less able to navigate safely or react to its environment.

2D vs. 3D Camera Systems

When we talk about cameras for autonomous driving, there are generally two main types:

- 2D Cameras: These are like the cameras you might find on your phone or a regular digital camera. They capture flat, high-resolution images that are great for recognizing objects, reading signs, seeing colors, and lane keeping, but on their own they don’t directly tell the car how far away something is.

- 3D Camera Systems: These systems go a step further by providing depth information. This can be achieved through various methods, like using two cameras spaced apart (stereo vision) to mimic human binocular vision, or using technologies like Time-of-Flight (ToF) sensors that measure how long it takes light to bounce back from objects. This 3D data is vital for accurately judging distances to obstacles and understanding the shape of the environment.

Many autonomous systems use a mix of both 2D and 3D sensing to get the most complete picture possible.
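For a rectified stereo pair, the geometry behind stereo vision comes down to one formula: depth equals the focal length (in pixels) times the distance between the two cameras (the baseline), divided by the disparity, i.e. how many pixels a point shifts between the left and right images. Here’s a minimal Python sketch of that relationship. The focal length, baseline, and disparity values are made-up numbers for a hypothetical rig, and a real system would first have to match points between the two images to find each disparity.

```python
def stereo_depth_m(focal_length_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth of a point seen by a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0:
        # Zero disparity means the point is effectively at infinity.
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


# Hypothetical rig: 1000 px focal length, cameras mounted 0.3 m apart.
for disparity in (60.0, 15.0, 6.0):
    depth = stereo_depth_m(1000.0, 0.3, disparity)
    print(f"disparity {disparity:4.0f} px -> depth {depth:.1f} m")
```

Because depth is inversely proportional to disparity, precision drops with distance: in this hypothetical rig, a one-pixel error at 6 px of disparity moves the estimate from 50 m to roughly 43 m or 60 m.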

Key Camera Features for Smart Mobility

For cameras to work effectively in self-driving cars, they need some special features:

- Depth Perception: As mentioned, being able to tell how far away things are is a big deal. This capability allows the car to avoid collisions and maintain safe distances.

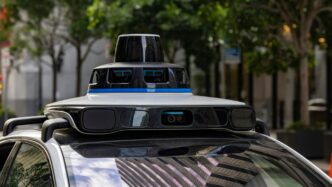

- Multi-Camera Support: To get a full 360-degree view and avoid blind spots, cars often have multiple cameras placed all around. Together they give the system a complete understanding of its surroundings, and their feeds can even be combined into a bird’s-eye view.

- Long-Distance Transmission: The data from these cameras needs to reach the car’s computer quickly and reliably, even across the several meters of cabling that can separate a camera from the computer. Serializer link technologies like GMSL2 and FPD-Link III are often used for this, delivering real-time video feeds with minimal delay. That low latency matters because the car has to react to what its cameras see as it happens.

Core Functions of Autonomous Vehicle Cameras

Autonomous vehicle cameras are the eyes of the car, constantly taking in information to help it understand what’s going on around it. They’re not just taking pretty pictures; they’re doing some really important jobs that keep the car moving safely and smartly.

Object Detection and Recognition

This is probably the most talked-about function. Cameras help the car spot things like other cars, pedestrians, cyclists, and even animals. They don’t just see them; they figure out what they are and how they’re moving. This is super important for avoiding crashes. Think about it: if the car can’t see a person stepping into the road, it can’t stop in time. The system analyzes the shapes, sizes, and movements of objects to identify them. This ability to distinguish between a parked car and a moving one is key to safe driving.
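Telling a parked car from a moving one usually comes down to tracking the same object across frames and checking how far it travels. Here’s a minimal sketch under some big assumptions: positions are already in meters on the ground plane, expressed in a fixed world frame (so the car’s own motion has been subtracted out), the frame rate is 30 fps, and the 0.5 m/s threshold is an arbitrary illustrative value. Without that ego-motion correction, a parked car would appear to move simply because the camera is moving.

```python
import math


def estimate_speed_m_s(track, frame_interval_s):
    """Average speed from the first and last positions in a track.

    track: list of (x, y) ground-plane positions in meters, one per frame,
    in a fixed world frame (assumed already ego-motion compensated).
    """
    if len(track) < 2:
        raise ValueError("need at least two positions")
    (x0, y0), (x1, y1) = track[0], track[-1]
    elapsed_s = (len(track) - 1) * frame_interval_s
    return math.hypot(x1 - x0, y1 - y0) / elapsed_s


def is_moving(track, frame_interval_s, threshold_m_s=0.5):
    # The threshold absorbs small jitter in the position estimates.
    return estimate_speed_m_s(track, frame_interval_s) > threshold_m_s


frame_interval = 1 / 30  # assumed 30 frames per second
parked = [(10.0, 2.0), (10.01, 2.0), (10.0, 2.01), (10.0, 2.0)]
moving = [(10.0, 2.0), (10.3, 2.0), (10.6, 2.0), (10.9, 2.0)]
print(is_moving(parked, frame_interval), is_moving(moving, frame_interval))
```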

Lane Tracking and Road Mapping

Cameras are also responsible for keeping the car in its lane. They look for lane markings on the road – solid lines, dashed lines, even faded ones. By tracking these lines, the car knows where the edges of its lane are and can stay centered. Beyond just lanes, cameras help build a picture of the road’s layout. This includes understanding curves, changes in elevation, and where the road is going. This detailed map helps the car plan its path smoothly.
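Once lane markings have been found in the image, keeping the car centered is mostly geometry. The sketch below assumes a lane detector (not shown) has already located the left and right lane lines in the image row nearest the car, that the camera is mounted on the car’s centerline, that the road is flat, and that the lane is 3.7 m wide; that width is an assumed value used only to convert pixels to meters.

```python
def lane_center_offset_m(left_x_px, right_x_px, image_width_px, lane_width_m=3.7):
    """Lateral offset of the car from the lane center, in meters.

    Positive means the car sits right of center; negative means left.
    Assumes the camera is on the car's centerline and the road is flat.
    """
    if right_x_px <= left_x_px:
        raise ValueError("right lane line must be to the right of the left one")
    lane_center_px = (left_x_px + right_x_px) / 2
    car_center_px = image_width_px / 2  # camera on the car's centerline
    meters_per_px = lane_width_m / (right_x_px - left_x_px)  # scale at this image row
    return (car_center_px - lane_center_px) * meters_per_px


# Hypothetical detections in a 1920-px-wide frame.
print(f"{lane_center_offset_m(400, 1400, 1920):+.2f} m")
```

A lane-keeping controller would then steer to drive this offset toward zero. Here the 60-pixel shift between the image center and the lane center works out to about 0.22 m right of center.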

Traffic Sign and Signal Interpretation

Ever seen a stop sign or a speed limit sign? The camera system’s software is trained to recognize these. It can read the text and symbols on traffic signs, understanding what the rules of the road are at any given moment. This also extends to traffic lights. The camera system can identify the color of the light, telling the car whether to stop, go, or proceed with caution. This interpretation is vital for obeying traffic laws and interacting safely with other road users.
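Production systems rely on trained neural networks for this, but the core idea behind reading a light’s color can be shown with a toy example: convert the lamp’s average color to hue, saturation, and brightness, then bucket the hue. This sketch uses Python’s built-in `colorsys` module and assumes the lit lamp has already been located in the image; the hue and brightness thresholds are illustrative guesses, not tuned values.

```python
import colorsys


def classify_light(r: int, g: int, b: int) -> str:
    """Classify a lit lamp's average RGB color (0-255 per channel)."""
    h, s, v = colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)
    if v < 0.3 or s < 0.4:
        return "unknown"  # too dark or too washed out to call
    hue_deg = h * 360
    if hue_deg < 20 or hue_deg >= 340:
        return "red"
    if hue_deg < 70:
        return "yellow"
    if 90 <= hue_deg < 200:
        return "green"  # signal greens can lean toward blue-green
    return "unknown"


for rgb in [(255, 0, 0), (255, 191, 0), (0, 255, 170), (30, 0, 0)]:
    print(rgb, classify_light(*rgb))
```

A real system also has to cope with LED flicker, glare, and signals meant for other lanes, which is part of why learned models are used in practice.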

Enhancing Safety with Autonomous Vehicle Cameras

Autonomous vehicle cameras are really the eyes of the car, and they do a lot more than just see. They’re key to making sure everyone stays safe on the road, whether it’s the people inside the car or those outside. Think about it – without good vision, how can a car possibly know what’s going on around it?

Pedestrian and Vehicle Detection

One of the biggest jobs for these cameras is spotting people and other cars. Using advanced computer vision, the car can identify pedestrians stepping off a curb or vehicles changing lanes. This isn’t just about seeing them; it’s about understanding their movement and predicting what they might do next. This allows the car to react in time, like slowing down or stopping, to avoid a collision. It’s like having a super-vigilant co-pilot who never gets distracted. The system needs to be really good at telling the difference between a shadow and a person, or a parked car and one that’s about to pull out.
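One common way to turn “predicting what they might do next” into a number is time-to-collision: the gap to an object divided by the speed at which that gap is closing. The sketch assumes both road users keep their current velocities, and the 2-second braking threshold is a hypothetical value chosen for illustration, not a standard.

```python
import math

BRAKE_THRESHOLD_S = 2.0  # hypothetical threshold for this example


def time_to_collision_s(distance_m: float, closing_speed_m_s: float) -> float:
    """Seconds until contact if both objects keep their current velocities."""
    if closing_speed_m_s <= 0:
        return math.inf  # gap is steady or growing: no collision predicted
    return distance_m / closing_speed_m_s


def should_brake(distance_m: float, closing_speed_m_s: float) -> bool:
    return time_to_collision_s(distance_m, closing_speed_m_s) < BRAKE_THRESHOLD_S


print(should_brake(15.0, 10.0))  # 1.5 s to contact
print(should_brake(40.0, 10.0))  # 4.0 s to contact
print(should_brake(5.0, -1.0))   # object moving away
```

The constant-velocity assumption is the weak spot: a pedestrian can stop or change direction at any moment, so an estimate like this only stays useful if it is recomputed frame by frame.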

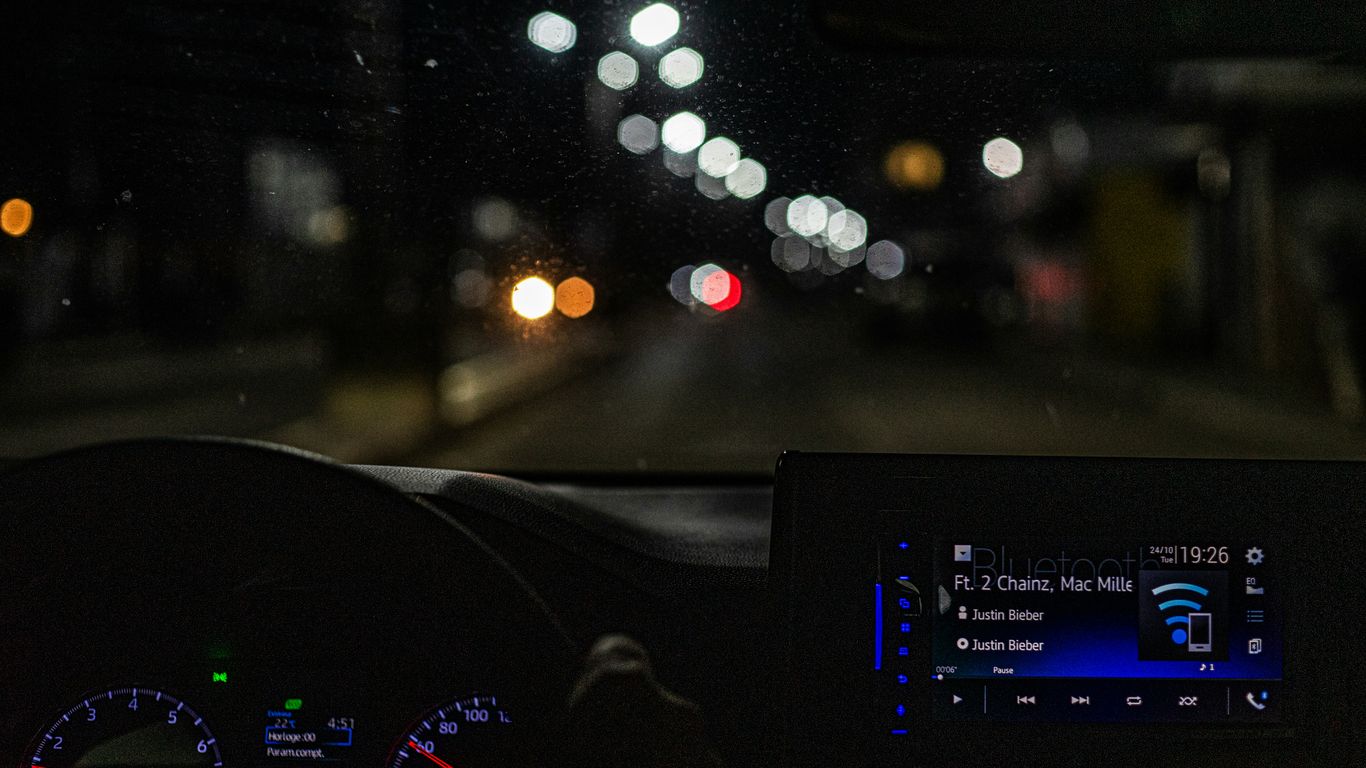

Low Visibility and Adverse Weather Performance

Driving isn’t always sunshine and clear roads, right? Cameras have to work even when it’s foggy, raining hard, or dark. New technologies are helping cameras see better in these tough conditions. Some systems use special types of cameras or combine data from different sensors to get a clearer picture. For example, thermal cameras can detect heat signatures, making it easier to spot people or animals at night or in fog. Radar and lidar also help fill in the gaps when camera vision is limited. The goal is to make sure the car can still ‘see’ and react safely, no matter the weather.

Smart Airbag Deployment Systems

Cameras also play a role in how safety systems like airbags work. When a crash is about to happen, the car’s cameras and other sensors can help estimate how severe the impact will be. This information is sent to the car’s computer, which then decides the best way to deploy the airbags. For instance, it might deploy them with different force levels depending on whether it’s a minor bump or a major collision, or depending on whether anyone is sitting in a given seat. This smart deployment helps protect passengers more effectively during an accident.

Technological Advancements in Camera Systems

Autonomous vehicle cameras are getting pretty sophisticated these days, way beyond just taking a picture. We’re seeing some really cool tech that helps them see the world in new ways.

Depth Perception Technologies

One big leap is how cameras can now figure out how far away things are. A single conventional camera just sees a flat image, which isn’t super helpful when you need to know if that car is right in front of you or a block away. New systems use things like stereo vision (basically two cameras working together like our eyes) or Time-of-Flight (ToF) sensors that bounce light off objects to measure distance. This 3D understanding is a game-changer for avoiding collisions and staying in the lane. It lets the car build a much better picture of its surroundings.
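Time-of-Flight boils down to simple arithmetic: light travels to the object and back, so the distance is the speed of light times the round-trip time, divided by two. This sketch covers the pulsed (direct) case; many ToF cameras instead infer the delay from the phase shift of modulated light, but the same relationship applies once the delay is known. The example timings are illustrative.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_distance_m(round_trip_time_s: float) -> float:
    """Distance to an object from the round-trip time of a light pulse."""
    # The light covers the distance twice (out and back), hence the division by two.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2


print(f"{tof_distance_m(100e-9):.2f} m")  # 100-nanosecond round trip
print(f"{tof_distance_m(1e-9) * 100:.1f} cm per nanosecond of timing error")
```

The second line shows why ToF hardware needs very precise timing: every nanosecond of error shifts the measured distance by about 15 cm.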

Multi-Camera Support and Coverage

Cars aren’t just using one camera anymore. They’re packing multiple cameras all around the vehicle. Think of it like giving the car eyes everywhere – front, back, sides, even looking up. This setup helps get rid of blind spots and gives the car a full 360-degree view. This is super useful for things like parking, where you need to see everything around you, or for spotting pedestrians who might pop out from unexpected places.

Here’s a quick look at how different camera setups can help:

- Single Camera: Good for basic tasks like reading lane lines or traffic signs.

- Stereo Camera Pair: Provides depth information, useful for judging distances to objects.

- Surround View System (Multiple Cameras): Creates a bird’s-eye view, ideal for low-speed maneuvers and detecting nearby obstacles.
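A quick way to check a camera layout for blind spots is to walk around the car one degree at a time and ask whether any camera’s horizontal field of view covers that bearing. This is a minimal sketch with hypothetical yaw angles and lens widths; real surround-view design also has to account for vertical coverage, mounting height, the car’s own bodywork blocking the view, and the overlap needed to stitch images together.

```python
def is_seen(bearing_deg, yaw_deg, fov_deg):
    # Signed angle from the camera's axis to this bearing, in [-180, 180).
    offset = (bearing_deg - yaw_deg + 180) % 360 - 180
    return abs(offset) <= fov_deg / 2


def uncovered_bearings(cameras):
    """Return whole-degree bearings (0-359) that no camera sees.

    cameras: list of (yaw_deg, horizontal_fov_deg) pairs, yaw 0 = straight ahead.
    """
    return [b for b in range(360)
            if not any(is_seen(b, yaw, fov) for yaw, fov in cameras)]


# Hypothetical layouts: one camera every 90 degrees around the car.
wide = [(0, 100), (90, 100), (180, 100), (270, 100)]
narrow = [(0, 80), (90, 80), (180, 80), (270, 80)]
print(len(uncovered_bearings(wide)), len(uncovered_bearings(narrow)))
```

With 100° lenses the four views overlap and nothing is missed; with 80° lenses, four 9-degree slices at the corners go unseen.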

Long-Distance Data Transmission

All these cameras are generating a ton of data, and it needs to get where it’s going quickly and reliably. Advanced systems are using special connections, like GMSL2 or FPD-Link III, that can send high-quality video signals over longer distances without losing quality. This is important because the car’s computer needs to get that visual information fast to make decisions, especially at higher speeds or when dealing with complex road situations. It’s like having a super-fast highway for all the visual information the car is collecting.
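To get a feel for why these dedicated links matter, it helps to estimate how much raw data a camera produces: width × height × bits per pixel × frames per second. The resolutions, bit depth, and frame rate below are assumed example values, and the figures ignore compression and protocol overhead, so a real link budget should come from the serializer’s datasheet.

```python
def raw_video_gbps(width_px, height_px, bits_per_pixel, frames_per_second):
    """Uncompressed video data rate in gigabits per second (no protocol overhead)."""
    return width_px * height_px * bits_per_pixel * frames_per_second / 1e9


# Assumed examples: 12-bit raw sensor output at 30 frames per second.
full_hd = raw_video_gbps(1920, 1080, 12, 30)
four_k = raw_video_gbps(3840, 2160, 12, 30)
print(f"1080p camera: {full_hd:.2f} Gbps")
print(f"4K camera:    {four_k:.2f} Gbps")
print(f"Eight 1080p cameras: {8 * full_hd:.2f} Gbps")
```

A single camera can produce close to a gigabit per second or more of raw video, and a multi-camera setup runs to several gigabits per second in total, which helps explain why dedicated serializer links are used rather than general-purpose in-car networks.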

Challenges in Autonomous Vehicle Camera Perception

When it comes to how self-driving cars perceive the world, camera systems play a giant part, but they face all sorts of real-world hurdles every day. These problems can seriously affect how well a car sees and reacts to the things around it. Here’s a closer look at the main ones:

Adapting to Lighting and Environmental Changes

Driving means moving through bright sun, dark tunnels, and everything in between. Camera sensors can struggle to spot objects accurately when light changes quickly or when there’s too much glare or shadow. Here’s what can throw them off:

- Nighttime driving, fog, or strong sunlight hitting the lens

- Reflections from wet roads or windows

- Objects blending into their backgrounds (think gray cars on rainy days)

Keeping cameras precise in all conditions is still a tall order for many carmakers. Sometimes, software can adjust exposure or filter out extra light, but that’s not always enough for extreme weather.
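Here’s what a very simple software exposure adjustment might look like: measure the frame’s average brightness, then nudge the exposure time toward a target level, moving only part of the way each frame so the image doesn’t flicker. Everything here (the 0.45 target, the 0.5 damping factor, the exposure limits, and the assumption that brightness scales roughly with exposure time) is a hypothetical choice for illustration.

```python
def next_exposure_ms(current_ms, mean_brightness, target=0.45,
                     damping=0.5, min_ms=0.05, max_ms=30.0):
    """Suggest the next frame's exposure time.

    mean_brightness: average pixel value of the current frame, scaled to 0-1.
    Assumes brightness scales roughly in proportion to exposure time.
    """
    ratio = target / max(mean_brightness, 1e-3)  # guard against an all-black frame
    proposed_ms = current_ms * ratio ** damping  # damping < 1 moves only part of the way
    return min(max(proposed_ms, min_ms), max_ms)  # respect the sensor's limits


print(f"{next_exposure_ms(10.0, 0.90):.2f} ms")  # sudden glare: shorten exposure
print(f"{next_exposure_ms(10.0, 0.10):.2f} ms")  # entering a tunnel: lengthen it
print(f"{next_exposure_ms(10.0, 0.02):.2f} ms")  # very dark: capped at the maximum
```

The clamp at the end is where this approach runs out of room: in a very dark scene the exposure hits its ceiling, and no single exposure setting can rescue a frame that holds both a dark tunnel interior and bright daylight, which is one reason automotive cameras often use high-dynamic-range sensors.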

Ensuring Accuracy in Complex Scenarios

On a busy city street, there might be cyclists, pets, crosswalks, delivery vans, and construction signs—all crammed together. Cameras have to pick out each item and decide what’s important, super fast. Some problems that show up include:

- Obscured or partially visible objects, like a child darting out from behind a parked car.

- Unexpected movement and crowded intersections.

- Mistaking harmless things (like trash bags or shadows) for dangerous obstacles.

The pressure is on to balance sensitivity with the ability to ignore false alarms.

Minimizing False Detections

Detection errors come in two flavors: a system that is too jumpy flags things that aren’t really there (false positives), while one that isn’t sensitive enough misses things that are (false negatives). Either kind of mistake, made too often, can ruin trust in autonomous cars. Here’s a snapshot of how these errors break down:

| Error Type | Example | Possible Result |
|---|---|---|
| False Positive | Thinks a harmless bush is a person | Sudden unnecessary stop |
| False Negative | Misses a cyclist in a lane | Failure to yield, potential crash |

Car developers try to reduce both, but hitting the perfect balance is tricky. It takes lots of testing, better training data, and sometimes more advanced sensors to cut down on mistakes.
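Most detectors output a confidence score for each candidate object, and the balance between the two error types is largely set by the threshold the system uses to accept a detection. The toy data below is made up purely for illustration (each entry pairs a score with whether the object was really there); it shows how raising the threshold trades false positives for false negatives.

```python
def count_errors(scored_candidates, threshold):
    """Count (false positives, false negatives) at a given confidence threshold.

    scored_candidates: list of (confidence, is_real_object) pairs.
    """
    false_positives = sum(1 for score, real in scored_candidates if score >= threshold and not real)
    false_negatives = sum(1 for score, real in scored_candidates if score < threshold and real)
    return false_positives, false_negatives


# Made-up example scores, not real detector output.
candidates = [(0.95, True), (0.85, True), (0.70, False), (0.60, True),
              (0.40, False), (0.35, True), (0.20, False), (0.10, False)]

for threshold in (0.3, 0.5, 0.8):
    fp, fn = count_errors(candidates, threshold)
    print(f"threshold {threshold}: {fp} false positives, {fn} false negatives")
```

No threshold in this example gets both counts to zero, because some harmless objects score higher than some real ones. Better training data aims to separate those two groups so that a single threshold works well. This toy only counts objects the detector scored at all; an object it never proposes is also a false negative.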

In a nutshell: getting autonomous vehicle cameras to see as well as people do, especially in unpredictable situations, isn’t easy, and plenty of challenges still need solving before they’re fully road-ready.

The Future of Autonomous Vehicle Cameras

So, what’s next for the cameras that act as the eyes of self-driving cars? It’s pretty exciting stuff, honestly. We’re talking about systems that can process information almost instantly and capture images so fast they’d make your head spin. The market for these automotive vision systems is really set to take off.

Real-Time Data Processing and Decision-Making

Right now, a lot of the heavy lifting for processing camera data happens on the vehicle itself. But the future is leaning towards even faster, more efficient processing. Think about it: the quicker a car’s ‘brain’ can understand what its ‘eyes’ are seeing, the faster it can react. This means fewer delays in making critical driving decisions, which is obviously a big deal for safety.

Here’s a quick look at how it’s evolving:

- Edge Computing: More processing power will be built directly into the cameras or nearby chips on the vehicle. This cuts down on the time it takes to send data back and forth.

- AI Acceleration: Specialized hardware and smarter AI algorithms will crunch the visual data much faster, allowing for quicker identification of everything from pedestrians to road signs.

- Predictive Analysis: Future systems might not just see what’s happening now, but also predict what’s likely to happen next based on current visual cues. This could mean anticipating a pedestrian stepping out or another car changing lanes.

High-Speed Image Capture and Analysis

Driving isn’t always a slow, leisurely cruise. When cars are moving fast, their cameras need to keep up. Capturing clear, sharp images of fast-moving objects without blur is a major focus. It’s like trying to take a clear photo of a hummingbird in flight – tricky!

- Faster Shutter Speeds: Shorter exposure times freeze motion, so fast-moving objects stay sharp instead of smearing across the image.

- Improved Sensor Technology: New sensors will be better at handling rapid changes in light and movement, reducing image noise and distortion.

- Advanced Image Stabilization: Sophisticated software and hardware will work together to counteract vibrations and movement, ensuring a steady, clear view even on bumpy roads or at high speeds.
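The benefit of shorter exposures can be estimated with the pinhole camera model: an object moving sideways across the view smears across roughly focal length × speed × exposure time ÷ distance pixels. The focal length, speed, distance, and exposure times below are assumed example values, and the sketch ignores the car’s own motion and vibration, which add blur of their own.

```python
def motion_blur_px(lateral_speed_m_s, distance_m, exposure_s, focal_length_px):
    """Approximate blur streak length in pixels (pinhole model).

    Assumes motion perpendicular to the camera's optical axis.
    """
    return focal_length_px * lateral_speed_m_s * exposure_s / distance_m


# Hypothetical scene: a cyclist crossing at 5 m/s, 20 m ahead, 1000 px focal length.
for exposure_ms in (10.0, 1.0):
    blur = motion_blur_px(5.0, 20.0, exposure_ms / 1000, 1000.0)
    print(f"{exposure_ms:>4} ms exposure -> about {blur:.2f} px of blur")
```

Cutting the exposure tenfold cuts the blur tenfold, but it also lets in a tenth of the light, which is why shorter exposures go hand in hand with more sensitive sensors.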

The Growing Market for Automotive Vision

All these advancements aren’t just happening in labs; they’re fueling massive growth in the automotive vision market. Experts predict this market will nearly double in size between 2022 and 2032, expanding beyond just self-driving cars to other areas of transportation too.

| Market Segment | 2022 Value (Estimated) | 2032 Value (Projected) |
|---|---|---|
| Automotive Vision | $11.9 Billion | $22.76 Billion |

This growth shows just how important cameras and the technology behind them are becoming for the future of how we get around.
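For a sense of what “nearly double” means year to year, the table’s two figures can be turned into a compound annual growth rate: (end ÷ start)^(1 ÷ years) - 1. This quick Python check uses only the numbers from the table.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values."""
    return (end_value / start_value) ** (1 / years) - 1


start_2022, end_2032 = 11.9, 22.76  # USD billions, from the table
growth = cagr(start_2022, end_2032, 2032 - 2022)
print(f"{end_2032 / start_2022:.2f}x overall, about {growth:.1%} per year")
```

That works out to about 1.91 times the 2022 value, or roughly 6.7% growth per year.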

The Road Ahead

So, where does all this leave us? It’s pretty clear that cameras are a huge deal for self-driving cars. They’re basically the eyes of the vehicle, letting it see and understand everything around it, from lane lines to other cars and even people. While there are still some tricky bits to sort out, like making sure cameras work perfectly in bad weather or bright sun, the progress is undeniable. These systems are getting smarter all the time, and they’re key to making cars safer and driving a lot less stressful for everyone. It feels like we’re really on the verge of something big, and cameras are leading the charge.