So, you’ve probably heard about CPU caches before. They’re like little speed boosters for your computer, holding onto data your processor needs quickly. We usually talk about L1, L2, and L3 caches, but there’s another level that’s been popping up more often: the L4 cache. What exactly is this L4 cache, and does it actually make a difference in how fast your computer runs? Let’s break it down.

Key Takeaways

- The CPU cache hierarchy, with its L1, L2, and L3 levels, is designed to reduce the time it takes for the processor to access data, helping to overcome the ‘memory wall’.

- L4 cache is an additional, typically larger and slower, cache level that sits beyond the L3 cache, often using eDRAM technology.

- The inclusion of an L4 cache can improve performance by providing faster access to data than main memory, especially for certain types of tasks.

- Cache design involves understanding concepts like cache lines (the basic unit of data transfer) and associativity, which affects how data is stored and retrieved.

- As Moore’s Law slows, technologies like L4 caches are becoming more common, representing an ongoing evolution in CPU memory systems to boost performance.

Understanding the CPU Cache Hierarchy

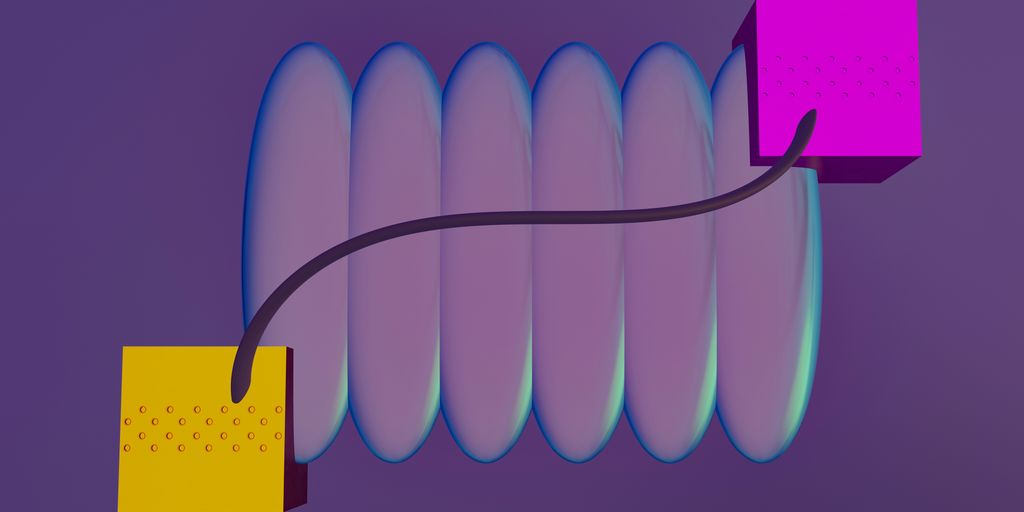

Think of your CPU like a chef in a busy kitchen. The chef needs ingredients constantly, but the main pantry (your computer’s main memory, or RAM) is a bit of a walk away. To speed things up, the chef keeps frequently used items like spices, oils, and common vegetables right on the counter or in small drawers nearby. These are like your CPU caches.

The Role of Caches in Combating the Memory Wall

Computers have a problem called the "memory wall." Basically, processors have gotten incredibly fast, but main memory (RAM) hasn’t kept pace. This means the CPU often has to wait around for data to arrive from RAM, which is like our chef waiting for ingredients to be delivered from the pantry. Caches are small, super-fast memory areas located right on or very close to the CPU. They store copies of data that the CPU is likely to need soon. When the CPU needs data, it checks the cache first. If the data is there (a "cache hit"), it gets it almost instantly. If not (a "cache miss"), it has to go to RAM, which takes much longer. This is why caches are so important for overall computer speed. Keeping frequently accessed data close by dramatically reduces waiting times.
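To make the hit/miss idea concrete, here is a minimal sketch of a tiny cache in Python. It is a toy model, not how real hardware is built: the function name `simulate_lru`, the least-recently-used eviction rule, and the two access patterns are all assumptions chosen for illustration.

```python
from collections import OrderedDict

def simulate_lru(accesses, capacity):
    """Count hits and misses for a tiny cache that evicts the least recently used entry."""
    cache = OrderedDict()  # keys are addresses, ordered oldest -> newest
    hits = misses = 0
    for addr in accesses:
        if addr in cache:
            hits += 1
            cache.move_to_end(addr)        # mark as most recently used
        else:
            misses += 1
            cache[addr] = True
            if len(cache) > capacity:
                cache.popitem(last=False)  # evict the least recently used entry
    return hits, misses

# A loop that keeps revisiting the same four addresses
reuse_heavy = [0, 1, 2, 3] * 5
# A scan that never touches the same address twice
streaming = list(range(20))

print(simulate_lru(reuse_heavy, capacity=4))  # (16, 4)
print(simulate_lru(streaming, capacity=4))    # (0, 20)
```

The first pattern misses only while the cache warms up, then hits every time. The second never reuses anything, so the cache cannot help at all. Real programs sit somewhere in between.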

Levels of Cache: L1, L2, and L3

Modern CPUs don’t just have one cache; they have a hierarchy, like different levels of storage for our chef.

- L1 Cache: This is the smallest and fastest cache, located directly within each CPU core. It’s like the chef’s immediate workspace, holding the most critical ingredients. L1 is usually split into two parts: one for instructions the CPU needs to execute and one for the data it’s working with. Sizes are typically quite small, often around 32KB to 64KB per core.

- L2 Cache: This is a bit larger and slightly slower than L1. It’s like a small fridge or a shelf right next to the chef’s main workspace. L2 caches are often dedicated to individual cores but are larger, maybe a few hundred KB to a couple of megabytes. They store data that’s used frequently but not quite as immediately as L1 data.

- L3 Cache: This is the largest and slowest of the on-chip caches. It’s usually shared among all the CPU cores. Think of it as a communal prep station in the kitchen, holding ingredients that multiple chefs might need. L3 caches can be several megabytes in size (e.g., 6MB, 8MB, or more).

Cache Size and Latency Trade-offs

There’s a constant balancing act in designing caches. Bigger caches can hold more data, which means fewer cache misses. However, making caches larger also makes them slower to access (higher latency) and takes up more space on the CPU chip, which drives up manufacturing costs. It’s a trade-off: more capacity versus faster access. The goal is to find the sweet spot where the cache is large enough to be effective but still fast enough to make a real difference. For instance, accessing L1 cache might take just a few CPU cycles, while L3 might take around 20-30 cycles, and RAM could take over 100 cycles. You can see why having those faster levels is so beneficial.

Here’s a general idea of how the levels compare:

| Level | Typical Size | Typical Latency (Cycles) | Location |
|---|---|---|---|
| L1 | 32-64 KB | 3-5 | Per Core |
| L2 | 256 KB – 2 MB | 10-20 | Per Core |
| L3 | 4-16 MB | 20-40 | Shared |
| RAM | 4+ GB | 100+ | Motherboard |

This hierarchy helps the CPU get the data it needs as quickly as possible, minimizing those frustrating waits and keeping everything running smoothly.

The Emergence of L4 Cache

So, we’ve talked about L1, L2, and L3 caches, which are pretty standard these days. But sometimes, even those aren’t enough to keep up with how fast CPUs want to work. That’s where L4 cache comes in. Think of it as an extra helper, a bit further out than the main L3 cache, but still way faster than grabbing data from your main system RAM.

What is L4 Cache?

Basically, L4 cache is another layer in the memory hierarchy. It’s not as common as the other levels, but when it’s there, it acts as a buffer between the CPU’s main caches (L1, L2, L3) and the system’s main memory (RAM). It’s often built using a different type of memory, like eDRAM (embedded DRAM), which can be faster than the DRAM used for main memory, though usually slower than the SRAM used for L1, L2, and L3 caches. This makes it a good middle ground.

L4 Cache as an Extension of the Hierarchy

Adding an L4 cache extends the traditional cache structure. Instead of the CPU having to go all the way to main memory when it misses in L3, it can check the L4 cache first. This can significantly cut down on the time it takes to fetch frequently used data that doesn’t quite fit into the smaller, faster L3 cache. It’s like having a small, quick-access filing cabinet right next to your desk, in addition to the books already on your desk.

Here’s a rough idea of how the speeds and sizes might stack up:

| Level | Typical Size | Typical Latency (Cycles) | Location |
|---|---|---|---|
| L1 Cache | 32 KB | 4 | Inside each core |
| L2 Cache | 256 KB | 12 | Beside each core |
| L3 Cache | 6 MB | ~21 | Shared by cores |
| L4 Cache | 128 MB | ~58 | Separate eDRAM chip |
| RAM | 4+ GB | ~117 | Motherboard DIMMs |
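To get a feel for what those numbers mean in practice, here is a rough back-of-the-envelope sketch in Python that estimates the average cycles per memory access with and without an L4. The latencies come from the table above, but the hit rates are made-up assumptions purely for illustration, and the model is simplified: it treats each latency as the total time to get data from that level and ignores bandwidth, prefetching, and overlapping accesses.

```python
def average_latency(levels, ram_latency):
    """Expected cycles per access.

    levels: list of (latency_cycles, local_hit_rate) pairs, from L1 outward.
    Each latency is treated as the total time to get data served by that level.
    """
    expected = 0.0
    reach = 1.0  # probability that an access gets this far down the hierarchy
    for latency, hit_rate in levels:
        expected += reach * hit_rate * latency
        reach *= 1.0 - hit_rate
    return expected + reach * ram_latency  # whatever is left goes to RAM

# (latency from the table, ASSUMED hit rate for accesses that reach the level)
l1, l2, l3, l4 = (4, 0.90), (12, 0.60), (21, 0.50), (58, 0.70)
RAM_LATENCY = 117

without_l4 = average_latency([l1, l2, l3], RAM_LATENCY)
with_l4 = average_latency([l1, l2, l3, l4], RAM_LATENCY)

print(f"{without_l4:.2f}")  # 7.08
print(f"{with_l4:.2f}")     # 6.25
```

With these assumed hit rates, only 2% of accesses ever get past L3, yet sending most of them to L4 instead of RAM still trims the average by roughly 12%. A workload that misses L3 more often would see a bigger gain, and one that rarely misses L3 would see almost none.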

Crystalwell eDRAM and L4 Cache

One of the better-known implementations of L4 cache came with Intel’s "Crystalwell" technology, which used eDRAM. This was often found in CPUs with integrated Iris Pro graphics. By putting a fairly large chunk of fast eDRAM (128 MB) on the same package as the CPU, Intel could create a substantial L4 cache. This memory served purely as a cache: it was not extra addressable RAM, but a regular layer within the memory hierarchy. It was particularly helpful for integrated graphics, which otherwise have to share system RAM with the CPU. The eDRAM gave the GPU a much faster, higher-bandwidth pool of memory to work from, reducing stuttering and improving frame rates in games and other graphics-intensive tasks.

Performance Implications of L4 Cache

So, we’ve talked about what L4 cache is and how it fits into the whole CPU cache picture. But why should you actually care? Well, it all comes down to speed, or more accurately, how much faster your computer can get things done.

Impact of L4 Cache on Latency

Think of latency as the delay between asking for something and actually getting it. For your CPU, this means the time it takes to fetch data from memory. Even a few extra clock cycles of delay can really slow things down, especially in code that jumps around a lot, like when you’re chasing pointers through data structures. L1 cache is super fast, usually only a few cycles, but if the data isn’t there, the CPU has to go further out, to L2, then L3, and eventually to main memory. Each step adds more delay. L4 cache, sitting between the CPU’s main caches and main memory, acts like a faster, closer stop. Because it can hold far more data than L3, it catches many of the accesses that would otherwise go all the way to RAM, making the whole process snappier.

Bridging the Gap Between CPU and Main Memory

Main memory (RAM) is way slower than any of the on-chip caches. It’s like the difference between grabbing a tool from your workbench (L1) versus having to walk to the shed to get it (main memory). L2 and L3 caches help, but they can only hold so much. L4 cache, especially when it’s a larger, dedicated chunk of fast memory like eDRAM, can act as a much bigger intermediate step. This means the CPU doesn’t have to go all the way to the slow main memory as often. It’s like having a really well-organized toolbox right next to your workbench, reducing those long walks to the shed. This is particularly helpful for tasks that need a lot of data, where the difference between accessing L3 and main memory is a big deal.

Benefits for Specific Workloads

While L4 cache can help a lot of different programs, some really benefit more than others. Think about applications that deal with massive amounts of data or require complex calculations. Things like:

- Video editing and rendering: These tasks often involve moving and processing huge video files, where quick access to data is key.

- Scientific simulations: Running complex models, like weather forecasting or molecular dynamics, requires crunching massive datasets.

- High-end gaming: Modern games load large textures and game assets, and faster data retrieval means smoother gameplay with fewer hitches.

- Database operations: Complex queries and large database lookups can see a noticeable speed boost if the data is readily available in the L4 cache.

Basically, any workload whose working data is too large for L3 but still fits in L4 stands to benefit.

Technical Aspects of Cache Design

So, how does all this cache magic actually work under the hood? It’s not just a big pile of fast memory; there are some clever design choices that make it tick. Let’s break down a few key concepts.

Cache Lines: The Unit of Data Transfer

Think of the cache not as storing individual bytes, but rather chunks of data. These chunks are called "cache lines," and they’re the smallest unit of information that moves between the main memory and the cache. When your CPU needs a piece of data, it doesn’t just grab that single byte; it fetches the entire cache line it belongs to. This is based on the idea of spatial locality – if you need one piece of data, you’ll probably need the data right next to it soon. A typical cache line size might be 64 bytes. This means that when a cache miss occurs, 64 bytes of data are transferred from RAM, not just the single byte that was initially requested.
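A quick sketch of the arithmetic, assuming the 64-byte line size mentioned above (the helper names `line_of` and `lines_touched` are made up for this example):

```python
LINE_SIZE = 64  # bytes; the typical line size mentioned above

def line_of(address):
    """Return (line_number, offset_within_line) for a byte address."""
    return address // LINE_SIZE, address % LINE_SIZE

def lines_touched(start, length):
    """Number of distinct cache lines covered by `length` bytes starting at `start`."""
    first = start // LINE_SIZE
    last = (start + length - 1) // LINE_SIZE
    return last - first + 1

print(line_of(0x1000))  # (64, 0)
print(line_of(0x103F))  # (64, 63)  same line as 0x1000
print(line_of(0x1040))  # (65, 0)   first byte of the next line

print(lines_touched(0x1000, 64))  # 1  aligned: exactly one line
print(lines_touched(0x1020, 64))  # 2  straddles a line boundary
```

The last two calls show why alignment matters: the same 64 bytes of data cost one line fetch when aligned to a line boundary and two when they straddle one.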

Cache Conflicts and Associativity

Now, here’s where things get a bit tricky. A cache can’t just magically store data from any memory address anywhere it wants. To keep lookups fast, there are rules about where a piece of data from main memory can go in the cache. The simplest approach is a "direct-mapped" cache, where each memory address can only go into one specific spot in the cache. The problem? If two pieces of data that your program needs frequently happen to map to the same cache spot, you get a "cache conflict." This causes something called "thrashing," where the cache keeps having to swap data in and out of main memory, completely negating its speed advantage. It’s like having a very small desk where only one specific file folder can go, and if you need two different folders that are supposed to go in that one spot, you’re constantly shuffling them around.

To combat this, we have "associativity." Instead of a memory address mapping to just one cache spot, it can map to a small set of spots. A "2-way set-associative" cache means a memory address can go into one of two possible locations. A "4-way" means four locations, and so on. This gives the cache more flexibility. If a conflict arises, it can try putting the new data in a different available spot within its allowed set. This reduces those nasty thrashing scenarios. The trade-off is that more associativity means more complex circuitry to check multiple locations, which can slightly increase latency.

Here’s a quick look at how associativity affects potential storage:

| Cache Type | Locations per Memory Address | Complexity | Conflict Likelihood |
|---|---|---|---|
| Direct-Mapped | 1 | Low | High |
| 2-Way Set-Associative | 2 | Medium | Medium |
| 4-Way Set-Associative | 4 | Higher | Lower |
| Fully Associative | All | Very High | Very Low |
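To see thrashing in action, here is a small simulation sketch. It assumes a toy cache with eight 64-byte lines and least-recently-used replacement within each set. Real caches are far larger and often use other replacement policies, and the function name `count_misses` is made up for this example.

```python
LINE_SIZE = 64  # bytes per cache line

def count_misses(addresses, num_lines, ways):
    """Simulate a set-associative cache with LRU replacement; return the miss count."""
    num_sets = num_lines // ways
    sets = [[] for _ in range(num_sets)]  # each list holds tags, oldest first
    misses = 0
    for addr in addresses:
        line = addr // LINE_SIZE
        index = line % num_sets   # which set this line must go into
        tag = line // num_sets    # identifies the line within that set
        entries = sets[index]
        if tag in entries:
            entries.remove(tag)   # hit: re-appended below as most recent
        else:
            misses += 1
            if len(entries) == ways:
                entries.pop(0)    # set is full: evict the least recently used tag
        entries.append(tag)
    return misses

# Two addresses exactly one cache size apart (8 lines * 64 B = 512 B),
# accessed alternately, 10 times each.
pattern = [0, 512] * 10

print(count_misses(pattern, num_lines=8, ways=1))  # 20  direct-mapped: every access misses
print(count_misses(pattern, num_lines=8, ways=2))  # 2   2-way: both lines share one set
```

Same cache size, same access pattern, but the direct-mapped version misses on every access while the 2-way version misses only on the first touch of each line.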

Virtually-Indexed Physically-Tagged Caches

This is a bit more technical, but it’s a common optimization. When the CPU looks for data in the cache, it needs to know where to look (the index) and how to confirm it has found the right data (the tag). It can use either the virtual address (how the program sees memory) or the physical address (how the hardware sees memory) for each. Indexing with the virtual address is fast because no translation is needed first, but a purely virtual cache runs into trouble when the operating system switches between programs (context switching), since the same virtual address might point to different physical memory locations. Using physical addresses throughout avoids that, but the CPU then has to translate the virtual address before it can even start the lookup, which adds delay. A clever solution is the "virtually-indexed, physically-tagged" (VIPT) cache. Here, the cache lookup starts using the virtual address (for speed), but the final check to make sure it’s the right data uses the physical address. The translation happens in parallel with the initial cache lookup, minimizing the performance hit. It’s a way to get the best of both worlds, balancing speed and accuracy.
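One detail worth a quick sketch: virtual and physical addresses share the same low-order bits (the offset within a page), because translation only changes the page number. If all the bits used to pick a cache set fall inside that page offset, the virtual index is identical to the physical one, and starting the lookup early is always safe. The check below assumes power-of-two sizes, 64-byte lines, and 4 KB pages; the function names are invented for this example.

```python
def vipt_bits(cache_bytes, ways, line_bytes=64, page_bytes=4096):
    """Return (line_offset_bits, set_index_bits, page_offset_bits).

    Assumes every size is a power of two.
    """
    num_sets = cache_bytes // (ways * line_bytes)
    offset_bits = (line_bytes - 1).bit_length()
    index_bits = (num_sets - 1).bit_length()
    page_offset_bits = (page_bytes - 1).bit_length()
    return offset_bits, index_bits, page_offset_bits

def index_within_page_offset(cache_bytes, ways, line_bytes=64, page_bytes=4096):
    """True if the set index uses only bits that translation leaves unchanged."""
    offset_bits, index_bits, page_offset_bits = vipt_bits(
        cache_bytes, ways, line_bytes, page_bytes
    )
    return offset_bits + index_bits <= page_offset_bits

print(vipt_bits(32 * 1024, ways=8))                 # (6, 6, 12)
print(index_within_page_offset(32 * 1024, ways=8))  # True
print(index_within_page_offset(64 * 1024, ways=4))  # False
```

A 32 KB, 8-way cache needs 6 offset bits plus 6 index bits, which is exactly the 12 bits of a 4 KB page offset. This is one reason L1 caches tend to grow by adding ways rather than sets.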

Understanding these design elements helps explain why different CPUs perform differently, even with similar core counts or clock speeds. It’s all about how efficiently they can access the data they need.

The Future of CPU Caching

So, where are we headed with all this cache stuff? It’s pretty clear that the need for faster data access isn’t going away. In fact, with more cores and more complex tasks, the pressure on the memory system is only going to increase.

L4 Caches Becoming More Prevalent

We’ve seen how L4 cache, often using eDRAM, can act as a buffer between the CPU and main memory. Think of it like a really fast express lane for data that’s used frequently but doesn’t quite fit into the L3 cache. As processors get more powerful and the gap between CPU speed and RAM speed continues to be a challenge, expect to see L4 caches showing up more often, especially in high-performance computing and integrated graphics scenarios. It’s a way to squeeze more speed out of the existing architecture without a complete redesign.

Innovations in Memory Technology

Beyond just adding more cache levels, there’s a lot of work going into making memory itself faster and more efficient. We’re talking about things like stacked memory, where memory chips are piled on top of each other, or high-bandwidth memory (HBM) which uses a much wider interface to move data around. These technologies aim to increase the sheer amount of data that can be transferred at once, which is just as important as reducing the time it takes to get that first piece of data (latency).
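The "wider interface" point comes down to simple arithmetic: peak bandwidth is the bus width multiplied by the transfer rate. Here is a small sketch. The two configurations are assumed example figures rather than numbers from this article: a 64-bit channel at 3200 million transfers per second, and a 1024-bit stacked interface at 2000 million transfers per second.

```python
def peak_bandwidth_gb_s(bus_width_bits, transfers_per_second):
    """Theoretical peak bandwidth in GB/s (1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * transfers_per_second / 1e9

# ASSUMED example configurations, for illustration only
narrow = peak_bandwidth_gb_s(64, 3.2e9)    # one 64-bit channel at 3200 MT/s
wide = peak_bandwidth_gb_s(1024, 2.0e9)    # one 1024-bit stack at 2000 MT/s

print(f"{narrow:.1f} GB/s")  # 25.6 GB/s
print(f"{wide:.1f} GB/s")    # 256.0 GB/s
```

Even with a slower transfer rate per pin, the wide interface moves ten times as much data per second. These are theoretical peaks; sustained bandwidth in practice is lower.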

The Evolving Memory Hierarchy

Ultimately, the whole memory hierarchy is always changing. It’s not just about CPU caches anymore. We’re seeing tighter integration between different components, like putting the memory controller directly on the CPU die. The goal is to make the entire path from data storage to the processing cores as smooth and quick as possible. It’s a constant balancing act between cost, power, size, and speed, and the way we design these systems will keep evolving to meet the demands of future computing. It’s kind of like trying to build a super-efficient highway system for data, where designers are always looking for better ways to manage traffic flow.

So, What’s the Takeaway?

Alright, so we’ve talked a lot about these different cache levels, from the super-fast L1 right up to the bigger L3, and even touched on L4. It might seem like a lot of detail, but it all boils down to one thing: speed. The closer data is to the CPU cores, the faster it can be accessed. Think of it like having your most-used tools right on your workbench (L1), a few more in a nearby toolbox (L2), and the rest in a bigger cabinet across the room (L3). While L4 is a bit newer and not on every chip, it’s another step in trying to keep data close. Ultimately, how well your CPU handles tasks, especially when dealing with lots of data, really depends on how efficiently it can grab what it needs from these caches. It’s a big reason why newer processors feel so much snappier, even if the clock speeds haven’t gone through the roof like they used to.

Frequently Asked Questions

What is a CPU cache?

Think of a CPU cache as a super-fast scratchpad for your computer’s brain, the CPU. It holds tiny bits of information that the CPU uses a lot, so it doesn’t have to go all the way to the main memory (RAM) every single time. This makes your computer run much quicker.

Why are there different levels of cache like L1, L2, and L3?

It’s like having different places to store things. L1 is the smallest and fastest, right next to the CPU’s core, like having important notes right on your desk. L2 is a bit bigger and slower, like a drawer next to your desk. L3 is even bigger and a bit slower still, like a filing cabinet nearby. The CPU checks L1 first, then L2, then L3, before going to the main memory, trying to find what it needs as quickly as possible.

What is L4 cache and how is it different?

L4 cache is like an extra, even bigger storage area. It’s not always present, but when it is, it’s usually a separate chip with a lot more space than L1, L2, or L3. It acts as a buffer between the CPU and the main memory, helping to speed things up even more, especially when the CPU needs to access a lot of data.

How does cache size affect performance?

A bigger cache can hold more data, which means the CPU is more likely to find what it needs without waiting for the slower main memory. However, making caches bigger also makes them slower and take up more space on the chip. So, designers have to find a balance – a ‘sweet spot’ – to get the best performance.

What is a ‘memory wall’?

The ‘memory wall’ is a term used to describe the growing speed difference between how fast CPUs can process information and how fast they can get that information from the main memory (RAM). Caches are the main way we try to overcome this problem, by keeping frequently used data closer to the CPU.

Why is cache important for everyday computer use?

Even for simple tasks like browsing the web or typing a document, your CPU is constantly fetching and using small pieces of data. Caches make these operations much faster. Without them, your computer would feel very sluggish because the CPU would spend most of its time just waiting for data to arrive from the slower main memory.