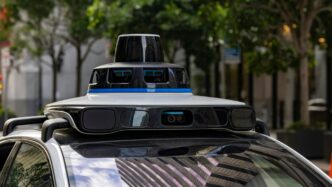

The Indispensable Role of Cameras in Autonomous Vehicle Perception

When you think about how we humans get around, our eyes are pretty much the main event, right? We see things, we understand them, and we react. Self-driving cars are trying to do the same thing, and cameras are a big part of that. They’re like the car’s eyes, helping it figure out what’s going on around it.

Mimicking Human Vision for Superior Object Recognition

Cameras are fantastic because they see the world a lot like we do. They capture detailed images, which means they can spot all sorts of things – other cars, pedestrians, cyclists, you name it. This ability to recognize objects visually is a huge advantage. Unlike sensors that mainly report shapes or distances, cameras capture the color and texture that recognition software needs to identify what something is. This visual recognition is key for making smart driving decisions.

Interpreting Road Markings and Signage

Beyond just spotting other vehicles, cameras are also really good at reading the road itself. Think about lane lines, stop signs, speed limit signs, and traffic lights. Cameras can see these details and understand what they mean. This is super important for a car to follow the rules of the road and stay in its lane. It’s like the car can read the road signs just like a human driver would.

Cost-Effectiveness and High Resolution

Another big plus for cameras is that they’re generally more affordable than some other advanced sensors, like LiDAR. Plus, they offer really high-resolution images. This means they can pick up on fine details that might be missed otherwise. So, you get a lot of visual information without breaking the bank, which is a win-win for making self-driving tech more accessible.

Leveraging Stereo Vision for Enhanced Depth Perception

Think about how you see the world. You have two eyes, right? They look at things from slightly different angles, and your brain uses that difference to figure out how far away stuff is. Stereo vision for self-driving cars works on pretty much the same idea. It uses two cameras, spaced a bit apart, to get two different views of the same scene. This setup is way cheaper than some other fancy sensors, and it’s really good at giving the car a sense of depth.

Understanding Stereo Vision Principles

At its core, stereo vision is about using two cameras to mimic human eyesight. These cameras are positioned side-by-side, with a known distance between them, often called the ‘baseline’. When they both look at the same object or scene, each camera captures a slightly different image. The magic happens when the car’s computer compares these two images. It looks for matching points or features in both pictures. Because the cameras are in different spots, these matching points will appear in slightly different locations in each image. The amount of difference, or shift, between these points is what tells the system how far away the object is. The bigger the shift, the closer the object. It’s a bit like how your finger looks like it jumps when you close one eye and then the other.

Calculating Object Distances with Stereo Cameras

So, how does the car actually figure out the distance? It’s all about geometry, specifically something called triangulation. Imagine drawing lines from each camera’s lens to a specific point on an object. Because the cameras are separated, these lines form a triangle. By knowing the distance between the cameras (the baseline) and measuring the angle or the shift of that point in the two images, the system can calculate the length of the other sides of the triangle, which directly gives it the distance to the object. This process can be done for many points in the scene, creating a ‘depth map’ that shows how far away everything is.

Here’s a simplified look at the process:

- Capture Images: Two cameras simultaneously take pictures of the same scene.

- Find Correspondences: The system identifies the same physical point in both images.

- Measure Disparity: It calculates the horizontal difference (disparity) in the pixel location of that point between the left and right images.

- Triangulate: Using the baseline distance and the disparity, it calculates the 3D distance to the point.
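The last step boils down to one line of arithmetic for a rectified camera pair: depth equals focal length times baseline, divided by disparity. Here is a minimal sketch of that relationship. The function name and the numbers (a 1000-pixel focal length and a 0.5 m baseline) are illustrative assumptions, not values from any particular vehicle.

```python
import math


def depth_from_disparity(disparity_px, focal_length_px, baseline_m):
    """Depth in metres for a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0:
        # No measurable shift: treat the point as infinitely far away.
        return math.inf
    return focal_length_px * baseline_m / disparity_px


# Assumed, illustrative camera parameters.
FOCAL_LENGTH_PX = 1000.0
BASELINE_M = 0.5

for disparity in (100.0, 50.0, 10.0):
    depth = depth_from_disparity(disparity, FOCAL_LENGTH_PX, BASELINE_M)
    print(f"disparity {disparity:5.1f} px -> depth {depth:5.1f} m")
```

With these numbers a 100-pixel shift corresponds to 5 m and a 10-pixel shift to 50 m, which matches the rule of thumb that a bigger shift means a closer object.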

The Importance of Disparity Mapping

Disparity mapping is the result of that calculation process. It’s essentially a map where each pixel’s value is the disparity measured at that point in the scene, which converts directly into distance: the larger the disparity, the closer the point. A dense disparity map, which is what self-driving cars aim for, provides this information for almost every pixel in the image. This is super useful because it doesn’t just tell the car that there’s an object; it tells it how far away that object is. This detailed depth information is critical for tasks like:

- Obstacle Avoidance: Knowing the precise distance to a pedestrian or another car allows for safer braking or steering.

- Lane Keeping: Understanding the distance to lane markers helps the car stay centered.

- Path Planning: Mapping out the 3D space around the vehicle helps in deciding the safest route forward.
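To make the link between a disparity map and actual distances concrete, the sketch below converts a tiny, hand-made disparity map into depths and picks out the closest point, the kind of number an obstacle-avoidance routine would care about. The map values, the camera parameters, and the function names are all made up for illustration.

```python
def disparity_map_to_depth_map(disparity_map, focal_length_px, baseline_m):
    """Convert each disparity (pixels) to depth (metres); None marks no match."""
    depth_map = []
    for row in disparity_map:
        depth_row = []
        for d in row:
            if d > 0:
                depth_row.append(focal_length_px * baseline_m / d)
            else:
                depth_row.append(None)  # no valid stereo match at this pixel
        depth_map.append(depth_row)
    return depth_map


def nearest_depth(depth_map):
    """Smallest valid depth in the map, or None if no pixel had a match."""
    valid = [z for row in depth_map for z in row if z is not None]
    return min(valid) if valid else None


# Hypothetical 3x4 disparity map: the block of 40s is a nearby object.
disparity_px = [
    [0.0, 8.0, 8.0, 8.0],
    [0.0, 40.0, 40.0, 8.0],
    [16.0, 40.0, 40.0, 16.0],
]

depth_m = disparity_map_to_depth_map(disparity_px, focal_length_px=800.0, baseline_m=0.5)
print("nearest point:", nearest_depth(depth_m), "m")
```

A real system would work on full-resolution arrays and filter out noisy matches first, but the conversion itself is no more than this.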

Advanced Capabilities of Camera Systems for Self-Driving Cars

3D Geometry Reconstruction from Images

Cameras aren’t just for taking pretty pictures; they can actually help a self-driving car build a 3D model of its surroundings. By using techniques like stereo vision, where two cameras work together like our own eyes, the car can figure out how far away things are. This isn’t just about knowing if a car is close or far; it’s about understanding the shape and form of objects. Think of it like putting together a puzzle, but instead of cardboard pieces, it’s points of light and color that the car uses to create a digital replica of the world. This 3D map helps the car understand the environment much better than just seeing flat images.

Obstacle Detection and Localization

Once the car has a good grasp of the 3D world, it can get really good at spotting things in its path. This includes not just other cars and trucks, but also pedestrians, cyclists, and even smaller things like traffic cones. The system can pinpoint exactly where these obstacles are in relation to the car. This is super important for making safe decisions, like when to brake or change lanes. It’s like having a super-powered lookout that never blinks.

- Identifying various object types: Cars, trucks, pedestrians, cyclists, animals.

- Determining object position: Precise location in 3D space.

- Estimating object size and shape: Gauging how big the object is and what outline it has.

- Tracking object movement: Predicting where an object will go next.
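Pinning down an object's position in 3D can be sketched with the standard pinhole camera model: given a pixel and its depth, you can work backwards to a point in space. The intrinsic values below (focal lengths `fx`, `fy` and image center `cx`, `cy`) are assumed for illustration, and the function name is hypothetical.

```python
def pixel_to_camera_xyz(u, v, depth_m, fx, fy, cx, cy):
    """Back-project pixel (u, v) with known depth into camera coordinates.

    Returns (x, y, z) in metres: x to the right, y downward, z forward.
    """
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return (x, y, depth_m)


# Assumed intrinsics for a 1280x720 image.
FX, FY = 1000.0, 1000.0
CX, CY = 640.0, 360.0

# A detection centered 200 pixels right of the image center, 10 m away.
position = pixel_to_camera_xyz(840.0, 360.0, 10.0, FX, FY, CX, CY)
print("object position (x, y, z) in metres:", position)
```

Here the object ends up 2 m to the right of the camera and 10 m ahead. Feeding positions like this from consecutive frames into a tracker is what makes movement prediction possible.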

Visual Navigation and Mapping

Cameras play a big part in helping the car know where it is and where it’s going. By looking at landmarks, road signs, and lane markings, the car can create maps of the area or compare what it sees to pre-existing maps. This visual information helps the car navigate through complex city streets or even find its way in parking lots. It’s like the car has a built-in GPS that uses what it sees to guide itself. This visual mapping is key for tasks like automated parking or following a specific route without getting lost.

Addressing Challenges in Camera-Based Perception

Even though cameras are super useful for self-driving cars, they aren’t perfect. They run into some tricky situations that can make it hard for the car to ‘see’ properly. Think about driving on a sunny day – glare off wet roads or shiny car bumpers can really mess with what the camera picks up. It’s like trying to read a book with a bright light shining right on the page. These visual ‘blind spots’ are a major hurdle.

Handling Reflective and Transparent Surfaces

Shiny things like puddles, chrome trim, or even tinted windows can bounce light around in ways that confuse camera systems. The car might see a reflection of a car that isn’t actually there, or it might not see a pedestrian walking behind a glass door. It’s a tough problem because the camera is trying to interpret light, and these surfaces bend and scatter light in unpredictable ways. Developers are working on algorithms that can learn to distinguish between real objects and their reflections, but it’s a complex task.

Overcoming Occlusions and Thin Structures

Sometimes, objects get hidden behind other things – that’s an occlusion. A pedestrian stepping out from behind a parked van, for example. Cameras can also struggle with really thin objects like poles, wires, or even lane markers that are partially worn away. If an object is only partially visible, or if it’s very narrow, the camera might miss it entirely or misinterpret its shape and size. This is where combining camera data with other sensors, like radar, becomes really important. Radar can often

The Synergy of Cameras and Other Sensors

So, cameras are pretty neat for seeing things like humans do, right? They’re good at spotting signs and lane lines. But, let’s be real, relying on just one type of sensor for a self-driving car is like trying to cook a fancy meal with only a spoon. You need a whole set of tools. That’s where other sensors come into play, and how they work together with cameras makes the whole system way more robust.

Complementing LiDAR and Radar Data

Cameras are great for recognizing what something is – is that a pedestrian, a bicycle, or just a weirdly shaped bush? But figuring out exactly how far away that pedestrian is, or their precise speed, can be tricky for cameras alone, especially in bad weather or low light. This is where LiDAR and radar step in. LiDAR uses lasers to create a detailed 3D map of the surroundings, giving super accurate distance measurements. Radar, on the other hand, is fantastic at seeing through fog, rain, and snow, and it’s excellent at measuring speed. By combining camera data with LiDAR and radar, the car gets a much clearer, more reliable picture of its environment. Think of it like this:

- Cameras: Identify objects (what is it?).

- LiDAR: Measure distances and shapes precisely (how far away and what size is it?).

- Radar: Detect objects and their speed, even in bad conditions (is it moving, and how fast?).

Achieving Comprehensive Environmental Awareness

When you put all these sensors together, the car isn’t just seeing; it’s truly aware. It can build a detailed, multi-layered understanding of everything happening around it. This means it can:

- Confirm detections: If a camera sees something that looks like a car, and radar confirms a moving object at that location with a certain speed, the system can be much more confident it’s actually a car. This reduces false positives.

- Fill in the gaps: If a camera’s view is blocked by a truck, radar might still pick up a car approaching from behind it. LiDAR can help map the shape of the truck, giving the car context.

- Understand complex scenes: Imagine a busy intersection. Cameras see the traffic lights, signs, and pedestrians. Radar tracks approaching vehicles. LiDAR maps the geometry of the intersection and the precise location of everything. This combined input allows the car to make much smarter decisions.
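The "confirm detections" idea can be sketched as a toy matching rule: if a radar return lies in roughly the same direction as a camera detection, raise the confidence and borrow the radar's range and speed. Everything here is an assumption made for illustration, including the class names, the 2-degree gate, and the fixed confidence boost. Production systems typically rely on probabilistic tracking filters rather than a rule this simple.

```python
from dataclasses import dataclass


@dataclass
class CameraDetection:  # hypothetical structure
    label: str
    bearing_deg: float
    confidence: float


@dataclass
class RadarReturn:  # hypothetical structure
    bearing_deg: float
    range_m: float
    speed_mps: float


def fuse(camera_dets, radar_returns, max_bearing_gap_deg=2.0, boost=0.25):
    """Attach the closest-bearing radar return to each camera detection."""
    fused = []
    for det in camera_dets:
        best = min(
            radar_returns,
            key=lambda r: abs(r.bearing_deg - det.bearing_deg),
            default=None,
        )
        confirmed = (
            best is not None
            and abs(best.bearing_deg - det.bearing_deg) <= max_bearing_gap_deg
        )
        fused.append({
            "label": det.label,
            "confirmed": confirmed,
            # Radar agreement raises confidence, capped at 1.0.
            "confidence": min(1.0, det.confidence + boost) if confirmed else det.confidence,
            "range_m": best.range_m if confirmed else None,
            "speed_mps": best.speed_mps if confirmed else None,
        })
    return fused


camera = [CameraDetection("car", 5.0, 0.5), CameraDetection("pedestrian", -20.0, 0.5)]
radar = [RadarReturn(5.5, 32.0, -3.0)]

for obj in fuse(camera, radar):
    print(obj)
```

In this made-up scene the car is confirmed and picks up a range and speed from radar, while the pedestrian stays a camera-only detection at its original confidence.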

Enhancing Safety in Diverse Conditions

No single sensor works perfectly all the time. Cameras struggle with glare from the sun, dark tunnels, or heavy rain. LiDAR can have issues with certain surfaces that absorb laser light, and radar can sometimes have trouble distinguishing between different objects that are close together. But when they work as a team, the weaknesses of one sensor are covered by the strengths of another. The redundancy built by using multiple sensor types means that if one sensor is having a bad day, the others can pick up the slack. This layered approach is absolutely key to making self-driving cars safe and reliable across a wide range of weather and lighting conditions. It’s all about building a system that can handle pretty much anything the road throws at it.

Technical Foundations of Stereo Camera Systems

The Necessity of Camera Calibration

Before we can even think about figuring out distances, we need to get our cameras talking to each other properly. This is where calibration comes in. Think of it like tuning two instruments before a duet – they need to be in sync. Calibration is all about understanding the specific quirks of each camera and how they relate to each other. We’re talking about figuring out things like the focal length, the lens distortion, and crucially, the exact position and orientation of one camera relative to the other. Without this, any distance measurements we try to make will be way off. It’s a bit like trying to measure something with a ruler that’s bent – you’re just not going to get an accurate result.
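To give a feel for one of the quirks calibration has to pin down, here is a minimal sketch of a two-coefficient radial lens distortion model. The coefficients `k1` and `k2` are illustrative assumptions, not measurements from a real lens, and real calibration pipelines estimate more parameters than this.

```python
def apply_radial_distortion(x, y, k1, k2):
    """Map ideal normalized image coordinates to radially distorted ones.

    (x, y) are normalized coordinates: the pixel offset from the image
    center divided by the focal length.
    """
    r2 = x * x + y * y
    factor = 1.0 + k1 * r2 + k2 * r2 * r2
    return x * factor, y * factor


# Hypothetical coefficients for a lens with mild barrel distortion.
K1, K2 = -0.2, 0.05

for point in [(0.0, 0.0), (0.1, 0.0), (0.5, 0.0)]:
    print(point, "->", apply_radial_distortion(point[0], point[1], K1, K2))
```

The image center stays put while points farther out shift more and more. Left uncorrected, that shift would be mistaken for disparity, which is one reason uncalibrated stereo distances come out wrong.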

Understanding Epipolar Geometry

Once the cameras are calibrated, we can start talking about epipolar geometry. This sounds fancy, but it’s really just a way to describe the relationship between two cameras looking at the same scene. Imagine you have a point in the real world. When you look at it with your left eye, it appears in a certain spot in your vision. When you look at it with your right eye, it appears in a slightly different spot. Epipolar geometry helps us understand this relationship mathematically. It defines a plane that contains the 3D point and the optical centers of both cameras. The intersection of this plane with the image planes of the cameras gives us lines, called epipolar lines. Any point in the left image must have its corresponding point in the right image lying on the matching epipolar line. In practice, the images are usually rectified so that these lines become horizontal image rows, which is why disparity is measured as a horizontal shift. This significantly narrows down where we need to look for matching points, making the whole process much more efficient.
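Here is a rough sketch of what searching along an epipolar line looks like once the images are rectified, so that the line is simply the same pixel row in the other image. It compares a small window of pixels using the sum of absolute differences (SAD), one common matching cost among several. The pixel rows, the window size, and the function name are invented for illustration.

```python
def find_disparity(left_row, right_row, x_left, half_window=1, max_disparity=8):
    """Search one rectified row for the best match to the window at x_left.

    Returns the disparity (in pixels) with the lowest SAD cost.
    """
    if x_left - half_window < 0 or x_left + half_window >= len(left_row):
        raise ValueError("window around x_left falls outside the row")

    offsets = range(-half_window, half_window + 1)
    best_disparity, best_cost = None, float("inf")
    for d in range(max_disparity + 1):
        x_right = x_left - d  # the match sits further left in the right image
        if x_right - half_window < 0:
            break  # window would run off the edge of the image
        cost = sum(abs(left_row[x_left + k] - right_row[x_right + k]) for k in offsets)
        if cost < best_cost:
            best_cost, best_disparity = cost, d
    return best_disparity


# Hypothetical intensity rows: a bright feature at x=6 (left) and x=3 (right).
left = [10, 10, 10, 10, 10, 50, 90, 50, 10, 10]
right = [10, 10, 50, 90, 50, 10, 10, 10, 10, 10]

print("disparity at x=6:", find_disparity(left, right, x_left=6))
```

Because the search is confined to a single row, each pixel needs only a handful of comparisons instead of a scan over the whole image.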

The Triangulation Principle for Depth Estimation

So, we’ve calibrated our cameras, and we understand epipolar geometry. Now, how do we actually get the distance? This is where triangulation comes in, and it’s the core idea behind stereo vision. Remember those epipolar lines? If we find a specific feature (like a corner or a distinct texture) in the left image, and then find its corresponding feature in the right image along the epipolar line, we have two rays of light originating from that 3D point and passing through the two camera centers. Because we know the exact distance between the cameras (the baseline) and the angles of these rays, we can use basic trigonometry to calculate where these rays intersect in 3D space. This intersection point tells us the actual 3D location of the feature, and thus, its distance from the car. It’s like two people standing a known distance apart and pointing at the same object: where their sightlines cross is where the object sits.
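The trigonometry can be sketched in a simplified top-down view, assuming two parallel cameras with the left one at the origin and the right one a baseline away along the x-axis. Each ray's angle is measured from the camera's forward direction, positive toward the right. The setup, the function name, and the numbers are assumptions chosen to keep the example small.

```python
import math


def triangulate_top_down(baseline_m, angle_left_rad, angle_right_rad):
    """Intersect two viewing rays from parallel cameras (top-down view).

    Returns (x, z): sideways offset and forward distance from the left camera.
    """
    spread = math.tan(angle_left_rad) - math.tan(angle_right_rad)
    if spread <= 0:
        raise ValueError("rays do not converge in front of the cameras")
    z = baseline_m / spread
    x = z * math.tan(angle_left_rad)
    return x, z


# Assumed scene: a point 1 m to the right and 10 m ahead, cameras 0.5 m apart.
BASELINE_M = 0.5
angle_left = math.atan2(1.0, 10.0)               # ray seen from the left camera
angle_right = math.atan2(1.0 - BASELINE_M, 10.0)  # ray seen from the right camera

x, z = triangulate_top_down(BASELINE_M, angle_left, angle_right)
print(f"recovered point: x = {x:.3f} m, z = {z:.3f} m")
```

Since the tangent of each angle is the pixel offset from the image center divided by the focal length, the difference of the two tangents is the disparity divided by the focal length. That is how this angle-based picture reduces to depth = focal length × baseline / disparity.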

Wrapping Up: Cameras and the Road Ahead

So, when you think about self-driving cars, it’s easy to get caught up in all the fancy tech. But really, the cameras are doing a lot of the heavy lifting when it comes to seeing the world. They’re like the car’s eyes, spotting everything from a pedestrian stepping out to a traffic light changing. While other sensors have their place, cameras are often the most budget-friendly way to get a really detailed picture, much like how we humans see. They help the car understand distances and identify objects, which is super important for making safe decisions on the road. As this technology keeps getting better, cameras will continue to be a major player in making self-driving cars a reality we can all trust.