Understanding The Core Meaning Of Robotics

When we talk about robotics, it’s easy to picture those futuristic robots from movies, right? The ones that do everything from complex surgery to just tidying up the house. But the reality of robotics today is a bit more grounded, and honestly, a lot more interesting than just sci-fi dreams. It’s about building machines that can interact with the physical world, and that’s way harder than it sounds.

Defining Robotics: Beyond The Sci-Fi

So, what exactly is robotics? At its heart, it’s the field that deals with the design, construction, operation, and application of robots. But it’s not just about the physical machine. It’s also about the intelligence that drives it – how it senses its surroundings, makes decisions, and acts upon them. Think about it: even a simple robot arm in a factory needs to know where to grab a part, how to pick it up without dropping it, and where to place it next. That’s a lot of coordination.

Moravec’s Paradox And Embodied Intelligence

There’s this funny thing in robotics called Moravec’s Paradox. It basically says that things we humans find easy, like picking up a cup or walking around, are incredibly difficult for robots to master. Conversely, things we find hard, like solving complex math problems or playing chess, are often easier for computers and robots to learn. This is tied to the idea of ‘embodied intelligence’ – the notion that true intelligence develops through physical interaction with the world. Our brains and bodies have evolved over millions of years to handle sensory input and motor control, something that’s tough to replicate in code and circuits. It’s the difference between knowing the notes of a song and actually playing it with feeling.

The Evolution Of Robotics Development

Robotics development has been chugging along for decades. While artificial intelligence, especially things like large language models, has seen explosive growth, robotics has progressed more steadily. Early efforts focused on automating repetitive tasks in controlled environments, like assembly lines. Now, we’re pushing towards robots that can handle unpredictable situations, work alongside people, and understand more nuanced instructions. This evolution is driven by advances in sensors, computing power, and algorithms, but also by a growing understanding that robots need to be more adaptable and aware of their surroundings to be truly useful in our messy, real world.

Key Components Shaping Robotics

So, what actually makes a robot tick? It’s not just one thing, but a combination of parts working together. Think of it like building a complex machine; you need the right pieces in the right places. We’re talking about the physical stuff, the way it senses the world, and how it figures out what to do.

Hardware Architecture and Design

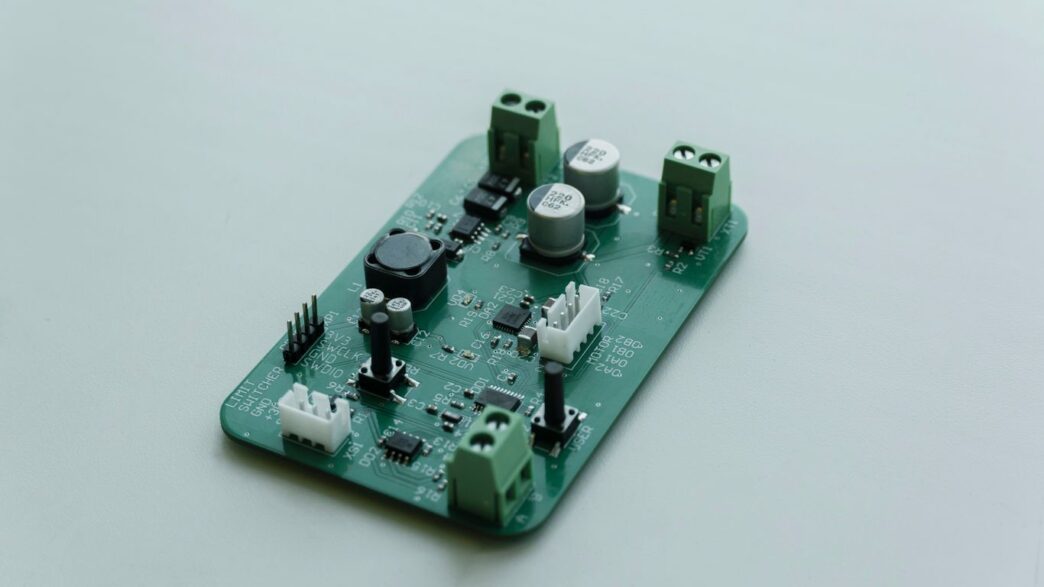

This is the robot’s skeleton and brain. For the ‘brain’ part, robots often need a mix of processors. You’ve got your standard CPUs for general tasks, but then there are GPUs, which are super fast at processing lots of data in parallel – think processing video feeds. And sometimes, you’ll find DSPs (digital signal processors), which are specialized for fast, real-time number crunching with low, predictable latency, like filtering sensor data as it arrives. Getting these to work together smoothly is key to making the robot perform well without using too much power.

When robots are built for specific jobs, like flying drones or self-driving cars, they get special hardware. Drones need to be light, and they carry GPS for positioning and gyroscopes to stay stable. Self-driving cars pack in LiDAR, radar, and cameras, plus powerful computers to make sense of all that data and react fast.

And then there are the ‘muscles’ – the actuators and motors. These turn energy, usually electrical, into movement. For precise jobs, you’ll see servomotors, which use position feedback so they can be controlled very accurately. If a robot needs to push or lift something heavy, it might use hydraulic or pneumatic systems, which move things with pressurized fluid or air, though these can be more complicated to set up.
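
For a feel of what "controlled very accurately" involves, here is a minimal sketch of closed-loop position control with a PID (proportional-integral-derivative) controller, a common textbook choice for servo loops. The toy motor model (position changes at exactly the commanded rate), the gains, and the ±1 saturation limit are illustrative assumptions, not values from any real servo.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PID:
    kp: float
    ki: float
    kd: float
    integral: float = 0.0
    prev_error: Optional[float] = None

    def update(self, target: float, measured: float, dt: float) -> float:
        """Return a control command from the current position error."""
        error = target - measured
        self.integral += error * dt  # accumulated error removes steady-state offset
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def simulate(target: float = 1.0, steps: int = 2000, dt: float = 0.01) -> float:
    """Drive a toy motor toward `target` and return its final position."""
    pid = PID(kp=2.0, ki=0.5, kd=0.1)  # hypothetical gains
    position = 0.0
    for _ in range(steps):
        command = pid.update(target, position, dt)
        command = max(-1.0, min(1.0, command))  # the actuator can't exceed its limits
        position += command * dt  # toy model: position rate equals the command
    return position
```

Raising `kp` speeds up the response but invites overshoot; the integral term cleans up leftover error and the derivative term damps oscillation. Real servo drives also deal with integrator windup, sensor noise, and motor dynamics that this toy ignores.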

Sensors For Environmental Awareness

Just like we use our eyes and ears, robots need sensors to understand what’s around them. These are the robot’s senses. They can detect all sorts of things:

- Vision: Cameras that see like our eyes.

- Proximity: Sensors that tell the robot if something is close by.

- LiDAR/Radar: These use light or radio waves to measure distances and map surroundings, even in the dark.

- Inertial Measurement Units (IMUs): These measure acceleration and rotation rate, which are used to track the robot’s orientation and movement.

- Tactile Sensors: These give the robot a sense of ‘touch’.

The quality and type of sensors directly impact how well a robot can interact with its environment. Without good sensor data, a robot is essentially blind and deaf to the world.
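
The IMU bullet hides a classic sensor-fusion problem: integrating the gyroscope’s rotation rate gives a smooth angle that slowly drifts, while the accelerometer’s view of gravity gives a noisy but drift-free tilt. One simple, widely taught fix is a complementary filter, sketched below for a single axis. The blend factor `alpha = 0.98`, the axis/sign convention, and the sample data are assumptions for illustration; real IMUs differ in conventions and usually call for more careful fusion.

```python
import math


def accel_pitch(ax: float, az: float) -> float:
    """Tilt (radians) implied by the gravity vector; only valid when the robot isn't accelerating.
    The atan2(ax, az) axis convention is an assumption."""
    return math.atan2(ax, az)


def complementary_filter(samples, dt: float, alpha: float = 0.98) -> float:
    """samples: iterable of (gyro_rate_rad_s, ax, az).
    Trust the integrated gyro in the short term and the accelerometer in the long term."""
    angle = 0.0
    for gyro_rate, ax, az in samples:
        gyro_angle = angle + gyro_rate * dt  # integrate rotation rate
        angle = alpha * gyro_angle + (1.0 - alpha) * accel_pitch(ax, az)
    return angle


# Made-up data: robot sitting still at a 0.2 rad tilt, gyro with a constant 0.01 rad/s bias.
tilt = 0.2
samples = [(0.01, math.sin(tilt), math.cos(tilt))] * 1000
estimate = complementary_filter(samples, dt=0.01)
```

Integrating that biased gyro alone would drift further every second, while the blended estimate settles at about 0.205 rad, within roughly 0.005 rad of the true tilt.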

Robot Perception: Navigation and Deep Learning

Okay, so the robot has sensors, but what does it do with all that data? That’s where perception comes in. It’s about making sense of the sensor inputs to build a picture of the world.

- Localization: Figuring out exactly where the robot is in its environment.

- Mapping: Creating a map of the surroundings, or using a pre-existing map.

- Object Recognition: Identifying different objects in its view – is that a wall, a person, or a table?
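
Localization can be illustrated with a 1D histogram (discrete Bayes) filter, one classic approach among several (particle filters, Kalman filters, and full SLAM systems are common in practice). The robot keeps a probability for each cell of a corridor, sharpens it when a sensor reading matches the map, and blurs it when it moves. The corridor map, sensor accuracy, motion noise, and function names are all hypothetical.

```python
def normalize(belief):
    total = sum(belief)
    return [b / total for b in belief]


def sense(belief, world, measurement, p_hit=0.8, p_miss=0.2):
    """Measurement update: weight each cell by how well the map agrees with the reading."""
    return normalize([
        b * (p_hit if world[i] == measurement else p_miss)
        for i, b in enumerate(belief)
    ])


def move(belief, step, p_exact=0.8, p_slip=0.1):
    """Motion update on a cyclic corridor: usually moves `step` cells,
    sometimes undershoots or overshoots by one."""
    n = len(belief)
    new = [0.0] * n
    for i, b in enumerate(belief):
        new[(i + step) % n] += b * p_exact
        new[(i + step - 1) % n] += b * p_slip
        new[(i + step + 1) % n] += b * p_slip
    return new


world = ["door", "door", "wall", "wall", "wall"]  # hypothetical map
belief = [1.0 / len(world)] * len(world)  # start completely unsure
belief = sense(belief, world, "door")
belief = move(belief, 1)
belief = sense(belief, world, "door")
best = max(range(len(belief)), key=lambda i: belief[i])
```

After "door, move one cell, door", the most likely cell is index 1, the only place on this map where that sequence fits.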

Deep learning, a type of AI, has really changed the game here. It allows robots to learn from vast amounts of data, getting better at recognizing patterns and making decisions. This is how robots can start to understand complex scenes and react more intelligently, moving beyond simple programmed responses. It’s a big step towards robots that can handle unpredictable situations more gracefully.

The Robotics Project Lifecycle

Building a robot isn’t just about slapping some parts together and hoping for the best. It’s a whole process, kind of like building a house or even just cooking a complicated meal. You’ve got to plan, get your materials, put it all together, and then make sure it actually works.

System Architecture and Component Selection

First off, you need a blueprint. This is where you figure out the big picture: what kind of robot are we building? What’s it supposed to do? This involves sketching out the overall structure, deciding on the main brains (the computer), how everything will talk to each other, and how data will flow. Then comes picking the actual parts. You can’t just grab any old sensor or motor; you need ones that fit the job, don’t use too much power, and won’t break the bank. It’s a balancing act, really.
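
A lot of that balancing act starts as plain arithmetic: add up each part’s power draw and cost, then check the totals against the battery and the budget. The sketch below does just that; every component name, wattage, price, and the battery capacity are made-up placeholders, not recommendations.

```python
from dataclasses import dataclass


@dataclass
class Component:
    name: str
    power_w: float   # average power draw in watts (hypothetical figure)
    cost_usd: float  # unit price (hypothetical figure)


def check_budget(parts, battery_wh, min_runtime_h, max_cost_usd):
    """Estimate runtime from average draw and compare against runtime and cost targets."""
    total_power = sum(p.power_w for p in parts)
    total_cost = sum(p.cost_usd for p in parts)
    runtime_h = battery_wh / total_power
    return {
        "total_power_w": total_power,
        "total_cost_usd": total_cost,
        "runtime_h": runtime_h,
        "ok": runtime_h >= min_runtime_h and total_cost <= max_cost_usd,
    }


parts = [
    Component("compute board", 15.0, 250.0),
    Component("camera", 2.5, 80.0),
    Component("drive motors (avg)", 30.0, 120.0),
    Component("lidar", 8.0, 300.0),
]
report = check_budget(parts, battery_wh=250.0, min_runtime_h=4.0, max_cost_usd=1000.0)
```

Here the parts draw 55.5 W in total and cost $750, giving roughly 4.5 hours of runtime. This back-of-the-envelope check ignores peak current, power-conversion losses, and battery aging, which real designs add margin for.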

3D Modeling and Hardware Assembly

Once you have a rough idea of the parts, you’ll probably want to model the robot in 3D. Think of it like a digital clay model. This helps you see how everything fits together, spot potential clashes, and even tweak the design before you start cutting metal or plastic. After the digital design is locked in, it’s time for the hands-on part: putting the hardware together. This means bolting things up, wiring up the electronics, and generally making the physical robot take shape.

Perception, Planning, and Control Development

This is where the robot starts to get smart. You’re writing the software that lets the robot see and understand its surroundings (perception), figure out what it needs to do next (planning), and actually move its parts to do it (control). This involves a lot of coding for things like making sure the robot knows where it is, spotting objects, and plotting a path without bumping into anything. It’s a complex dance between sensing the world and acting upon it.
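
Plotting a path without bumping into anything is often framed as a search over an occupancy grid. Below is a minimal A* sketch on a 4-connected grid with a Manhattan-distance heuristic; the grid encoding (`1` = obstacle) and the function name are assumptions for illustration, and real planners also account for the robot’s footprint, movement costs, and path smoothing.

```python
import heapq


def astar(grid, start, goal):
    """Return a list of (row, col) cells from start to goal, or None if unreachable."""
    rows, cols = len(grid), len(grid[0])

    def h(cell):  # Manhattan distance never overestimates on a 4-connected unit-cost grid
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]  # entries are (f = g + h, g, cell)
    came_from = {start: None}
    g_cost = {start: 0}
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell == goal:  # walk the parent links back to the start
            path = []
            while cell is not None:
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if g > g_cost[cell]:
            continue  # stale entry: a cheaper route to this cell was already found
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                ng = g + 1
                if ng < g_cost.get((nr, nc), float("inf")):
                    g_cost[(nr, nc)] = ng
                    came_from[(nr, nc)] = cell
                    heapq.heappush(open_heap, (ng + h((nr, nc)), ng, (nr, nc)))
    return None


grid = [
    [0, 0, 0, 0],
    [1, 1, 1, 0],  # a wall with a single gap on the right
    [0, 0, 0, 0],
]
path = astar(grid, (0, 0), (2, 0))  # detours through the gap at (1, 3): 9 cells, 8 moves
```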

Simulation, Testing, And Deployment

So, you’ve built your robot, or at least designed it. Now what? You can’t just throw it out into the world and hope for the best. That’s where simulation, testing, and deployment come in. It’s like test-driving a car before you buy it, but way more involved.

Virtual Environments For Robot Simulation

Before your robot even sees daylight, it gets a workout in a virtual world. Think of it as a digital playground where we can try out all sorts of scenarios without risking any actual hardware. Software like Gazebo is pretty popular for this. We take the robot’s 3D model, convert it into a robot description file such as a URDF (Unified Robot Description Format), which describes the robot’s links and the joints connecting them, and then let it loose in the simulation. This lets us see how it might behave in the real world, predicting its actions in a safe, controlled space. It’s a good way to catch problems early, like if it’s going to bump into things or if its movements are all wrong.
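
A URDF is an XML file that describes the robot as links connected by joints. To keep to one language, the sketch below builds a tiny two-link URDF with Python’s standard `xml.etree.ElementTree`. The robot, link, and joint names and all numbers are hypothetical, and a real model would also carry `<visual>`, `<collision>`, and `<inertial>` elements, which simulators such as Gazebo rely on.

```python
import xml.etree.ElementTree as ET


def make_two_link_urdf() -> str:
    """Build a minimal URDF: a base, one arm link, and a revolute 'shoulder' joint."""
    robot = ET.Element("robot", name="demo_arm")
    ET.SubElement(robot, "link", name="base_link")
    ET.SubElement(robot, "link", name="arm_link")

    joint = ET.SubElement(robot, "joint", name="shoulder", type="revolute")
    ET.SubElement(joint, "parent", link="base_link")
    ET.SubElement(joint, "child", link="arm_link")
    ET.SubElement(joint, "origin", xyz="0 0 0.1", rpy="0 0 0")  # joint placed 10 cm above the base
    ET.SubElement(joint, "axis", xyz="0 0 1")                    # rotates about z
    # Revolute joints need limits: angle range (rad), max effort, max velocity.
    ET.SubElement(joint, "limit", lower="-1.57", upper="1.57", effort="10", velocity="1.0")
    return ET.tostring(robot, encoding="unicode")


urdf_text = make_two_link_urdf()
```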

Rigorous Testing Of Robotic Systems

Once the virtual tests are done, it’s time for the real deal. Testing isn’t just one big step; it’s broken down into smaller, manageable parts. We start with unit testing, making sure each little piece of the robot’s software works correctly on its own. Then comes integration testing, where we check if these pieces play nicely together. Finally, system testing looks at the whole robot – hardware and software combined – to make sure it meets all the requirements. This includes checking if it performs as expected, if it’s safe to use, and if it’s reliable over time.

Here’s a quick look at the different testing levels:

- Unit Testing: Verifying individual software modules.

- Integration Testing: Checking how different components work together.

- System Testing: Evaluating the complete robot system against its goals.
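
At the unit-testing level, one function is checked in isolation. As a hypothetical example, suppose the software has a helper that clamps velocity commands to a safe limit before they reach the motors; a minimal test using Python’s built-in `unittest` module could look like this. The function name and the 0.5 m/s limit are made up for illustration.

```python
import unittest

MAX_SPEED_M_S = 0.5  # hypothetical safety limit


def clamp_velocity(v: float, limit: float = MAX_SPEED_M_S) -> float:
    """Limit a commanded velocity to the range [-limit, limit]."""
    return max(-limit, min(limit, v))


class ClampVelocityTest(unittest.TestCase):
    def test_passes_through_safe_values(self):
        self.assertEqual(clamp_velocity(0.2), 0.2)

    def test_clamps_too_fast_forward(self):
        self.assertEqual(clamp_velocity(3.0), MAX_SPEED_M_S)

    def test_clamps_too_fast_reverse(self):
        self.assertEqual(clamp_velocity(-3.0), -MAX_SPEED_M_S)
```

Saved in a file, this runs with `python -m unittest`. Integration and system tests follow the same idea at a larger scope, for example checking that a clamped command actually reaches a simulated motor driver.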

Containerization And Real-World Deployment

Getting the robot’s software ready for the real world often involves containerization. Tools like Docker package the software components together with the libraries and settings they depend on. This helps ensure that the robot’s software runs the same way whether it’s on a developer’s laptop, in a testing rig, or out in its final operating environment. After all that, the robot is finally deployed to its intended location. This careful, step-by-step process is what helps make sure complex robots can actually do their jobs reliably.

Advancements And Future Directions In Robotics

It feels like robotics is really hitting its stride right now, doesn’t it? We’re seeing a lot more than just the industrial arms we’ve known for years. The big news lately is how Artificial Intelligence is changing the game. Think about it: robots are getting smarter, learning from experience, and even starting to understand us better.

The Rise Of AI And Robot Learning

AI is making robots more adaptable. Instead of being programmed for one specific task, they can now learn and adjust. This is a huge step. We’re seeing this in areas like:

- Learning from Demonstration: Robots can watch a human perform a task and then try to replicate it. This is way more intuitive than writing complex code for every single move.

- Reinforcement Learning: Robots can learn through trial and error, figuring out the best way to achieve a goal by getting feedback on their actions. It’s like teaching a pet tricks, but with algorithms.

- Transfer Learning: A robot trained on one task can apply some of that knowledge to a new, similar task. This saves a lot of time and data.
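
The trial-and-error idea behind reinforcement learning can be shown with tabular Q-learning on a toy problem: a robot in a five-cell corridor gets a reward only for reaching the right-hand end. The environment, reward, and hyperparameters (learning rate `alpha`, discount `gamma`, exploration rate `epsilon`) are illustrative choices; real robot learning typically uses neural-network function approximation and far more simulated experience.

```python
import random

N_STATES = 5        # corridor cells 0..4; cell 4 is the goal
ACTIONS = (-1, +1)  # move left or move right


def step(state, action):
    """Toy environment: returns (next_state, reward, done)."""
    nxt = min(max(state + action, 0), N_STATES - 1)  # walls at both ends
    if nxt == N_STATES - 1:
        return nxt, 1.0, True
    return nxt, 0.0, False


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action_index]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: usually take the best-known action, sometimes explore;
            # ties are broken at random.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                a = rng.randrange(2)
            else:
                a = 0 if q[state][0] > q[state][1] else 1
            nxt, reward, done = step(state, ACTIONS[a])
            # Q-learning update toward reward plus discounted best future value.
            target = reward if done else reward + gamma * max(q[nxt])
            q[state][a] += alpha * (target - q[state][a])
            state = nxt
    return q


q = train()
policy = ["left" if q[s][0] > q[s][1] else "right" for s in range(N_STATES - 1)]
```

Nobody tells the robot to go right; it works that out from the reward alone, which is the "feedback on their actions" idea in miniature.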

Large Language Models In Robotics

And then there are Large Language Models (LLMs), like the ones powering chatbots. It might seem odd, but they’re starting to show up in robotics too. LLMs can help robots understand human instructions given in plain language, which is a massive leap from needing precise commands. Imagine telling your robot vacuum, "Clean up the mess in the kitchen, but avoid the rug." LLMs can help robots interpret that and act accordingly. They can also help robots reason about tasks and plan their actions in a more human-like way.

Industry Trends And Major Company Involvement

Big players are definitely jumping in. Companies like NVIDIA are putting a lot of effort into creating tools and hardware specifically for robots, especially humanoids. They’ve announced new chips and software platforms designed to make building and training robots easier. Tesla is still pushing forward with its Optimus robot, and other tech giants are exploring how robots could fit into their future product lines. It’s not just about the big names, though; there’s a lot of activity in startups too, with significant investments flowing into companies working on everything from self-driving technology to general-purpose robots. The whole field feels like it’s on the cusp of something big.

Careers And Opportunities In Robotics

Thinking about a career in robotics? It’s a field that’s really taking off, and honestly, it’s not just for super-geniuses in labs anymore. Lots of different jobs are popping up, and the industry is growing fast. It feels like every week there’s some big company announcing a new robot or a huge investment in the tech. So, what’s actually out there for someone interested in this stuff?

Defining Roles: Developer Versus Researcher

When you look at jobs in robotics, you’ll often see two main paths: developer and researcher. They sound similar, but they’re pretty different. A developer is usually focused on taking existing algorithms and making them work within a robot’s system. Think of it like being a skilled mechanic who knows how to put all the best parts together and make them run smoothly. You need to know how to integrate different software pieces and make sure everything communicates properly. It’s about implementation and making things happen.

On the other hand, a researcher is more about pushing the boundaries. They’re the ones trying to figure out entirely new ways for robots to do things, solving problems that haven’t been solved before. If you enjoy tackling tricky, open-ended challenges and coming up with novel solutions, research might be your thing. It’s less about putting pieces together and more about inventing new pieces.

Diverse Job Opportunities Across Industries

Robotics isn’t just about making factory arms or self-driving cars, though those are big parts of it. The applications are spreading out everywhere. You’ve got roles in:

- Manufacturing: Building and maintaining automated systems on assembly lines.

- Healthcare: Developing robots for surgery, patient care, or lab automation.

- Agriculture: Creating robots for planting, harvesting, or monitoring crops.

- Logistics: Designing robots for warehouses and delivery.

- Aerospace: Working on robots for space exploration or satellite maintenance.

- Service Industries: Robots for cleaning, customer service, or even personal assistance at home.

Each of these areas needs people who can build, program, and manage robotic systems. The specific job titles might vary a lot depending on the company and what they make. For example, you might be an Embedded Engineer writing C++ for a robot’s brain, an Electronic Engineer designing circuit boards, or a Perception Engineer working on how a robot ‘sees’ the world using cameras and sensors.

Essential Skills For Robotics Professionals

So, what kind of skills are actually useful if you want to get into this field? It’s a mix of technical know-how and problem-solving abilities. Here are a few key areas:

- Programming: Proficiency in languages like Python and C++ is pretty standard, usually along with familiarity with ROS (Robot Operating System), a widely used framework of tools and libraries for building robot software. You’ll be writing code to control robot movements, process sensor data, and implement decision-making logic.

- Mathematics and Algorithms: A solid grasp of linear algebra, calculus, and algorithms is important, especially for motion planning, computer vision, and control systems. You don’t need to be a math whiz, but understanding the concepts helps a lot.

- System Integration: Robots are complex systems. Being able to understand how different parts – hardware, software, sensors, actuators – work together is a big plus. It’s about seeing the whole picture.

- Problem-Solving: This is huge. Robots don’t always work perfectly the first time (or the tenth time!). You need to be able to troubleshoot issues, figure out why something isn’t working, and come up with practical solutions. Being persistent and creative when facing challenges is probably one of the most important traits you can have.

- Teamwork: Most robotics projects involve multiple people with different specializations. Being able to communicate effectively and collaborate with others is key to success.
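
As a small taste of the math behind "Mathematics and Algorithms", here is forward kinematics for a two-link arm moving in a plane: given the joint angles, a bit of trigonometry tells you where the gripper ends up. The link lengths and angles are arbitrary example values, and the function name is made up for illustration.

```python
import math


def forward_kinematics(theta1, theta2, l1=1.0, l2=0.5):
    """End-effector (x, y) of a planar two-link arm.
    Angles are in radians; theta2 is measured relative to the first link."""
    elbow_x = l1 * math.cos(theta1)
    elbow_y = l1 * math.sin(theta1)
    x = elbow_x + l2 * math.cos(theta1 + theta2)
    y = elbow_y + l2 * math.sin(theta1 + theta2)
    return x, y


# Upper arm pointing straight up, forearm bent back to horizontal: about (0.5, 1.0).
x, y = forward_kinematics(math.pi / 2, -math.pi / 2)
```

Motion planning usually needs the reverse question (which joint angles reach a given point?), known as inverse kinematics, and larger arms express the same idea with chains of transformation matrices, which is where the linear algebra comes in.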

Wrapping Things Up

So, we’ve gone through a lot in this series, from the basic ideas behind robotics to how projects actually get built and what’s happening in research right now. It’s clear that robotics is a huge field, with lots of different parts working together. While we’re not quite at the sci-fi robot butler stage yet, things are moving fast. With big companies jumping in and communities sharing knowledge, it’s a pretty exciting time to be involved or just curious about robots. Whether you’re thinking about a career or just want to understand the tech better, there are tons of resources out there. Keep exploring, keep learning, and who knows what robots will be doing next!